KVM I/O slowness on RHEL 6

http://www.ilsistemista.net/index.php/virtualization/11-kvm-io-slowness-on-rhel-6.html?limitstart=0

Over a year has passed since my last virtual machine hypervisor comparison, so last week I was preparing an article with a head-to-head comparison between RHEL 6 KVM and Oracle VirtualBox 4.0. I spent several days writing automated scripts to evaluate the two products from different points of view, and I was quite confident that the benchmark session would complete without much trouble. So I installed Red Hat Enterprise Linux 6 (license courtesy of Red Hat Inc. - thank you guys!) on my workstation and began installing the virtual images.

However, the unexpected happened: using KVM, a Windows Server 2008 R2 Foundation installation took almost 3 hours, while it normally completes in about 30-45 minutes. Similarly, the base system installation that precedes the “real” Debian 6.0 installation took over 5 minutes, when it normally completes in about 1 minute. In short: the KVM virtual machines were affected by an awfully slow disk I/O subsystem. In previous tests I had seen that the KVM I/O subsystem was a bit slower, but not by this much; clearly, something was impairing my KVM I/O speed. I tried different combinations of virtualized disk controllers (IDE or VirtIO) and cache settings, but without success. I also changed my physical disk filesystem to EXT3, to rule out any hypothetical EXT4 speed regression, but again with no results: the slow KVM I/O problem remained.

I needed a solution, and a real one: with such awfully slow I/O, the KVM guests were virtually unusable. After some wasted hours, I decided to run some targeted, systematic tests on VM image formats, disk controllers, cache settings and preallocation policies. Now that I have found the solution and my KVM guests run at full speed, I am very happy, and I would like to share my results with you.

Testbed and methods

First, let me describe the workstation used in this round of tests; system specifications are:

- CPU: Core-i7 860 (quad cores, eight threads) @ 2.8 GHz and with 8 MB L3 cache

- RAM: 8 GB DDR3 (4x 2 GB) @ 1333 MHz

- DISKS: 4x WD Green 1 TB in software RAID 10 configuration

- OS: Red Hat Enterprise Linux 6 64 bit

The operating system was installed with the “basic server” profile, and then I selectively installed the other software required (libvirtd, qemu, etc). The key system software versions are:

- kernel version 2.6.32-71.18.1.el6.x86_64

- qemu-kvm version 0.12.1.2-2.113.el6_0.6.x86_64

- libvirt version 0.8.1-27.el.x86_64

- virt-manager version 0.8.4-8.el6.noarch

As stated before, initially all host-system partitions were formatted in EXT4, but to avoid any possible problem related to the new filesystem, I changed the VM-storing partition to EXT3.

To measure guest I/O speed, I timed the Debian 6.0 (x86_64 version) base system installation. This is the step that, during the Debian installation, immediately follows the partition creation and formatting phase.

Let me thank Red Hat Inc. again, and especially Justin Clift, for giving me a free RHEL 6 license.

OK, I know that you want to know why on earth KVM I/O was so slow. However, first you have to understand something about caching and preallocation policies.

On caching and preallocation

Note: in this page I try to condense some hard-to-explain concepts into very little space, so I had to make some approximations. I ask the expert reader to forgive me for the over-simplification.

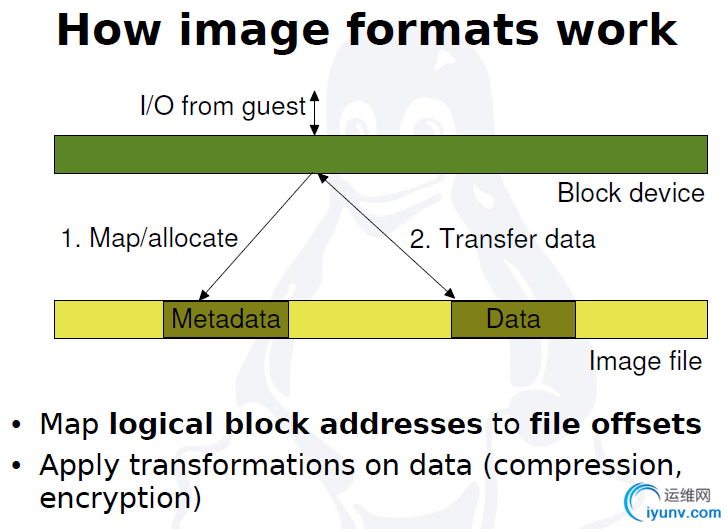

Normally, a virtual guest system uses a host-side file to store its data: this file represents a virtual disk, which the guest uses as a normal, physical disk. However, from the host's point of view this virtual disk is a normal data file, and it may be subject to caching and preallocation.

In this context, caching means keeping some disk-related data in physical RAM. When the cache holds in RAM only data previously read from the disk, we speak of a read cache, or write-through cache. When RAM also holds data that will be flushed to disk later, we speak of a write cache, or write-back cache. A write-back cache, by absorbing write requests in fast RAM, gives higher performance; however, it is also more prone to data loss than a write-through one, as the latter caches only read requests and writes any data to disk immediately.
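The difference between the two write policies can be felt directly on the host with a small experiment. The sketch below is only an analogy, not a description of how QEMU handles guest writes internally: it writes the same 1 MiB twice with GNU `dd`, once through the host page cache (write-back style) and once with `oflag=dsync`, which makes every write wait for the storage device (write-through style). The file names, block size and count are arbitrary choices for illustration.

```shell
#!/bin/sh
# Analogy only: buffered writes versus synchronous writes on the host.
# Assumes GNU dd (for oflag=dsync); names and sizes are illustrative.
set -eu

workdir=$(mktemp -d)

# Write-back style: data lands in the host page cache and is flushed later.
dd if=/dev/zero of="$workdir/writeback.bin" bs=4k count=256 2>/dev/null

# Write-through style: each 4 KiB write waits for the storage device.
dd if=/dev/zero of="$workdir/writethrough.bin" bs=4k count=256 oflag=dsync 2>/dev/null

# Both files end up with the same size; only the write path differs.
wb_size=$(wc -c < "$workdir/writeback.bin")
wt_size=$(wc -c < "$workdir/writethrough.bin")
echo "write-back file:    $wb_size bytes"
echo "write-through file: $wt_size bytes"

rm -rf "$workdir"
```

Prefixing each `dd` line with `time` on a real disk will typically show the synchronous run taking longer, although the gap depends heavily on the storage and on where the temporary directory lives.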

As disk I/O speed is so important, Linux and Windows generally use a write-back policy with periodic flushes to the physical disk. However, when using a hypervisor to virtualize a guest system, you can effectively cache things twice (once in host memory and once in guest memory), so it is often better to disable host-side caching on the virtual disk file and let the guest system manage its own caching. Moreover, a host-side write-back policy on the virtual disk file significantly increases the risk of data loss in case of a host crash or power failure.

KVM lets you choose one of three cache policies: no caching, write-through (read-only cache) and write-back (read and write cache). It also has a “default” setting that is effectively an alias for write-through. As you will see, picking the right caching scheme is crucial for fast guest I/O.

Now, a few words about preallocation: this is the process of preparing the virtual disk file in advance to store the data written by the guest system. Generally, preallocating a file means filling it with zeros, so that the host system has to reserve in advance all the disk space assigned to the guest. In this way, when the guest writes to the virtual disk, it never waits for the host system to reserve the required space. Sometimes preallocation does not fill the target file with zeros, but only prepares some of its internal data structures: in this case, we talk about metadata preallocation. The RAW disk format can use full preallocation, while QCOW2 currently uses metadata preallocation (there are some patches that force full preallocation, but they are experimental).
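To make the idea of full preallocation concrete, here is a minimal host-side sketch that compares a sparse file with a zero-filled one of the same nominal size. It assumes GNU coreutils (`truncate`, `dd`, `du`); the file names and the 16 MiB size are illustrative only, and the space reported as used depends on the host filesystem.

```shell
#!/bin/sh
# Sketch: a sparse file versus a zero-filled (fully preallocated) one.
# Assumes GNU coreutils; names and the 16 MiB size are illustrative.
set -eu

workdir=$(mktemp -d)

# Sparse file: the size is recorded, but data blocks are not written.
truncate -s 16M "$workdir/sparse.img"

# Zero-filled file: every block is actually written by dd.
dd if=/dev/zero of="$workdir/full.img" bs=1M count=16 2>/dev/null

# Apparent size in bytes: identical for both files.
sparse_size=$(wc -c < "$workdir/sparse.img")
full_size=$(wc -c < "$workdir/full.img")

# Space actually used on the host, in KiB (depends on the filesystem).
sparse_used=$(du -k "$workdir/sparse.img" | cut -f1)
full_used=$(du -k "$workdir/full.img" | cut -f1)

echo "sparse.img: $sparse_size bytes apparent, $sparse_used KiB used"
echo "full.img:   $full_size bytes apparent, $full_used KiB used"

rm -rf "$workdir"
```

On most ordinary filesystems the sparse file reports almost no space used, which is exactly the space the host still has to reserve later, while the guest is waiting on its writes.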

Why speak about caching and preallocation? Because the super-slow KVM I/O speed really boils down to these two parameters, as we are going to see.

RAW image format performance

Let's begin with some tests on the most basic disk image format: the RAW format. RAW images are very fast, but they lack a critical feature: the ability to take real, fast snapshots of the virtual disk. So they can be used only in situations where you do not need real snapshot support (or where you have snapshotting capability at the filesystem level, but that is another story).

RAW reads and writes are fast, but snapshots are slow.

How does the RAW format perform, and how does caching affect the results?

As you can see, as long as you stay away from the write-through cache, RAW images are very fast. Note that for RAW images with no caching or with the write-back policy, preallocation has only a small influence.

What about the much more feature-rich QCOW2 format?

QCOW2 image format performance

The QCOW2 format is the default QEMU/KVM image format. It has some very interesting features, such as compression and encryption, but above all it enables the use of real, file-level snapshots.

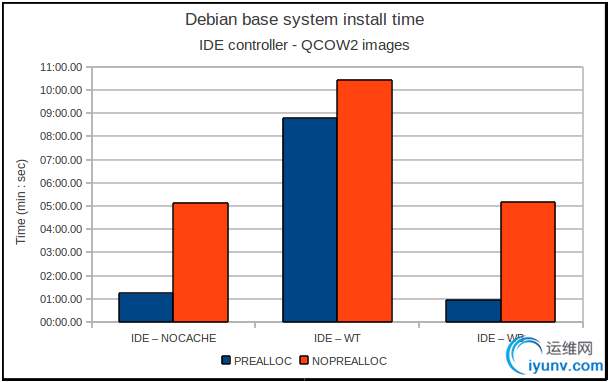

But how does it perform?

Mmm... without metadata preallocation, it performs very badly. Enable metadata preallocation, stay away from the write-through cache, and it performs very well.

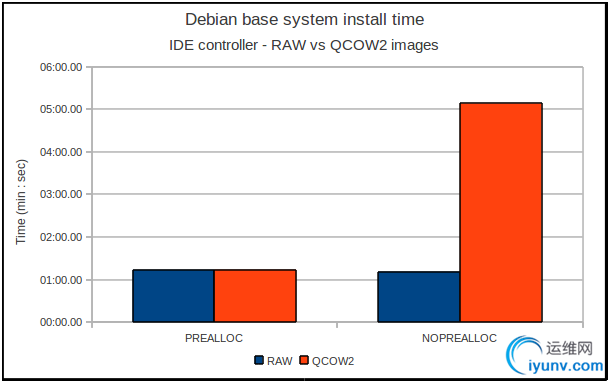

To better compare it to the RAW format, I made a chart with the no-caching RAW and QCOW2 results:

Without metadata preallocation the QCOW2 format is 5X slower than RAW, while with metadata preallocation enabled the two are practically tied. This shows that while the RAW format is primarily influenced by the caching setting, QCOW2 depends heavily on both the preallocation and the caching policies.

The influence of the virtualized I/O controller

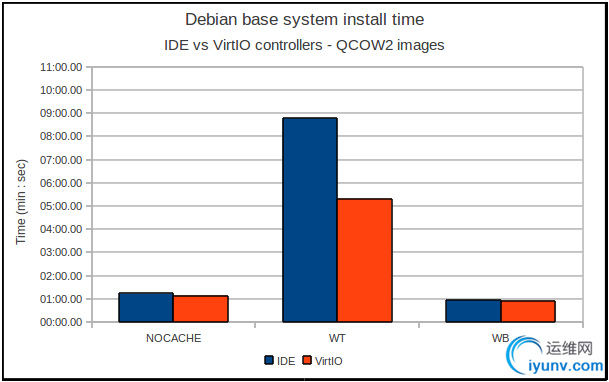

Another important thing to check is the influence of the virtualized I/O controller presented to the guest. KVM lets you use not only the default IDE virtual controller, but also a new, paravirtualized I/O controller called VirtIO. This virtualized controller promises better speed and lower CPU usage.

How does it affect the results?

As you can see, the write-through scenario is the most affected one, while with the no-caching and write-back policies it has a lesser effect.

This does not mean that VirtIO is an unimportant project: the scope of this test was only to make sure that it does not introduce any I/O slowness. In a following article I will analyze this very promising driver in a much more complete manner.

I/O slowness cause: bad default settings

So, we can state that to obtain good I/O throughput from the QCOW2 format, two conditions must be met:

- don't use a write-through cache

- always use metadata preallocation.

However, with the virt-manager GUI that is normally used to create virtual disks and guest systems on Red Hat and Fedora, you cannot enable metadata preallocation on QCOW2 files. While the storage volume creation interface lets you specify whether you want to preallocate the virtual disk, this function actually works only with RAW files; if you use a QCOW2 file, it does nothing.

To create a file with metadata preallocation, you must open a terminal and issue the “qemu-img create” command. For example, if you want to create a ~10 GB QCOW2 with metadata preallocation, you must issue the command “qemu-img create -f qcow2 -o size=10000000000,preallocation=metadata file.img”.
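The command above can be wrapped in a small script that also inspects the result. This is only a sketch: the image path is a hypothetical throwaway location (override it with the `IMG` environment variable, a name introduced here for illustration), and the size is passed as a trailing `10G` argument instead of the `size=` option, which `qemu-img create` also accepts.

```shell
#!/bin/sh
# Sketch: create a QCOW2 image with metadata preallocation and inspect it.
# The default path is a hypothetical example; override it with IMG=/path/to/file.
set -eu

img="${IMG:-${TMPDIR:-/tmp}/guest-disk.qcow2}"

if command -v qemu-img >/dev/null 2>&1; then
    # Same options as in the text, with the size given as a trailing argument.
    qemu-img create -f qcow2 -o preallocation=metadata "$img" 10G
    # Report the image format, virtual size and space used on the host.
    qemu-img info "$img"
    created=yes
else
    echo "qemu-img not found; install the QEMU image tools first" >&2
    created=no
fi
```

The resulting file can then be attached to a guest as an existing storage volume instead of letting the GUI create a new one.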

Moreover, the default caching scheme is write-through. While the guest creation wizard generally disables the host-side cache correctly, if you later add another virtual disk to the guest, the disk is often added with the “default” caching policy, which is write-through.
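One way to spot disks that were added with the default policy is to look at the `cache` attribute of each disk `<driver>` element in the guest's libvirt XML. The helper below is a rough text filter under stated assumptions, not an XML parser: the function name `find_slow_cache_drivers` is made up for this sketch, it assumes one element per line as `virsh dumpxml` normally prints, and the sample XML is hand-written rather than real output.

```shell
#!/bin/sh
# Sketch: list disk <driver> lines whose cache policy is default or write-through.
# Rough text filter for libvirt domain XML on standard input, not an XML parser.
set -eu

find_slow_cache_drivers() {
    # Keep driver lines that carry an image type (disk drivers), then drop
    # the ones that explicitly set cache to none or writeback.
    grep -E "<driver .*type=" \
        | grep -v -E "cache=['\"](none|writeback)['\"]" \
        || true
}

# Self-contained demonstration on a hand-written sample (not real output).
sample_xml="<disk type='file' device='disk'>
  <driver name='qemu' type='qcow2'/>
</disk>
<disk type='file' device='disk'>
  <driver name='qemu' type='raw' cache='none'/>
</disk>
<disk type='file' device='disk'>
  <driver name='qemu' type='qcow2' cache='writethrough'/>
</disk>"

flagged=$(printf '%s\n' "$sample_xml" | find_slow_cache_drivers)
printf '%s\n' "$flagged"
```

On a real host the same function can be fed from libvirt, for example `virsh dumpxml myguest | find_slow_cache_drivers`, where `myguest` is a placeholder domain name. Any line it prints is a disk worth switching to the “none” policy.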

So, if you are using Red Hat Enterprise Linux or Fedora Linux as the host operating system for your virtualization server and you plan to use the QCOW2 format, remember to manually create preallocated virtual disk files and to use the “none” cache policy (you can also use the “write-back” policy, but be warned that your guests will be more prone to data loss).

Conclusions

First of all, don't get me wrong: I'm very excited about the progress of KVM and libvirt. We now have not only a very robust hypervisor, but also some critical paravirtualized drivers, a good graphical interface and excellent host / guest remote management capabilities. I would like to publicly thank all the talented guys involved in building these great and important projects: thank you boys!

However, it's a shame that the current virt-manager GUI does not allow metadata preallocation on QCOW2 images, as this format is much more feature-rich than the RAW one. Moreover, I would like to see not only the guest creation wizard but all the guest editing windows default to the no-cache policy for virtual disks. This is a secondary problem, though: it is not so difficult to manually change a parameter...

The first problem, no metadata preallocation on QCOW2, is far more serious, as it cannot be overcome without resorting to the command line. This problem should really be corrected as soon as possible. In the meantime, you can use the workaround described above, and remember to always check your virtual disk caching policy: don't use the “default” or “write-through” settings. I hope that this article can help you get the most from the very good KVM, libvirt and related projects.
