[Hadoop 2.2 + Solr 4.5] Series, Part 3: Generating Index Files with MapReduce + Lucene

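Following the previous post on configuring and starting Hadoop 2.2, we will not explain the MapReduce algorithm in detail here. Instead we go straight to MapReduce + Lucene.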

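1) Approach:

The Map step reads the files from HDFS, the index is generated on the local disk, and finally the index files are uploaded to HDFS. The code is modeled on Nutch.

Hadoop 2.x does not seem to ship the Eclipse plugin that earlier versions had, so we write the MapReduce program in plain Eclipse, upload the resulting jar to Master.Hadoop, and run it there.

The project mainly references the following Hadoop 2.2 jars: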

hadoop-2.2.0\share\hadoop\
  |--common\
  |    |--lib\*.jar
  |    |--hadoop-common-2.2.0.jar
  |    |--hadoop-nfs-2.2.0.jar
  |--hdfs\
  |    |--hadoop-hdfs-2.2.0.jar
  |    |--hadoop-hdfs-nfs-2.2.0.jar
  |--mapreduce\
  |    |--*.jar
  |--tools\lib\
  |    |--*.jar
  |--yarn\
       |--*.jar

and four Lucene 4.5 jars:

lucene-4.5.0\core\lucene-core-4.5.0.jar

lucene-4.5.0\analysis\common\lucene-analyzers-common-4.5.0.jar

lucene-4.5.0\queries\lucene-queries-4.5.0.jar

lucene-4.5.0\queryparser\lucene-queryparser-4.5.0.jar

2) The code:

HDFSDocument.java

package com.yu.mapred.lib;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;
import java.util.HashMap;
import java.util.Iterator;

import org.apache.hadoop.io.Writable;

/*
 * A custom Hadoop output value type. Its content is a map of string fields.
 */
public class HDFSDocument implements Writable {

    HashMap<String, String> fields = new HashMap<String, String>();

    public void setFields(HashMap<String, String> fields) {
        this.fields = fields;
    }

    public HashMap<String, String> getFields() {
        return this.fields;
    }

    @Override
    public void readFields(DataInput in) throws IOException {
        fields.clear();
        String key = null, value = null;
        int size = in.readInt();
        for (int i = 0; i < size; i++) {
            // Read two strings in turn to form one map entry
            key = in.readUTF();
            value = in.readUTF();
            fields.put(key, value);
        }
    }

    @Override
    public void write(DataOutput out) throws IOException {
        String key = null, value = null;
        Iterator<String> iter = fields.keySet().iterator();
        // Entry count first, so readFields knows how many pairs follow
        out.writeInt(fields.size());
        while (iter.hasNext()) {
            key = iter.next();
            value = fields.get(key);
            // Write the two strings in turn
            out.writeUTF(key);
            out.writeUTF(value);
        }
    }
}
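To make the wire format of HDFSDocument easier to see, here is a minimal sketch that reproduces the same layout (an int entry count followed by key/value pairs written with writeUTF) using only java.io, without any Hadoop dependency. The class name FieldMapCodec and its two helper methods are hypothetical and are not part of the project.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: mirrors the byte layout used by HDFSDocument.
public class FieldMapCodec {

    // Same layout as HDFSDocument.write: entry count, then key/value pairs.
    static byte[] encode(Map<String, String> fields) throws IOException {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(buffer)) {
            out.writeInt(fields.size());
            for (Map.Entry<String, String> entry : fields.entrySet()) {
                out.writeUTF(entry.getKey());
                out.writeUTF(entry.getValue());
            }
        }
        return buffer.toByteArray();
    }

    // Same order as HDFSDocument.readFields: count first, then the pairs.
    static Map<String, String> decode(byte[] bytes) throws IOException {
        Map<String, String> fields = new LinkedHashMap<>();
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(bytes))) {
            int size = in.readInt();
            for (int i = 0; i < size; i++) {
                String key = in.readUTF();
                String value = in.readUTF();
                fields.put(key, value);
            }
        }
        return fields;
    }

    public static void main(String[] args) throws IOException {
        Map<String, String> fields = new LinkedHashMap<>();
        fields.put("0", "a,b,c");
        byte[] bytes = encode(fields);
        System.out.println(bytes.length + " bytes, round trip ok: " + decode(bytes).equals(fields));
    }
}
```

One thing to keep in mind: writeUTF throws a UTFDataFormatException when the encoded string is longer than 65535 bytes. If the input lines can be that long, a different string encoding would be needed, for example Hadoop's Text.writeString and Text.readString.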

HDFSDocumentOutputFormat.java

package com.yu.mapred.lib;

import java.io.File;
import java.io.IOException;
import java.util.HashMap;
import java.util.Iterator;
import java.util.Random;

import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.FileOutputFormat;
import org.apache.hadoop.mapred.JobConf;
import org.apache.hadoop.mapred.RecordWriter;
import org.apache.hadoop.mapred.Reporter;
import org.apache.hadoop.mapred.lib.MultipleOutputFormat;
import org.apache.hadoop.util.Progressable;
import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.analysis.standard.StandardAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.document.Field;
import org.apache.lucene.document.StringField;
import org.apache.lucene.index.IndexWriter;
import org.apache.lucene.index.IndexWriterConfig;
import org.apache.lucene.index.LogDocMergePolicy;
import org.apache.lucene.index.LogMergePolicy;
import org.apache.lucene.store.FSDirectory;
import org.apache.lucene.util.Version;

/**
 * Usage:
 *   job.setOutputValueClass(HDFSDocument.class);
 *   job.setOutputFormat(HDFSDocumentOutputFormat.class);
 */
public class HDFSDocumentOutputFormat extends
        MultipleOutputFormat<Text, HDFSDocument> {

    protected static class LuceneWriter {

        private Path perm;
        private Path temp;
        private FileSystem fs;
        private IndexWriter writer;

        public void open(JobConf job, String name) throws IOException {
            this.fs = FileSystem.get(job);
            perm = new Path(FileOutputFormat.getOutputPath(job), name);

            // Temporary local path that holds the index while it is being built
            temp = job.getLocalPath("index/_" + Integer.toString(new Random().nextInt()));
            fs.delete(perm, true);

            // Configure the IndexWriter (I am not very familiar with Lucene's indexing parameters)
            Analyzer analyzer = new StandardAnalyzer(Version.LUCENE_45);
            IndexWriterConfig conf = new IndexWriterConfig(Version.LUCENE_45, analyzer);
            conf.setMaxBufferedDocs(100000);
            LogMergePolicy mergePolicy = new LogDocMergePolicy();
            mergePolicy.setMergeFactor(100000);
            mergePolicy.setMaxMergeDocs(100000);
            conf.setMergePolicy(mergePolicy);
            conf.setRAMBufferSizeMB(256);

            writer = new IndexWriter(
                    FSDirectory.open(new File(fs.startLocalOutput(perm, temp).toString())),
                    conf);
        }

        public void close() throws IOException {
            // Commit the index and close the IndexWriter
            writer.commit();
            writer.close();

            // Copy the local index to HDFS
            fs.completeLocalOutput(perm, temp);
            fs.createNewFile(new Path(perm, "index.done"));
        }

        /*
         * Accepts an HDFSDocument, reads its entries, and indexes them.
         */
        public void write(HDFSDocument doc) throws IOException {
            String key = null;
            HashMap<String, String> fields = doc.getFields();
            Iterator<String> iter = fields.keySet().iterator();
            while (iter.hasNext()) {
                key = iter.next();
                Document luceneDoc = new Document();

                // With Field.Index.ANALYZED, Chinese text is tokenized by default,
                // and a lookup by Term would then fail to match.
                // StringField indexes each value as a single, un-analyzed token.
                luceneDoc.add(new StringField("key", key, Field.Store.YES));
                luceneDoc.add(new StringField("value", fields.get(key), Field.Store.YES));
                writer.addDocument(luceneDoc);
            }
        }
    }

    @Override
    protected String generateFileNameForKeyValue(Text key, HDFSDocument value,
            String leaf) {
        return new Path(key.toString(), leaf).toString();
    }

    @Override
    protected Text generateActualKey(Text key, HDFSDocument value) {
        return key;
    }

    @Override
    public RecordWriter<Text, HDFSDocument> getBaseRecordWriter(
            final FileSystem fs, JobConf job, String name,
            final Progressable progress) throws IOException {
        final LuceneWriter writer = new LuceneWriter();
        writer.open(job, name);

        return new RecordWriter<Text, HDFSDocument>() {

            @Override
            public void write(Text key, HDFSDocument doc) throws IOException {
                writer.write(doc);
            }

            @Override
            public void close(Reporter reporter) throws IOException {
                writer.close();
            }
        };
    }
}

And the main MapReduce program:

package com.yu.mapred.lib;

import java.io.IOException;
import java.util.Date;
import java.util.HashMap;
import java.util.Iterator;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.FileInputFormat;
import org.apache.hadoop.mapred.FileOutputFormat;
import org.apache.hadoop.mapred.JobClient;
import org.apache.hadoop.mapred.JobConf;
import org.apache.hadoop.mapred.MapReduceBase;
import org.apache.hadoop.mapred.Mapper;
import org.apache.hadoop.mapred.OutputCollector;
import org.apache.hadoop.mapred.Reducer;
import org.apache.hadoop.mapred.Reporter;
import org.apache.hadoop.mapred.TextInputFormat;
import org.apache.hadoop.mapreduce.filecache.DistributedCache;

@SuppressWarnings("deprecation")
public class TestLucene {

    public static class MapHdfsDocument extends MapReduceBase implements
            Mapper<LongWritable, Text, Text, HDFSDocument> {

        // Every record is emitted under the same key, so all of them land in one index
        private Text word = new Text("mmmm");

        public void map(LongWritable key, Text value,
                OutputCollector<Text, HDFSDocument> output, Reporter reporter)
                throws IOException {
            // key is the byte offset of the line, value is the line itself
            HDFSDocument document = new HDFSDocument();
            HashMap<String, String> map = document.getFields();
            map.put(key.toString(), value.toString());
            output.collect(word, document);
        }
    }

    public static class ReduceLuceneIndex extends MapReduceBase implements
            Reducer<Text, HDFSDocument, Text, HDFSDocument> {

        public void reduce(Text key, Iterator<HDFSDocument> values,
                OutputCollector<Text, HDFSDocument> output, Reporter reporter)
                throws IOException {
            // Pass every document through; the output format does the indexing
            while (values.hasNext()) {
                output.collect(key, values.next());
            }
        }
    }

    public static void main(String[] args) throws Exception {
        // Input and output paths are hard-coded for this test
        String[] ars = new String[] { "/input/2013_10_21_00_00-2013_10_22_00_00.csv",
                "/output/test_lucene_" + new Date().getTime() % 100 };

        JobConf job = new JobConf(TestLucene.class);
        job.setJobName("testmapred-lucene");
        job.set("mapred.job.tracker", "Master.Hadoop:9001");

        // Put the Lucene jars stored on HDFS on the task classpath
        DistributedCache.addFileToClassPath(new Path("/jars/lucene-4.5/lucene-analyzers-common-4.5.0.jar"), job);
        DistributedCache.addFileToClassPath(new Path("/jars/lucene-4.5/lucene-core-4.5.0.jar"), job);
        DistributedCache.addFileToClassPath(new Path("/jars/lucene-4.5/lucene-queries-4.5.0.jar"), job);
        DistributedCache.addFileToClassPath(new Path("/jars/lucene-4.5/lucene-queryparser-4.5.0.jar"), job);

        // The following attempts did not work:
        // job.addResource(new Path("/jars/lucene-4.5/lucene-analyzers-common-4.5.0.jar"));
        // job.addResource(new Path("/jars/lucene-4.5/lucene-core-4.5.0.jar"));
        // job.addResource(new Path("/jars/lucene-4.5/lucene-queries-4.5.0.jar"));
        // job.addResource(new Path("/jars/lucene-4.5/lucene-queryparser-4.5.0.jar"));
        // job.setJar("/jars/lucene-4.5/lucene-analyzers-common-4.5.0.jar");
        // job.setJar("/jars/lucene-4.5/lucene-core-4.5.0.jar");
        // job.setJar("/jars/lucene-4.5/lucene-queries-4.5.0.jar");
        // job.setJar("/jars/lucene-4.5/lucene-queryparser-4.5.0.jar");

        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(HDFSDocument.class);
        job.setMapperClass(MapHdfsDocument.class);
        job.setReducerClass(ReduceLuceneIndex.class);
        job.setInputFormat(TextInputFormat.class);
        job.setOutputFormat(HDFSDocumentOutputFormat.class);

        FileInputFormat.setInputPaths(job, new Path(ars[0]));
        FileOutputFormat.setOutputPath(job, new Path(ars[1]));

        JobClient.runJob(job);
    }
}
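A side note on the output path in main: the suffix getTime() % 100 can take only 100 different values, and a job whose output directory already exists is rejected at submission, so repeated test runs will eventually collide. A possible alternative, shown here only as a sketch, is a timestamp-based name. The class OutputPathName and the chosen date pattern are assumptions and are not part of the original program.

```java
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.Locale;

// Hypothetical helper: builds an output directory name from a full timestamp.
public class OutputPathName {

    static String outputPath(Date now) {
        // Year through milliseconds, so two runs rarely share a name
        SimpleDateFormat format = new SimpleDateFormat("yyyyMMdd_HHmmss_SSS", Locale.ROOT);
        return "/output/test_lucene_" + format.format(now);
    }

    public static void main(String[] args) {
        System.out.println(outputPath(new Date()));
    }
}
```

In TestLucene.main, the second element of ars could then be replaced by OutputPathName.outputPath(new Date()).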


3) Uploading files to HDFS

$ hadoop dfs -mkdir /input

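$ hadoop dfs -put [local file] /input/2013_10_21_00_00-2013_10_22_00_00.csv

-- In the same way, create a /jars directory on HDFS and upload the four Lucene jars to it (the program expects them under /jars/lucene-4.5/). The MapReduce program loads these jars from HDFS at run time.

--- Run the program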

$ hadoop jar testlucene.jar com.yu.mapred.lib.TestLucene

4) Note: this project is mainly a test of Hadoop MapReduce + Lucene, so the map method is only a throwaway. Write a map method that fits your own requirements.
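As one possible starting point for a more useful map method, the sketch below splits a CSV line into named fields that could be copied into HDFSDocument.getFields(). The column names are pure assumptions, since the layout of the real CSV file is not shown here, and the naive split does not handle quoted commas.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: turns one CSV line into a field map.
public class CsvLineFields {

    // Assumed column names; replace them with the real header of your file.
    static final String[] COLUMNS = { "timestamp", "host", "message" };

    static Map<String, String> toFields(String line) {
        // Limit -1 keeps trailing empty cells instead of dropping them
        String[] cells = line.split(",", -1);
        Map<String, String> fields = new LinkedHashMap<>();
        // Cells beyond the known columns are ignored; missing cells are skipped
        for (int i = 0; i < COLUMNS.length && i < cells.length; i++) {
            fields.put(COLUMNS[i], cells[i].trim());
        }
        return fields;
    }

    public static void main(String[] args) {
        System.out.println(toFields("2013-10-21 00:00:01, node1, started"));
    }
}
```

Inside map, the result could be added with document.getFields().putAll(CsvLineFields.toFields(value.toString())). Note that LuceneWriter.write as written turns every map entry into its own Lucene document, so it would also have to be adapted to add all entries to a single Document if one document per CSV row is wanted.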

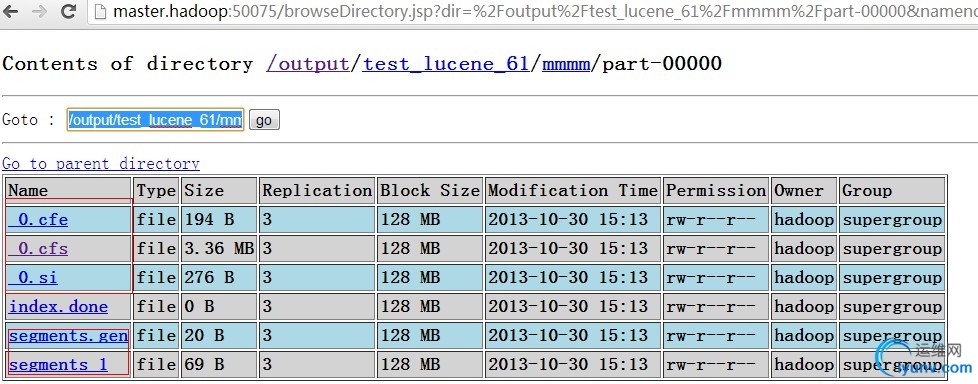

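Once the index has been generated, it can be browsed through the HDFS web interface.

The index.done file is only a marker indicating that the index files on HDFS have been completely written.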
