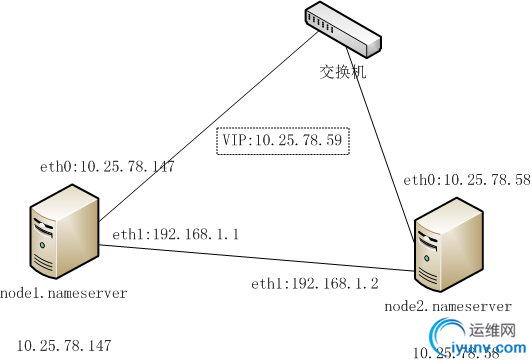

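The cluster runs a primary and a standby NameServer, referred to as Master and Slave. When the Master fails, for example because the physical server hosting it goes down, a virtual IP (VIP) is moved to the Slave so that the service stays available. Hardware failure is not the only trigger: the VIP must also switch to the Slave automatically when the NameServer service itself becomes unhealthy.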

Automatic failover is implemented with Heartbeat + Pacemaker.

The deployment steps below were carried out in the following operating system environment:

- CentOS release 5.9 (Final)

- Linux 2.6.18-348.el5

- x86_64

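The deployment diagram is as follows:

Parameter settings:

| No. | IP address | hostname | Other deployed components |
| --- | --- | --- | --- |
| 1 | 10.25.78.58 | node2.nameserver | DataServer |
| 2 | 10.25.78.147 | node1.nameserver | Application, DataServer |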

The detailed steps are as follows:

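- Install the components. Install Heartbeat and Pacemaker on both the NameServer Master and the NameServer Slave. Heartbeat provides the messaging infrastructure between nodes, and Pacemaker provides cluster resource management.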

# Download the Pacemaker repository definition:

wget -O /etc/yum.repos.d/pacemaker.repo http://clusterlabs.org/rpm/epel-5/clusterlabs.repo

# Download and install the EPEL repository:

wget http://dl.fedoraproject.org/pub/epel/5/x86_64/epel-release-5-4.noarch.rpm

rpm -Uvh epel-release-5-4.noarch.rpm

# Install the Heartbeat and Pacemaker packages:

yum install pacemaker.x86_64 -y

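- Configure the NameServer Master and the NameServer Slave. Assume TFS is installed in /usr/local/tfs2 and its working directory is /data/tfs. On both machines, edit the NameServer configuration file /usr/local/tfs2/conf/ns.conf. The two items that matter here are ip_addr, which holds the VIP, and ip_addr_list, which holds the addresses of the two machines. Part of the configuration is shown below: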

[public]

#log file size default 1GB

log_size=168435456

#log file num default 64

log_num = 8

#log file level default debug

log_level = error

#main queue size default 10240

task_max_queue_size = 10240

#listen port

port = 10000

#work directory

work_dir = /data/tfs

#device name

dev_name= eth0

#work thread count default 4

thread_count = 4

#ip addr(vip)

ip_addr = 10.25.78.59

[nameserver]

safe_mode_time = 10

ip_addr_list = 10.25.78.58|10.25.78.147

group_mask = 255.255.255.255

#

block_max_size = 75497472

#

max_replication = 1

#

min_replication = 1

# use capacity ratio

use_capacity_ratio = 98

# block use ratio

block_max_use_ratio = 98

#heart interval time(seconds)

heart_interval = 2

# cluster id default 1

cluster_id = 1

# block lost, replicate ratio

replicate_ratio_ = 50

...
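The two HA-related keys are easy to mix up, so a quick sanity check can help. The following is a minimal sketch, assuming that ip_addr holds the VIP and ip_addr_list holds exactly the two physical addresses, as in the configuration above. The helper name `get_key` and the temporary sample file are illustrative and not part of TFS.

```shell
#!/bin/sh
# Sketch: sanity-check ip_addr and ip_addr_list in an ns.conf-style file.
# The sample below copies only the relevant lines from the configuration above.
conf=$(mktemp)
cat > "$conf" <<'EOF'
[public]
port = 10000
dev_name= eth0
ip_addr = 10.25.78.59
[nameserver]
ip_addr_list = 10.25.78.58|10.25.78.147
EOF

# get_key FILE KEY: print the value of the first "KEY = value" line (hypothetical helper).
get_key() {
    sed -n "s/^[[:space:]]*$2[[:space:]]*=[[:space:]]*//p" "$1" | head -n 1
}

vip=$(get_key "$conf" ip_addr)
list=$(get_key "$conf" ip_addr_list)

# Count the '|'-separated addresses in ip_addr_list.
count=$(printf '%s\n' "$list" | tr '|' '\n' | grep -c .)

status=ok
[ "$count" -eq 2 ] || status="expected 2 addresses in ip_addr_list, found $count"
# Assumption: the VIP must differ from both physical addresses.
if printf '%s\n' "$list" | tr '|' '\n' | grep -qxF "$vip"; then
    status="ip_addr (VIP) must not be one of the physical addresses"
fi

echo "vip=$vip list=$list check=$status"
rm -f "$conf"
```

To check a real installation, point `conf` at /usr/local/tfs2/conf/ns.conf on each node instead of the sample file.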

- Configure the Heartbeat cluster. Change to the directory /usr/local/tfs2/scripts/ha, which contains the following files:

authkeys authkeys.tmp deploy ha.cf NameServer nsdep ns.xml RootServer rsdep rs.xml

Edit /usr/local/tfs2/scripts/ha/ha.cf: set the network interface eth1 as the heartbeat interface and add the hosts node1.nameserver and node2.nameserver to the cluster.

debugfile /var/log/ha-debug

debug 1

keepalive 2

warntime 5

deadtime 10

initdead 30

auto_failback off

autojoin none

#bcast eth2

bcast eth1

#bcast eth3

#mcast bond0 225.0.0.5 694 1 0

udpport 694

node node1.nameserver

node node2.nameserver

compression bz2

logfile /var/log/ha-log

logfacility local0

crm respawn
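The timing directives in ha.cf depend on one another: heartbeats are sent every keepalive seconds, a warning is logged after warntime, and a node is declared dead after deadtime, with initdead giving extra slack at boot. The sketch below checks the ordering usually recommended for these values (keepalive below warntime, warntime below deadtime, initdead at least twice deadtime). It assumes plain integer values in seconds, as in the file above, and the helper name `directive` is illustrative.

```shell
#!/bin/sh
# Sketch: check the ordering of the Heartbeat timing directives.
# The sample copies the four timing lines from the ha.cf shown above.
hacf=$(mktemp)
cat > "$hacf" <<'EOF'
keepalive 2
warntime 5
deadtime 10
initdead 30
EOF

# directive FILE NAME: print the value of the first uncommented "NAME value" line.
directive() {
    awk -v name="$2" '$1 == name { print $2; exit }' "$1"
}

keepalive=$(directive "$hacf" keepalive)
warntime=$(directive "$hacf" warntime)
deadtime=$(directive "$hacf" deadtime)
initdead=$(directive "$hacf" initdead)

timing=ok
[ "$keepalive" -lt "$warntime" ] || timing="keepalive should be below warntime"
[ "$warntime" -lt "$deadtime" ] || timing="warntime should be below deadtime"
[ "$initdead" -ge $((deadtime * 2)) ] || timing="initdead should be at least twice deadtime"

echo "keepalive=$keepalive warntime=$warntime deadtime=$deadtime initdead=$initdead: $timing"
rm -f "$hacf"
```

With the values used in this guide, deadtime is five heartbeat intervals, so a few lost packets on eth1 do not by themselves trigger a failover.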

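Configure the inter-node authentication file authkeys by running the deploy script, which generates the following configuration automatically: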

auth 1

1 sha1 033949d7f2d97d0dd0e8e8c9ba20efa0

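Once one node is configured, copy the newly generated /etc/ha.d/ha.cf and /etc/ha.d/authkeys to the /etc/ha.d directory on the other node, so that both nodes share the same cluster configuration.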
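The internals of the deploy script are not shown here, so the following is an independent sketch of how an authkeys file with the same layout could be produced by hand. It writes to a scratch directory rather than /etc/ha.d so that it can be tried safely. Heartbeat expects the file to be readable by its owner only (mode 600), which is also worth re-checking after copying it to the second node.

```shell
#!/bin/sh
# Sketch: write an authkeys file with a random sha1 key and owner-only permissions.
# A scratch directory stands in for /etc/ha.d (assumption made for safe testing).
dir=$(mktemp -d)

# 16 random bytes rendered as 32 hexadecimal characters.
key=$(head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n')

umask 077
printf 'auth 1\n1 sha1 %s\n' "$key" > "$dir/authkeys"
chmod 600 "$dir/authkeys"

first_line=$(head -n 1 "$dir/authkeys")
perms=$(ls -l "$dir/authkeys" | cut -c1-10)
echo "authkeys permissions: $perms"

rm -rf "$dir"
```

Both nodes must end up with byte-identical authkeys files; a differing key makes each node discard the other's heartbeat packets.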

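- Configure the NameServer resources. Edit /usr/local/tfs2/scripts/ha/ns.xml. The resource group is "ns-group" and contains two resources, "ip-alias" and "tfs-name-server". Both are of class ocf with provider heartbeat, and their resource agent scripts are "IPaddr2" and "NameServer" respectively. For the virtual IP, set the address to 10.25.78.59 and the virtual network interface (nic) to eth0:0. For the TFS NameServer, set the installation directory to /usr/local/tfs2, the IP to localhost, the port to 10000 (it must match the port in ns.conf), and the user to root. Leave everything else at its default.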

<resources>

<group id="ns-group">

<primitive class="ocf" id="ip-alias" provider="heartbeat" type="IPaddr2">

<instance_attributes id="ip-alias-instance_attributes">

<nvpair id="ip-alias-instance_attributes-ip" name="ip" value="10.25.78.59"/>

<nvpair id="ip-alias-instance_attributes-nic" name="nic" value="eth0:0"/>

</instance_attributes>

<operations>

<op id="ip-alias-monitor-2s" interval="2s" name="monitor"/>

</operations>

<meta_attributes id="ip-alias-meta_attributes">

<nvpair id="ip-alias-meta_attributes-target-role" name="target-role" value="Started"/>

</meta_attributes>

</primitive>

<primitive class="ocf" id="tfs-name-server" provider="heartbeat" type="NameServer">

<instance_attributes id="tfs-name-server-instance_attributes">

<nvpair id="tfs-name-server-instance_attributes-basedir" name="basedir" value="/usr/local/tfs2"/>

<nvpair id="tfs-name-server-instance_attributes-nsip" name="nsip" value="localhost"/>

<nvpair id="tfs-name-server-instance_attributes-nsport" name="nsport" value="10000"/>

<nvpair id="tfs-name-server-instance_attributes-user" name="user" value="root"/>

</instance_attributes>

<operations>

<op id="tfs-name-nameserver-monitor-2s" interval="10s" name="monitor"/>

<op id="tfs-name-nameserver-start" interval="0s" name="start" timeout="30s"/>

<op id="tfs-name-nameserver-stop" interval="0s" name="stop" timeout="30s"/>

</operations>

<meta_attributes id="tfs-name-server-meta_attributes">

<nvpair id="tfs-name-server-meta_attributes-target-role" name="target-role" value="Started"/>

<nvpair id="tfs-name-server-meta_attributes-resource-stickiness" name="resource-stickiness" value="INFINITY"/>

<nvpair id="tfs-name-server-meta_attributes-resource-failure-stickiness" name="resource-failure-stickiness" value="-INFINITY"/>

</meta_attributes>

</primitive>

</group>

</resources>

(Optional) In this test the TFS working directory is /data/tfs, so the default pidfile in /usr/local/tfs2/scripts/ha/NameServer must be changed to /data/tfs/logs/nameserver.pid, because Heartbeat relies on the nameserver.pid file to monitor the TFS NameServer.

# FIXME: Attributes special meaning to the resource id

process="$OCF_RESOURCE_INSTANCE"

binfile="$OCF_RESKEY_basedir/bin/nameserver"

cmdline_options="-f $OCF_RESKEY_basedir/conf/ns.conf -d"

pidfile="$OCF_RESKEY_pidfile"

[ -z "$pidfile" ] && pidfile="/data/tfs/logs/nameserver.pid"

user="$OCF_RESKEY_user"

[ -z "$user" ] && user=admin

Alternatively (this is the recommended method), set the pidfile in /usr/local/tfs2/scripts/ha/ns.xml. The relevant part of the configuration is:

<primitive class="ocf" id="tfs-name-server" provider="heartbeat" type="NameServer">

<instance_attributes id="tfs-name-server-instance_attributes">

<nvpair id="tfs-name-server-instance_attributes-basedir" name="basedir" value="/usr/local/tfs2"/>

<nvpair id="tfs-name-server-instance_attributes-nsip" name="nsip" value="localhost"/>

<nvpair id="tfs-name-server-instance_attributes-nsport" name="nsport" value="10000"/>

<nvpair id="tfs-name-server-instance_attributes-user" name="user" value="root"/>

<nvpair id="tfs-name-server-instance_attributes-pidfile" name="pidfile" value="/data/tfs/logs/nameserver.pid"/>

</instance_attributes>

<operations>

<op id="tfs-name-nameserver-monitor-2s" interval="10s" name="monitor"/>

<op id="tfs-name-nameserver-start" interval="0s" name="start" timeout="30s"/>

<op id="tfs-name-nameserver-stop" interval="0s" name="stop" timeout="30s"/>

</operations>

<meta_attributes id="tfs-name-server-meta_attributes">

<nvpair id="tfs-name-server-meta_attributes-target-role" name="target-role" value="Started"/>

<nvpair id="tfs-name-server-meta_attributes-resource-stickiness" name="resource-stickiness" value="INFINITY"/>

<nvpair id="tfs-name-server-meta_attributes-resource-failure-stickiness" name="resource-failure-stickiness" value="-INFINITY"/>

</meta_attributes>

</primitive>
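When editing ns.xml by hand it is easy to close an nvpair with `>` instead of `/>`, which leaves the XML malformed. The following is a rough sketch of a pre-flight check, assuming each element sits on its own line as in the listings above. It is a line-based heuristic and not a real XML validator. The sample deliberately leaves one nvpair unclosed to show what the check reports.

```shell
#!/bin/sh
# Sketch: flag <nvpair ...> lines that are not self-closed with "/>".
# Assumes one element per line, as in the ns.xml listings above.
xml=$(mktemp)
cat > "$xml" <<'EOF'
<instance_attributes id="tfs-name-server-instance_attributes">
<nvpair id="tfs-name-server-instance_attributes-user" name="user" value="root"/>
<nvpair id="tfs-name-server-instance_attributes-pidfile" name="pidfile" value="/data/tfs/logs/nameserver.pid">
</instance_attributes>
EOF

# Lines containing <nvpair that do not end in "/>" (prefixed with their line number).
bad=$(grep -n '<nvpair' "$xml" | grep -v '/>[[:space:]]*$')
bad_count=$(grep -n '<nvpair' "$xml" | grep -vc '/>[[:space:]]*$')

if [ "$bad_count" -gt 0 ]; then
    echo "unclosed nvpair elements: $bad_count"
    echo "$bad"
else
    echo "all nvpair elements are self-closed"
fi
rm -f "$xml"
```

If `xmllint` happens to be installed, running it against the file is a stricter alternative to this grep-based check.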

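When the changes are done, run the resource deployment script nsdep. It copies the NameServer script to /usr/lib/ocf/resource.d/heartbeat/ and makes it executable. Repeat the same steps on the other node.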

- Start the NameServer Master and Slave. Start the Heartbeat service on each of the two machines.

service heartbeat start

Add the monitored resources to the Heartbeat instance.

crm_attribute --type crm_config --attr-name symmetric-cluster --attr-value true

crm_attribute --type crm_config --attr-name stonith-enabled --attr-value false

crm_attribute --type rsc_defaults --name resource-stickiness --update 100

cibadmin --replace --obj_type=resources --xml-file /usr/local/tfs2/scripts/ha/ns.xml

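- Check the status. Run crm status to view the state of the cluster. The cluster currently has two nodes, node1.nameserver and node2.nameserver. The DC is node1.nameserver (10.25.78.147), which is the TFS NameServer Master, and node2.nameserver (10.25.78.58) is the TFS NameServer Slave. There is one resource group, which contains the VIP, covering failure of the whole machine, and the TFS NameServer resource, covering failure of the service.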

[iyunv@node1 ha]# crm status

============

Last updated: Wed Oct 1 11:14:33 2014

Stack: Heartbeat

Current DC: node1.nameserver (37feea9a-79d6-440c-a79e-5fa4e9fa0fc1) - partition with quorum

Version: 1.0.12-unknown

3 Nodes configured, 2 expected votes

1 Resources configured.

============

Online: [ node1.nameserver node2.nameserver ]

OFFLINE: [ localhost.localdomain ]

Resource Group: ns-group

ip-alias (ocf::heartbeat:IPaddr2): Started node1.nameserver

tfs-name-server (ocf::heartbeat:NameServer): Started node1.nameserver
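For scripted monitoring it can be handy to extract the active node from this output. The sketch below parses a saved excerpt of the status text shown above. It assumes the line layout printed by this Pacemaker version (resource name, agent, "Started", node), and the function name `node_of` is illustrative. On a live node the text would come from `status_text=$(crm status)` instead.

```shell
#!/bin/sh
# Sketch: find which node currently runs a resource, from saved `crm status` text.
status_text='Online: [ node1.nameserver node2.nameserver ]
Resource Group: ns-group
ip-alias (ocf::heartbeat:IPaddr2): Started node1.nameserver
tfs-name-server (ocf::heartbeat:NameServer): Started node1.nameserver'

# node_of RESOURCE: print the node named after "Started" on that resource's line.
node_of() {
    printf '%s\n' "$status_text" |
        awk -v rsc="$1" '$1 == rsc && $3 == "Started" { print $4 }'
}

vip_node=$(node_of ip-alias)
ns_node=$(node_of tfs-name-server)

# Both members of ns-group should be running on the same node.
if [ -n "$vip_node" ] && [ "$vip_node" = "$ns_node" ]; then
    colocated=yes
else
    colocated=no
fi
echo "VIP on: $vip_node, NameServer on: $ns_node, colocated: $colocated"
```

Because both resources belong to one group, Pacemaker keeps them on the same node, so a "no" here would point to a resource that failed to start.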

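- Next, inspect the IP network information on node1.nameserver and node2.nameserver. The VIP 10.25.78.59 appears on the eth0:0 alias of node1.nameserver only. node1.nameserver: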

[iyunv@node1 ha]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:0C:29:69:D8:3B

inet addr:10.25.78.147 Bcast:10.25.78.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fe69:d83b/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:12168332 errors:0 dropped:0 overruns:0 frame:0

TX packets:11669695 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:1008119663 (961.4 MiB) TX bytes:861347436 (821.4 MiB)

eth0:0 Link encap:Ethernet HWaddr 00:0C:29:69:D8:3B

inet addr:10.25.78.59 Bcast:10.25.78.187 Mask:255.255.255.255

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

eth1 Link encap:Ethernet HWaddr 00:50:56:8E:02:C7

inet addr:192.168.1.1 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::250:56ff:fe8e:2c7/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:313621 errors:0 dropped:0 overruns:0 frame:0

TX packets:106593 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:47507802 (45.3 MiB) TX bytes:28948271 (27.6 MiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:677606 errors:0 dropped:0 overruns:0 frame:0

TX packets:677606 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:759646489 (724.4 MiB) TX bytes:759646489 (724.4 MiB)

node2.nameserver:

[iyunv@node2 ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:50:56:8E:5A:93

inet addr:10.25.78.58 Bcast:10.25.78.255 Mask:255.255.255.0

inet6 addr: fe80::250:56ff:fe8e:5a93/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:7878136 errors:0 dropped:0 overruns:0 frame:0

TX packets:7757507 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:628855932 (599.7 MiB) TX bytes:433956252 (413.8 MiB)

eth1 Link encap:Ethernet HWaddr 00:50:56:8E:40:D2

inet addr:192.168.1.2 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::250:56ff:fe8e:40d2/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:444993 errors:0 dropped:0 overruns:0 frame:0

TX packets:93195 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:54691894 (52.1 MiB) TX bytes:25104031 (23.9 MiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:536045 errors:0 dropped:0 overruns:0 frame:0

TX packets:536045 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:27168836 (25.9 MiB) TX bytes:27168836 (25.9 MiB)
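The same check can be made from the network side by looking for the VIP in the interface listing. The following sketch works on a shortened copy of the node1.nameserver output above and assumes the older net-tools `ifconfig` format with `inet addr:` fields. The function name `iface_of_ip` is illustrative.

```shell
#!/bin/sh
# Sketch: report which interface carries a given address, from saved ifconfig text.
ifconfig_text='eth0 Link encap:Ethernet HWaddr 00:0C:29:69:D8:3B
inet addr:10.25.78.147 Bcast:10.25.78.255 Mask:255.255.255.0
eth0:0 Link encap:Ethernet HWaddr 00:0C:29:69:D8:3B
inet addr:10.25.78.59 Bcast:10.25.78.187 Mask:255.255.255.255
eth1 Link encap:Ethernet HWaddr 00:50:56:8E:02:C7
inet addr:192.168.1.1 Bcast:192.168.1.255 Mask:255.255.255.0'

# iface_of_ip IP: print the interface whose "inet addr:" field equals IP.
# The trailing space in the search string avoids matching a longer address.
iface_of_ip() {
    printf '%s\n' "$ifconfig_text" | awk -v ip="$1" '
        /Link encap:/ { iface = $1 }
        index($0, "inet addr:" ip " ") { print iface; exit }'
}

vip_iface=$(iface_of_ip 10.25.78.59)
hb_iface=$(iface_of_ip 192.168.1.1)
echo "VIP interface: ${vip_iface:-none}, heartbeat interface: ${hb_iface:-none}"
```

Running the same lookup against the node2.nameserver output returns nothing for the VIP, which is the expected state while node1.nameserver is the Master.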

This completes the basic high-availability configuration of the TFS NameServer.
