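Chapter 1 Deploying Ceph

1.1 Brief Introduction

A Ceph deployment mainly consists of the following types of nodes:

- Ceph OSDs: A Ceph OSD daemon stores data, handles data replication, recovery, backfilling, and rebalancing, and provides monitoring information to the Ceph Monitors by checking the heartbeats of other OSD daemons. When a Ceph Storage Cluster keeps two copies of the data, at least two Ceph OSD daemons must be in the active+clean state. (Ceph releases since Firefly, including the Jewel release installed here, keep three copies by default; section 1.5.2.1 changes this to 2.)

- Monitors: A Ceph Monitor maintains the maps of the cluster state, including the monitor map, the OSD map, the Placement Group (PG) map, and the CRUSH map. Ceph keeps a history (called an "epoch") of every state change in the Ceph Monitors, Ceph OSD Daemons, and PGs.

- MDSs: A Ceph Metadata Server (MDS) stores metadata on behalf of the Ceph Filesystem (Ceph Block Devices and Ceph Object Storage do not use MDS). Ceph Metadata Servers let users run basic POSIX file system commands such as ls and find.

1.2 Cluster Planning

Before creating the cluster, plan it as follows: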

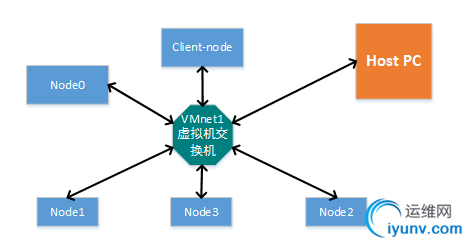

1.2.1 Network Topology

The Ceph cluster is deployed on VMware virtual machines.

1.2.2 Node IP Assignment

| Node IP | Hostname | Description |
| --- | --- | --- |
| 192.168.92.100 | node0 | admin, osd |
| 192.168.92.101 | node1 | osd, mon |
| 192.168.92.102 | node2 | osd, mon |
| 192.168.92.103 | node3 | osd, mon |
| 192.168.92.109 | client-node | Client node; mainly used to mount the storage provided by the Ceph cluster for testing |

1.2.3 OSD Planning

node2: /var/local/osd0

node3: /var/local/osd0
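
With directory-backed OSDs, each directory typically has to exist on its node before ceph-deploy osd prepare is run against it. The sketch below only prints the commands that would create the planned directories, so they can be reviewed before anything is executed. The helper name osd_mkdir_commands is hypothetical, and using ssh with sudo mkdir -p is an assumption that relies on the passwordless setup described in section 1.3.

```shell
# Print, without running them, the commands that would create each planned
# OSD directory. Arguments use the same host:path form as ceph-deploy.
# osd_mkdir_commands is a hypothetical helper name, not a Ceph tool.
osd_mkdir_commands() {
    for spec in "$@"; do
        host="${spec%%:*}"   # text before the first colon
        dir="${spec#*:}"     # text after the first colon
        printf 'ssh %s sudo mkdir -p %s\n' "$host" "$dir"
    done
}

osd_mkdir_commands node2:/var/local/osd0 node3:/var/local/osd0
```

Printing the commands instead of running them keeps the sketch safe to try on any machine; pipe the output to sh once the hosts are reachable.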

1.3 Host Preparation

1.3.1 Editing hosts on the Admin Node

Edit /etc/hosts:

[ceph@admin-node my-cluster]$ sudo cat /etc/hosts

[sudo] password for ceph:

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.92.100 node0

192.168.92.101 node1

192.168.92.102 node2

192.168.92.103 node3

[ceph@admin-node my-cluster]$
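
The node entries can also be generated from the IP plan in section 1.2.2 rather than typed by hand. This is a minimal sketch that assumes the node0 to node3 naming and the 192.168.92.100 to 192.168.92.103 range used in this chapter; hosts_entries is a hypothetical helper name.

```shell
# Print /etc/hosts entries for the cluster nodes planned in section 1.2.2.
# hosts_entries is a hypothetical helper name, not part of Ceph.
hosts_entries() {
    base="192.168.92"
    for i in 0 1 2 3; do
        # node0..node3 map to 192.168.92.100..192.168.92.103
        printf '%s.%d node%d\n' "$base" "$((100 + i))" "$i"
    done
}

# Only prints the entries; to apply them, pipe into: sudo tee -a /etc/hosts
hosts_entries
```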

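1.3.2 Preparing root Privileges

Create a user named ceph on each of the five hosts above (as root, or as a user with root privileges):

Create the user:

sudo adduser -d /home/ceph -m ceph

Set the password:

sudo passwd ceph

Grant the account sudo privileges:

echo "ceph ALL = (root) NOPASSWD:ALL" | sudo tee /etc/sudoers.d/ceph

sudo chmod 0440 /etc/sudoers.d/ceph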
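
The two commands above can be wrapped in a small function and tried on a scratch file before touching /etc/sudoers.d. This is a sketch under that assumption; write_sudoers_rule is a hypothetical helper name, and the scratch file created by mktemp stands in for the real drop-in path.

```shell
# Write the passwordless-sudo rule for a user into a sudoers drop-in file
# and restrict its mode to 0440, as sudo expects for files in sudoers.d.
# write_sudoers_rule is a hypothetical helper; the target path is a
# parameter so the function can be tried on a scratch file first.
write_sudoers_rule() {
    user="$1"
    target="$2"
    printf '%s ALL = (root) NOPASSWD:ALL\n' "$user" > "$target"
    chmod 0440 "$target"
}

# Try it on a scratch file. On a real node, run it as root with
# /etc/sudoers.d/ceph as the target, then check it with: visudo -cf <file>
scratch="$(mktemp)"
write_sudoers_rule ceph "$scratch"
cat "$scratch"
```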

1.3.3 Preparing sudo Privileges

Run visudo to edit the sudoers file:

Change the line Defaults requiretty to Defaults:ceph !requiretty

Without this change, ceph-deploy fails when it runs commands over ssh.

If the ceph-deploy new <node-hostname> step still fails:

[ceph@admin-node my-cluster]$ ceph-deploy new node3

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.28): /usr/bin/ceph-deploy new node3

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username :None

[ceph_deploy.cli][INFO ] func : <function new at 0xee0b18>

[ceph_deploy.cli][INFO ] verbose :False

[ceph_deploy.cli][INFO ] overwrite_conf :False

[ceph_deploy.cli][INFO ] quiet :False

[ceph_deploy.cli][INFO ] cd_conf :<ceph_deploy.conf.cephdeploy.Conf instance at 0xef9a28>

[ceph_deploy.cli][INFO ] cluster :ceph

[ceph_deploy.cli][INFO ] ssh_copykey :True

[ceph_deploy.cli][INFO ] mon : ['node3']

[ceph_deploy.cli][INFO ] public_network :None

[ceph_deploy.cli][INFO ] ceph_conf :None

[ceph_deploy.cli][INFO ] cluster_network :None

[ceph_deploy.cli][INFO ] default_release :False

[ceph_deploy.cli][INFO ] fsid :None

[ceph_deploy.new][DEBUG ] Creating new cluster named ceph

[ceph_deploy.new][INFO ] making sure passwordless SSH succeeds

[node3][DEBUG ] connected to host: admin-node

[node3][INFO ] Running command: ssh -CT -o BatchMode=yes node3

[node3][DEBUG ] connection detected need for sudo

[node3][DEBUG ] connected to host: node3

[ceph_deploy][ERROR ] RuntimeError: remote connection got closed, ensure ``requiretty`` is disabled for node3

[ceph@admin-node my-cluster]$

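In that case, configure passwordless sudo as follows.

Edit the file with sudo visudo so that it contains:

[ceph@node1 ~]$ sudo grep "ceph" /etc/sudoers

Defaults:ceph !requiretty

ceph ALL=(ALL) NOPASSWD: ALL

[ceph@node1 ~]$

In addition:

1. Comment out Defaults requiretty

Change Defaults requiretty to #Defaults requiretty, which means no controlling terminal is required.

Otherwise this error appears: sudo: sorry, you must have a tty to run sudo

2. Add the line Defaults visiblepw

Otherwise this error appears: sudo: no tty present and no askpass program specified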
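
Whether the global requiretty setting is still active can be checked with a single grep. The sketch below demonstrates the check on two scratch files; has_requiretty is a hypothetical helper name, and the file contents are illustrative rather than copied from a real /etc/sudoers.

```shell
# Return success if a sudoers-style file still has an active (uncommented)
# global "Defaults requiretty" line. has_requiretty is a hypothetical helper.
has_requiretty() {
    grep -Eq '^[[:space:]]*Defaults[[:space:]]+requiretty' "$1"
}

# Demonstrate on scratch files; on a node, point it at a copy of /etc/sudoers.
before="$(mktemp)"
after="$(mktemp)"
printf 'Defaults    requiretty\n' > "$before"
printf '#Defaults    requiretty\nDefaults:ceph !requiretty\n' > "$after"

if has_requiretty "$before"; then echo "before: requiretty still active"; fi
if ! has_requiretty "$after"; then echo "after: requiretty disabled"; fi
```

The pattern deliberately ignores the per-user form Defaults:ceph !requiretty, because that line disables the requirement rather than enabling it.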

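1.3.4 Passwordless Remote Access from the Admin Node

Configure passwordless SSH access, with root privileges via sudo, from admin-node to the other nodes.

Step 1: On the admin-node host, run:

ssh-keygen

Note: for simplicity, just press Enter at every prompt.

Step 2: Copy the key from step 1 to the other nodes: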

ssh-copy-id ceph@node0

ssh-copy-id ceph@node1

ssh-copy-id ceph@node2

ssh-copy-id ceph@node3

Also edit ~/.ssh/config and add the following content:

[ceph@admin-node my-cluster]$ cat ~/.ssh/config

Host node1

Hostname node1

User ceph

Host node2

Hostname node2

User ceph

Host node3

Hostname node3

User ceph

Host client-node

Hostname client-node

User ceph

[ceph@admin-node my-cluster]$
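
Because every stanza follows the same pattern, the file content can be generated with a short loop. This is a sketch only: ssh_config_stanzas is a hypothetical helper name, and the four-space indentation is a readability choice that ssh does not require.

```shell
# Print one ~/.ssh/config stanza per host, each logging in as the ceph user.
# ssh_config_stanzas is a hypothetical helper name.
ssh_config_stanzas() {
    for host in "$@"; do
        printf 'Host %s\n    Hostname %s\n    User ceph\n' "$host" "$host"
    done
}

# Only prints the stanzas for the hosts used in this chapter; redirect the
# output into ~/.ssh/config to apply it.
ssh_config_stanzas node1 node2 node3 client-node
```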

1.3.4.1 Fixing "Bad owner or permissions on .ssh/config"

Error message:

Bad owner or permissions on /home/ceph/.ssh/config

fatal: The remote end hung up unexpectedly

Solution:

$ sudo chmod 600 ~/.ssh/config

1.4 Installing the ceph-deploy Tool on the Admin Node

Step 1: Add a yum repository file

sudo vim /etc/yum.repos.d/ceph.repo

Add the following content:

[ceph-noarch]

name=Ceph noarch packages

baseurl=http://mirrors.163.com/ceph/rpm-jewel/el7/noarch

enabled=1

gpgcheck=1

type=rpm-md

gpgkey=http://mirrors.163.com/ceph/keys/release.asc

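Step 2: Update the package sources and install ceph-deploy and the time synchronization software

sudo yum update && sudo yum install ceph-deploy

sudo yum install ntp ntpdate ntp-doc

Step 3: Stop the firewall and set SELinux to permissive mode on all nodes (run on every node), plus a few other steps

sudo systemctl stop firewalld.service

sudo setenforce 0

sudo yum install yum-plugin-priorities

Summary: after the steps above, all prerequisites are in place, and the actual Ceph deployment can begin.

1.5 Creating the Ceph Cluster

As the ceph user created earlier, create a directory on the admin-node: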

mkdir my-cluster

cd my-cluster

1.5.1 How to Clear Ceph Data

First clear any existing Ceph data. Skip this step for a fresh installation; when redeploying, run the following commands:

ceph-deploy purgedata {ceph-node} [{ceph-node}]

ceph-deploy forgetkeys

For example:

[ceph@node0 my-cluster]$ ceph-deploy purgedata admin-node node1 node2 node3

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.28): /usr/bin/ceph-deploy purgedata admin-node node1 node2 node3

…

[node3][INFO ] Running command: sudo rm -rf --one-file-system -- /var/lib/ceph

[node3][INFO ] Running command: sudo rm -rf --one-file-system -- /etc/ceph/

[ceph@node0 cluster]$ ceph-deploy forgetkeys

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.28): /usr/bin/ceph-deploy forgetkeys

…

[ceph_deploy.cli][INFO ] default_release : False

[ceph@admin-node my-cluster]$

To remove the Ceph packages as well, use the command:

ceph-deploy purge {ceph-node} [{ceph-node}]

For example:

[iyunv@ceph-deploy ceph]$ ceph-deploy purge admin-node node1 node2 node3

1.5.2 Creating the Cluster and Setting the Monitor Nodes

On the admin node, use ceph-deploy to create the cluster. The arguments after new are the hostnames of the monitor nodes; if there are several monitors, separate their hostnames with spaces. Multiple mon nodes provide redundancy for one another.

[ceph@node0 cluster]$ sudo vim /etc/ssh/sshd_config

[ceph@node0 cluster]$ sudo visudo

[ceph@node0 cluster]$ ceph-deploy new node1 node2 node3

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.34): /usr/bin/ceph-deploy new node1 node2 node3

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username :None

[ceph_deploy.cli][INFO ] func : <function new at 0x29f2b18>

[ceph_deploy.cli][INFO ] verbose :False

[ceph_deploy.cli][INFO ] overwrite_conf :False

[ceph_deploy.cli][INFO ] quiet :False

[ceph_deploy.cli][INFO ] cd_conf :<ceph_deploy.conf.cephdeploy.Conf instance at 0x2a15a70>

[ceph_deploy.cli][INFO ] cluster :ceph

[ceph_deploy.cli][INFO ] ssh_copykey :True

[ceph_deploy.cli][INFO ] mon : ['node1', 'node2', 'node3']

[ceph_deploy.cli][INFO ] public_network :None

[ceph_deploy.cli][INFO ] ceph_conf :None

[ceph_deploy.cli][INFO ] cluster_network :None

[ceph_deploy.cli][INFO ] default_release :False

[ceph_deploy.cli][INFO ] fsid :None

[ceph_deploy.new][DEBUG ] Creating new cluster named ceph

[ceph_deploy.new][INFO ] making sure passwordless SSH succeeds

[node1][DEBUG ] connected to host: node0

[node1][INFO ] Running command: ssh -CT -o BatchMode=yes node1

[node1][DEBUG ] connection detected need for sudo

[node1][DEBUG ] connected to host: node1

[node1][DEBUG ] detect platform information from remote host

[node1][DEBUG ] detect machine type

[node1][DEBUG ] find the location of an executable

[node1][INFO ] Running command: sudo /usr/sbin/ip link show

[node1][INFO ] Running command: sudo /usr/sbin/ip addr show

[node1][DEBUG ] IP addresses found: ['192.168.92.101', '192.168.1.102', '192.168.122.1']

[ceph_deploy.new][DEBUG ] Resolving host node1

[ceph_deploy.new][DEBUG ] Monitor node1 at 192.168.92.101

[ceph_deploy.new][INFO ] making sure passwordless SSH succeeds

[node2][DEBUG ] connected to host: node0

[node2][INFO ] Running command: ssh -CT -o BatchMode=yes node2

[node2][DEBUG ] connection detected need for sudo

[node2][DEBUG ] connected to host: node2

[node2][DEBUG ] detect platform information from remote host

[node2][DEBUG ] detect machine type

[node2][DEBUG ] find the location of an executable

[node2][INFO ] Running command: sudo /usr/sbin/ip link show

[node2][INFO ] Running command: sudo /usr/sbin/ip addr show

[node2][DEBUG ] IP addresses found: ['192.168.1.103', '192.168.122.1', '192.168.92.102']

[ceph_deploy.new][DEBUG ] Resolving host node2

[ceph_deploy.new][DEBUG ] Monitor node2 at 192.168.92.102

[ceph_deploy.new][INFO ] making sure passwordless SSH succeeds

[node3][DEBUG ] connected to host: node0

[node3][INFO ] Running command: ssh -CT -o BatchMode=yes node3

[node3][DEBUG ] connection detected need for sudo

[node3][DEBUG ] connected to host: node3

[node3][DEBUG ] detect platform information from remote host

[node3][DEBUG ] detect machine type

[node3][DEBUG ] find the location of an executable

[node3][INFO ] Running command: sudo /usr/sbin/ip link show

[node3][INFO ] Running command: sudo /usr/sbin/ip addr show

[node3][DEBUG ] IP addresses found: ['192.168.122.1', '192.168.1.104', '192.168.92.103']

[ceph_deploy.new][DEBUG ] Resolving host node3

[ceph_deploy.new][DEBUG ] Monitor node3 at 192.168.92.103

[ceph_deploy.new][DEBUG ] Monitor initial members are ['node1', 'node2', 'node3']

[ceph_deploy.new][DEBUG ] Monitor addrs are ['192.168.92.101', '192.168.92.102', '192.168.92.103']

[ceph_deploy.new][DEBUG ] Creating a random mon key...

[ceph_deploy.new][DEBUG ] Writing monitor keyring to ceph.mon.keyring...

[ceph_deploy.new][DEBUG ] Writing initial config to ceph.conf...

[ceph@node0 cluster]$

View the generated files:

[ceph@admin-node my-cluster]$ ls

ceph.conf ceph.log ceph.mon.keyring

View the Ceph configuration file; node1, node2, and node3 are now all listed as monitor nodes:

[ceph@admin-node my-cluster]$ cat ceph.conf

[global]

fsid = 3c9892d0-398b-4808-aa20-4dc622356bd0

mon_initial_members = node1, node2, node3

mon_host = 192.168.92.111,192.168.92.112,192.168.92.113

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

filestore_xattr_use_omap = true

[ceph@admin-node my-cluster]$

1.5.2.1 Changing the Replica Count

Change the default replica count to 2: in ceph.conf, set osd_pool_default_size to 2. If the line does not exist, add it.

[ceph@admin-node my-cluster]$ grep "osd_pool_default_size" ./ceph.conf

osd_pool_default_size = 2

[ceph@admin-node my-cluster]$
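
The "set it, or add it if missing" rule can be scripted so that repeated runs do not create duplicate lines. The sketch below works on a scratch file rather than the real ceph.conf. set_conf_key is a hypothetical helper name, and it assumes the key is spelled with underscores and that the file has only a [global] section, as the generated file above does.

```shell
# Set "key = value" in a ceph.conf-style file: replace the line if the key
# is already present, otherwise append it. set_conf_key is a hypothetical
# helper. Assumption: the file has a single [global] section, so appending
# at the end still places the key inside [global].
set_conf_key() {
    file="$1"; key="$2"; value="$3"
    if grep -q "^${key}[[:space:]]*=" "$file"; then
        # Rewrite through a temporary file instead of relying on sed -i.
        sed "s|^${key}[[:space:]]*=.*|${key} = ${value}|" "$file" > "$file.tmp" \
            && mv "$file.tmp" "$file"
    else
        printf '%s = %s\n' "$key" "$value" >> "$file"
    fi
}

# Demonstrate on a scratch copy, not on the real ceph.conf.
conf="$(mktemp)"
printf '[global]\nauth_client_required = cephx\n' > "$conf"
set_conf_key "$conf" osd_pool_default_size 2
cat "$conf"
```

Calling the function a second time with a different value rewrites the existing line in place, which is the behaviour the grep check above is meant to confirm.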

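1.5.2.2 Handling Multiple Networks

A node may have more than one IP address, that is, addresses on networks other than the one used by the Ceph cluster.

For example:

eno16777736: 192.168.175.100

eno50332184: 192.168.92.110

virbr0: 192.168.122.1

In that case, add the public_network parameter to the [global] section of ceph.conf:

public_network = {ip-address}/{netmask}

For example:

public_network = 192.168.92.0/24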
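
The value after the slash is a netmask length in CIDR notation, not a port number. As a minimal sketch, the network for a node address can be derived as follows; net24_of is a hypothetical helper name, and it assumes a 255.255.255.0 netmask (a /24 network), which fits the 192.168.92.x addresses planned in section 1.2.2.

```shell
# Derive the /24 network, in CIDR form, that contains an IPv4 address.
# net24_of is a hypothetical helper and assumes a 255.255.255.0 netmask.
net24_of() {
    # Strip the last octet and replace it with 0/24.
    printf '%s.0/24\n' "${1%.*}"
}

printf 'public_network = %s\n' "$(net24_of 192.168.92.101)"
```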

1.5.3 Installing Ceph

From the admin-node, use ceph-deploy to install Ceph on each node:

ceph-deploy install {ceph-node} [{ceph-node} ...]

For example:

[ceph@node0 cluster]$ ceph-deploy install node0 node1 node2 node3

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.34): /usr/bin/ceph-deploy install node0 node1 node2 node3

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] verbose :False

[ceph_deploy.cli][INFO ] testing :None

[ceph_deploy.cli][INFO ] cd_conf :<ceph_deploy.conf.cephdeploy.Conf instance at 0x2ae0560>

[ceph_deploy.cli][INFO ] cluster :ceph

[ceph_deploy.cli][INFO ] dev_commit :None

[ceph_deploy.cli][INFO ] install_mds :False

[ceph_deploy.cli][INFO ] stable : None

[ceph_deploy.cli][INFO ] default_release :False

[ceph_deploy.cli][INFO ] username :None

[ceph_deploy.cli][INFO ] adjust_repos :True

[ceph_deploy.cli][INFO ] func : <function install at 0x2a53668>

[ceph_deploy.cli][INFO ] install_all :False

[ceph_deploy.cli][INFO ] repo :False

[ceph_deploy.cli][INFO ] host :['node0', 'node1', 'node2', 'node3']

[ceph_deploy.cli][INFO ] install_rgw :False

[ceph_deploy.cli][INFO ] install_tests :False

[ceph_deploy.cli][INFO ] repo_url :None

[ceph_deploy.cli][INFO ] ceph_conf : None

[ceph_deploy.cli][INFO ] install_osd :False

[ceph_deploy.cli][INFO ] version_kind :stable

[ceph_deploy.cli][INFO ] install_common :False

[ceph_deploy.cli][INFO ] overwrite_conf : False

[ceph_deploy.cli][INFO ] quiet :False

[ceph_deploy.cli][INFO ] dev :master

[ceph_deploy.cli][INFO ] local_mirror :None

[ceph_deploy.cli][INFO ] release : None

[ceph_deploy.cli][INFO ] install_mon :False

[ceph_deploy.cli][INFO ] gpg_url :None

[ceph_deploy.install][DEBUG ] Installing stable version jewel on cluster ceph hosts node0 node1 node2 node3

[ceph_deploy.install][DEBUG ] Detecting platform for host node0 ...

[node0][DEBUG ] connection detected need for sudo

[node0][DEBUG ] connected to host: node0

[node0][DEBUG ] detect platform information from remote host

[node0][DEBUG ] detect machine type

[ceph_deploy.install][INFO ] Distro info: CentOS Linux 7.2.1511 Core

[node0][INFO ] installing Ceph on node0

[node0][INFO ] Running command: sudo yum clean all

[node0][DEBUG ] Loaded plugins: fastestmirror, langpacks, priorities

[node0][DEBUG ] Cleaning repos: Ceph Ceph-noarch base ceph-source epel extras updates

[node0][DEBUG ] Cleaning up everything

[node0][DEBUG ] Cleaning up list of fastest mirrors

[node0][INFO ] Running command: sudo yum -y install epel-release

[node0][DEBUG ] Loaded plugins: fastestmirror, langpacks, priorities

[node0][DEBUG ] Determining fastest mirrors

[node0][DEBUG ] * epel: mirror01.idc.hinet.net

[node0][DEBUG ] 25 packages excluded due to repository priority protections

[node0][DEBUG ] Package epel-release-7-7.noarch already installed and latest version

[node0][DEBUG ] Nothing to do

[node0][INFO ] Running command: sudo yum -y install yum-plugin-priorities

[node0][DEBUG ] Loaded plugins: fastestmirror, langpacks, priorities

[node0][DEBUG ] Loading mirror speeds from cached hostfile

[node0][DEBUG ] * epel: mirror01.idc.hinet.net

[node0][DEBUG ] 25 packages excluded due to repository priority protections

[node0][DEBUG ] Package yum-plugin-priorities-1.1.31-34.el7.noarch already installed and latest version

[node0][DEBUG ] Nothing to do

[node0][DEBUG ] Configure Yum priorities to include obsoletes

[node0][WARNIN] check_obsoletes has been enabled for Yum priorities plugin

[node0][INFO ] Running command: sudo rpm --import https://download.ceph.com/keys/release.asc

[node0][INFO ] Running command: sudo rpm -Uvh --replacepkgs https://download.ceph.com/rpm-jewel/el7/noarch/ceph-release-1-0.el7.noarch.rpm

[node0][DEBUG ] Retrieving https://download.ceph.com/rpm-jewel/el7/noarch/ceph-release-1-0.el7.noarch.rpm

[node0][DEBUG ] Preparing... ########################################

[node0][DEBUG ] Updating / installing...

[node0][DEBUG ] ceph-release-1-1.el7 ########################################

[node0][WARNIN] ensuring that /etc/yum.repos.d/ceph.repo contains a high priority

[node0][WARNIN] altered ceph.repo priorities to contain: priority=1

[node0][INFO ] Running command: sudo yum -y install ceph ceph-radosgw

[node0][DEBUG ] Loaded plugins: fastestmirror, langpacks, priorities

[node0][DEBUG ] Loading mirror speeds from cached hostfile

[node0][DEBUG ] * epel: mirror01.idc.hinet.net

[node0][DEBUG ] 25 packages excluded due to repository priority protections

[node0][DEBUG ] Package 1:ceph-10.2.2-0.el7.x86_64 already installed and latest version

[node0][DEBUG ] Package 1:ceph-radosgw-10.2.2-0.el7.x86_64 already installed and latest version

[node0][DEBUG ] Nothing to do

[node0][INFO ] Running command: sudo ceph --version

[node0][DEBUG ] ceph version 10.2.2 (45107e21c568dd033c2f0a3107dec8f0b0e58374)

….

1.5.3.1 Problem: No section: 'ceph'

Problem log:

[ceph@node0 cluster]$ ceph-deploy install node0 node1 node2 node3

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

…

[node1][WARNIN] ensuring that /etc/yum.repos.d/ceph.repo contains a high priority

[ceph_deploy][ERROR ] RuntimeError: NoSectionError: No section: 'ceph'

[ceph@node0 cluster]$

Solution: remove the ceph-release package (on the node that reported the error):

sudo yum remove ceph-release

Then run the install again:

[ceph@node0 cluster]$ ceph-deploy install node0 node1 node2 node3

Another cause is that the Ceph installation timed out on the failing node. In that case, run the installation separately on that node:

sudo yum -y install ceph ceph-radosgw

1.5.4 Initializing the Monitor Nodes

Initialize the monitor nodes and gather the keyrings:

[ceph@node0 cluster]$ ceph-deploy mon create-initial

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.34): /usr/bin/ceph-deploy mon create-initial

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username :None

[ceph_deploy.cli][INFO ] verbose : False

[ceph_deploy.cli][INFO ] overwrite_conf :False

[ceph_deploy.cli][INFO ] subcommand :create-initial

[ceph_deploy.cli][INFO ] quiet :False

[ceph_deploy.cli][INFO ] cd_conf :<ceph_deploy.conf.cephdeploy.Conf instance at 0x7fbe46804cb0>

[ceph_deploy.cli][INFO ] cluster :ceph

[ceph_deploy.cli][INFO ] func :<function mon at 0x7fbe467f6aa0>

[ceph_deploy.cli][INFO ] ceph_conf :None

[ceph_deploy.cli][INFO ] default_release :False

[ceph_deploy.cli][INFO ] keyrings :None

[ceph_deploy.mon][DEBUG ] Deploying mon, cluster ceph hosts node1 node2 node3

[ceph_deploy.mon][DEBUG ] detecting platform for host node1 ...

[node1][DEBUG ] connection detected need for sudo

[node1][DEBUG ] connected to host: node1

[node1][DEBUG ] detect platform information from remote host

[node1][DEBUG ] detect machine type

[node1][DEBUG ] find the location of an executable

[ceph_deploy.mon][INFO ] distro info: CentOS Linux 7.2.1511 Core

[node1][DEBUG ] determining if provided host has same hostname in remote

[node1][DEBUG ] get remote short hostname

[node1][DEBUG ] deploying mon to node1

[node1][DEBUG ] get remote short hostname

[node1][DEBUG ] remote hostname: node1

[node1][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[node1][DEBUG ] create the mon path if it does not exist

[node1][DEBUG ] checking for done path: /var/lib/ceph/mon/ceph-node1/done

[node1][DEBUG ] done path does not exist: /var/lib/ceph/mon/ceph-node1/done

[node1][INFO ] creating keyring file: /var/lib/ceph/tmp/ceph-node1.mon.keyring

[node1][DEBUG ] create the monitor keyring file

[node1][INFO ] Running command: sudo ceph-mon --cluster ceph --mkfs -i node1 --keyring /var/lib/ceph/tmp/ceph-node1.mon.keyring --setuser 1001 --setgroup 1001

[node1][DEBUG ] ceph-mon: mon.noname-a 192.168.92.101:6789/0 is local, renaming to mon.node1

[node1][DEBUG ] ceph-mon: set fsid to 4f8f6c46-9f67-4475-9cb5-52cafecb3e4c

[node1][DEBUG ] ceph-mon: created monfs at /var/lib/ceph/mon/ceph-node1 for mon.node1

[node1][INFO ] unlinking keyring file /var/lib/ceph/tmp/ceph-node1.mon.keyring

[node1][DEBUG ] create a done file to avoid re-doing the mon deployment

[node1][DEBUG ] create the init path if it does not exist

[node1][INFO ] Running command: sudo systemctl enable ceph.target

[node1][INFO ] Running command: sudo systemctl enable ceph-mon@node1

[node1][WARNIN] Created symlink from /etc/systemd/system/ceph-mon.target.wants/ceph-mon@node1.service to /usr/lib/systemd/system/ceph-mon@.service.

[node1][INFO ] Running command: sudo systemctl start ceph-mon@node1

[node1][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node1.asok mon_status

[node1][DEBUG ] ********************************************************************************

[node1][DEBUG ] status for monitor: mon.node1

[node1][DEBUG ] {

[node1][DEBUG ] "election_epoch": 0,

[node1][DEBUG ] "extra_probe_peers": [

[node1][DEBUG ] "192.168.92.102:6789/0",

[node1][DEBUG ] "192.168.92.103:6789/0"

[node1][DEBUG ] ],

[node1][DEBUG ] "monmap": {

[node1][DEBUG ] "created": "2016-06-24 14:43:29.944474",

[node1][DEBUG ] "epoch": 0,

[node1][DEBUG ] "fsid": "4f8f6c46-9f67-4475-9cb5-52cafecb3e4c",

[node1][DEBUG ] "modified": "2016-06-24 14:43:29.944474",

[node1][DEBUG ] "mons": [

[node1][DEBUG ] {

[node1][DEBUG ] "addr":"192.168.92.101:6789/0",

[node1][DEBUG ] "name": "node1",

[node1][DEBUG ] "rank": 0

[node1][DEBUG ] },

[node1][DEBUG ] {

[node1][DEBUG ] "addr":"0.0.0.0:0/1",

[node1][DEBUG ] "name": "node2",

[node1][DEBUG ] "rank": 1

[node1][DEBUG ] },

[node1][DEBUG ] {

[node1][DEBUG ] "addr":"0.0.0.0:0/2",

[node1][DEBUG ] "name": "node3",

[node1][DEBUG ] "rank": 2

[node1][DEBUG ] }

[node1][DEBUG ] ]

[node1][DEBUG ] },

[node1][DEBUG ] "name": "node1",

[node1][DEBUG ] "outside_quorum": [

[node1][DEBUG ] "node1"

[node1][DEBUG ] ],

[node1][DEBUG ] "quorum": [],

[node1][DEBUG ] "rank": 0,

[node1][DEBUG ] "state": "probing",

[node1][DEBUG ] "sync_provider": []

[node1][DEBUG ] }

[node1][DEBUG ] ********************************************************************************

[node1][INFO ] monitor: mon.node1 is running

[node1][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node1.asok mon_status

[ceph_deploy.mon][DEBUG ] detecting platform for host node2 ...

[node2][DEBUG ] connection detected need for sudo

[node2][DEBUG ] connected to host: node2

[node2][DEBUG ] detect platform information from remote host

[node2][DEBUG ] detect machine type

[node2][DEBUG ] find the location of an executable

[ceph_deploy.mon][INFO ] distro info: CentOS Linux 7.2.1511 Core

[node2][DEBUG ] determining if provided host has same hostname in remote

[node2][DEBUG ] get remote short hostname

[node2][DEBUG ] deploying mon to node2

[node2][DEBUG ] get remote short hostname

[node2][DEBUG ] remote hostname: node2

[node2][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[node2][DEBUG ] create the mon path if it does not exist

[node2][DEBUG ] checking for done path: /var/lib/ceph/mon/ceph-node2/done

[node2][DEBUG ] done path does not exist: /var/lib/ceph/mon/ceph-node2/done

[node2][INFO ] creating keyring file: /var/lib/ceph/tmp/ceph-node2.mon.keyring

[node2][DEBUG ] create the monitor keyring file

[node2][INFO ] Running command: sudo ceph-mon --cluster ceph --mkfs -i node2 --keyring /var/lib/ceph/tmp/ceph-node2.mon.keyring --setuser 1001 --setgroup 1001

[node2][DEBUG ] ceph-mon: mon.noname-b 192.168.92.102:6789/0 is local, renaming to mon.node2

[node2][DEBUG ] ceph-mon: set fsid to 4f8f6c46-9f67-4475-9cb5-52cafecb3e4c

[node2][DEBUG ] ceph-mon: created monfs at /var/lib/ceph/mon/ceph-node2 for mon.node2

[node2][INFO ] unlinking keyring file /var/lib/ceph/tmp/ceph-node2.mon.keyring

[node2][DEBUG ] create a done file to avoid re-doing the mon deployment

[node2][DEBUG ] create the init path if it does not exist

[node2][INFO ] Running command: sudo systemctl enable ceph.target

[node2][INFO ] Running command: sudo systemctl enable ceph-mon@node2

[node2][WARNIN] Created symlink from /etc/systemd/system/ceph-mon.target.wants/ceph-mon@node2.service to /usr/lib/systemd/system/ceph-mon@.service.

[node2][INFO ] Running command: sudo systemctl start ceph-mon@node2

[node2][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node2.asok mon_status

[node2][DEBUG ] ********************************************************************************

[node2][DEBUG ] status for monitor: mon.node2

[node2][DEBUG ] {

[node2][DEBUG ] "election_epoch": 1,

[node2][DEBUG ] "extra_probe_peers": [

[node2][DEBUG ] "192.168.92.101:6789/0",

[node2][DEBUG ] "192.168.92.103:6789/0"

[node2][DEBUG ] ],

[node2][DEBUG ] "monmap": {

[node2][DEBUG ] "created": "2016-06-24 14:43:34.865908",

[node2][DEBUG ] "epoch": 0,

[node2][DEBUG ] "fsid": "4f8f6c46-9f67-4475-9cb5-52cafecb3e4c",

[node2][DEBUG ] "modified": "2016-06-24 14:43:34.865908",

[node2][DEBUG ] "mons": [

[node2][DEBUG ] {

[node2][DEBUG ] "addr":"192.168.92.101:6789/0",

[node2][DEBUG ] "name": "node1",

[node2][DEBUG ] "rank": 0

[node2][DEBUG ] },

[node2][DEBUG ] {

[node2][DEBUG ] "addr":"192.168.92.102:6789/0",

[node2][DEBUG ] "name": "node2",

[node2][DEBUG ] "rank": 1

[node2][DEBUG ] },

[node2][DEBUG ] {

[node2][DEBUG ] "addr":"0.0.0.0:0/2",

[node2][DEBUG ] "name": "node3",

[node2][DEBUG ] "rank": 2

[node2][DEBUG ] }

[node2][DEBUG ] ]

[node2][DEBUG ] },

[node2][DEBUG ] "name": "node2",

[node2][DEBUG ] "outside_quorum": [],

[node2][DEBUG ] "quorum": [],

[node2][DEBUG ] "rank": 1,

[node2][DEBUG ] "state": "electing",

[node2][DEBUG ] "sync_provider": []

[node2][DEBUG ] }

[node2][DEBUG ] ********************************************************************************

[node2][INFO ] monitor: mon.node2 is running

[node2][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node2.asok mon_status

[ceph_deploy.mon][DEBUG ] detecting platform for host node3 ...

[node3][DEBUG ] connection detected need for sudo

[node3][DEBUG ] connected to host: node3

[node3][DEBUG ] detect platform information from remote host

[node3][DEBUG ] detect machine type

[node3][DEBUG ] find the location of an executable

[ceph_deploy.mon][INFO ] distro info: CentOS Linux 7.2.1511 Core

[node3][DEBUG ] determining if provided host has same hostname in remote

[node3][DEBUG ] get remote short hostname

[node3][DEBUG ] deploying mon to node3

[node3][DEBUG ] get remote short hostname

[node3][DEBUG ] remote hostname: node3

[node3][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[node3][DEBUG ] create the mon path if it does not exist

[node3][DEBUG ] checking for done path: /var/lib/ceph/mon/ceph-node3/done

[node3][DEBUG ] done path does not exist: /var/lib/ceph/mon/ceph-node3/done

[node3][INFO ] creating keyring file: /var/lib/ceph/tmp/ceph-node3.mon.keyring

[node3][DEBUG ] create the monitor keyring file

[node3][INFO ] Running command: sudo ceph-mon --cluster ceph --mkfs -i node3 --keyring /var/lib/ceph/tmp/ceph-node3.mon.keyring --setuser 1001 --setgroup 1001

[node3][DEBUG ] ceph-mon: mon.noname-c 192.168.92.103:6789/0 is local, renaming to mon.node3

[node3][DEBUG ] ceph-mon: set fsid to 4f8f6c46-9f67-4475-9cb5-52cafecb3e4c

[node3][DEBUG ] ceph-mon: created monfs at /var/lib/ceph/mon/ceph-node3 for mon.node3

[node3][INFO ] unlinking keyring file /var/lib/ceph/tmp/ceph-node3.mon.keyring

[node3][DEBUG ] create a done file to avoid re-doing the mon deployment

[node3][DEBUG ] create the init path if it does not exist

[node3][INFO ] Running command: sudo systemctl enable ceph.target

[node3][INFO ] Running command: sudo systemctl enable ceph-mon@node3

[node3][WARNIN] Created symlink from /etc/systemd/system/ceph-mon.target.wants/ceph-mon@node3.service to /usr/lib/systemd/system/ceph-mon@.service.

[node3][INFO ] Running command: sudo systemctl start ceph-mon@node3

[node3][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node3.asok mon_status

[node3][DEBUG ] ********************************************************************************

[node3][DEBUG ] status for monitor: mon.node3

[node3][DEBUG ] {

[node3][DEBUG ] "election_epoch": 1,

[node3][DEBUG ] "extra_probe_peers": [

[node3][DEBUG ] "192.168.92.101:6789/0",

[node3][DEBUG ] "192.168.92.102:6789/0"

[node3][DEBUG ] ],

[node3][DEBUG ] "monmap": {

[node3][DEBUG ] "created": "2016-06-24 14:43:39.800046",

[node3][DEBUG ] "epoch": 0,

[node3][DEBUG ] "fsid": "4f8f6c46-9f67-4475-9cb5-52cafecb3e4c",

[node3][DEBUG ] "modified": "2016-06-24 14:43:39.800046",

[node3][DEBUG ] "mons": [

[node3][DEBUG ] {

[node3][DEBUG ] "addr":"192.168.92.101:6789/0",

[node3][DEBUG ] "name": "node1",

[node3][DEBUG ] "rank": 0

[node3][DEBUG ] },

[node3][DEBUG ] {

[node3][DEBUG ] "addr":"192.168.92.102:6789/0",

[node3][DEBUG ] "name": "node2",

[node3][DEBUG ] "rank": 1

[node3][DEBUG ] },

[node3][DEBUG ] {

[node3][DEBUG ] "addr":"192.168.92.103:6789/0",

[node3][DEBUG ] "name": "node3",

[node3][DEBUG ] "rank": 2

[node3][DEBUG ] }

[node3][DEBUG ] ]

[node3][DEBUG ] },

[node3][DEBUG ] "name": "node3",

[node3][DEBUG ] "outside_quorum": [],

[node3][DEBUG ] "quorum": [],

[node3][DEBUG ] "rank": 2,

[node3][DEBUG ] "state": "electing",

[node3][DEBUG ] "sync_provider": []

[node3][DEBUG ] }

[node3][DEBUG ] ********************************************************************************

[node3][INFO ] monitor: mon.node3 is running

[node3][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node3.asok mon_status

[ceph_deploy.mon][INFO ] processing monitor mon.node1

[node1][DEBUG ] connection detected need for sudo

[node1][DEBUG ] connected to host: node1

[node1][DEBUG ] detect platform information from remote host

[node1][DEBUG ] detect machine type

[node1][DEBUG ] find the location of an executable

[node1][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node1.asok mon_status

[ceph_deploy.mon][WARNIN] mon.node1 monitor is not yet in quorum, tries left: 5

[ceph_deploy.mon][WARNIN] waiting 5 seconds before retrying

[node1][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node1.asok mon_status

[ceph_deploy.mon][WARNIN] mon.node1 monitor is not yet in quorum, tries left: 4

[ceph_deploy.mon][WARNIN] waiting 10 seconds before retrying

[node1][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node1.asok mon_status

[ceph_deploy.mon][INFO ] mon.node1 monitor has reached quorum!

[ceph_deploy.mon][INFO ] processing monitor mon.node2

[node2][DEBUG ] connection detected need for sudo

[node2][DEBUG ] connected to host: node2

[node2][DEBUG ] detect platform information from remote host

[node2][DEBUG ] detect machine type

[node2][DEBUG ] find the location of an executable

[node2][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node2.asok mon_status

[ceph_deploy.mon][INFO ] mon.node2 monitor has reached quorum!

[ceph_deploy.mon][INFO ] processing monitor mon.node3

[node3][DEBUG ] connection detected need for sudo

[node3][DEBUG ] connected to host: node3

[node3][DEBUG ] detect platform information from remote host

[node3][DEBUG ] detect machine type

[node3][DEBUG ] find the location of an executable

[node3][INFO ] Running command: sudo ceph --cluster=ceph --admin-daemon /var/run/ceph/ceph-mon.node3.asok mon_status

[ceph_deploy.mon][INFO ] mon.node3 monitor has reached quorum!

[ceph_deploy.mon][INFO ] all initial monitors are running and have formed quorum

[ceph_deploy.mon][INFO ] Running gatherkeys...

[ceph_deploy.gatherkeys][INFO ] Storing keys in temp directory /tmp/tmp5_jcSr

[node1][DEBUG ] connection detected need for sudo

[node1][DEBUG ] connected to host: node1

[node1][DEBUG ] detect platform information from remote host

[node1][DEBUG ] detect machine type

[node1][DEBUG ] get remote short hostname

[node1][DEBUG ] fetch remote file

[node1][INFO ] Running command: sudo /usr/bin/ceph --connect-timeout=25 --cluster=ceph --admin-daemon=/var/run/ceph/ceph-mon.node1.asok mon_status

[node1][INFO ] Running command: sudo /usr/bin/ceph --connect-timeout=25 --cluster=ceph --name mon. --keyring=/var/lib/ceph/mon/ceph-node1/keyring auth get-or-create client.admin osd allow * mds allow * mon allow *

[node1][INFO ] Running command: sudo /usr/bin/ceph --connect-timeout=25 --cluster=ceph --name mon. --keyring=/var/lib/ceph/mon/ceph-node1/keyring auth get-or-create client.bootstrap-mds mon allow profile bootstrap-mds

[node1][INFO ] Running command: sudo /usr/bin/ceph --connect-timeout=25 --cluster=ceph --name mon. --keyring=/var/lib/ceph/mon/ceph-node1/keyring auth get-or-create client.bootstrap-osd mon allow profile bootstrap-osd

[node1][INFO ] Running command: sudo /usr/bin/ceph --connect-timeout=25 --cluster=ceph --name mon. --keyring=/var/lib/ceph/mon/ceph-node1/keyring auth get-or-create client.bootstrap-rgw mon allow profile bootstrap-rgw

[ceph_deploy.gatherkeys][INFO ] Storing ceph.client.admin.keyring

[ceph_deploy.gatherkeys][INFO ] Storing ceph.bootstrap-mds.keyring

[ceph_deploy.gatherkeys][INFO ] keyring 'ceph.mon.keyring' already exists

[ceph_deploy.gatherkeys][INFO ] Storing ceph.bootstrap-osd.keyring

[ceph_deploy.gatherkeys][INFO ] Storing ceph.bootstrap-rgw.keyring

[ceph_deploy.gatherkeys][INFO ] Destroy temp directory /tmp/tmp5_jcSr

[ceph@node0 cluster]$

View the generated files:

[ceph@node0 cluster]$ ls

ceph.bootstrap-mds.keyring ceph.bootstrap-rgw.keyring ceph.conf ceph.mon.keyring

ceph.bootstrap-osd.keyring ceph.client.admin.keyring ceph-deploy-ceph.log

[ceph@node0 cluster]$
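The listing above can be checked with a few lines of shell before moving on to the OSDs. This is only a sketch: the function name check_keyrings is made up for illustration and is not part of ceph-deploy, and the file list is simply copied from the ls output above.

```shell
#!/bin/sh
# check_keyrings DIR: report every expected ceph-deploy output file that is
# missing from DIR (default: current directory). Returns non-zero if any
# file is missing. The name check_keyrings is hypothetical.
check_keyrings() {
    dir=${1:-.}
    missing=0
    for f in ceph.conf ceph.mon.keyring ceph.client.admin.keyring \
             ceph.bootstrap-osd.keyring ceph.bootstrap-mds.keyring \
             ceph.bootstrap-rgw.keyring; do
        if [ ! -f "$dir/$f" ]; then
            echo "missing: $f"
            missing=1
        fi
    done
    return "$missing"
}
```

Run from the cluster directory on node0, `check_keyrings .` prints nothing and returns 0 when all six files are present.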

Chapter 2 OSD Operations

2.1 Adding OSDs

2.1.1 Initializing the OSD

Command:

ceph-deploy osd prepare {ceph-node}:/path/to/directory

Example, using the OSD directories planned in section 1.2.3:

[ceph@node0 cluster]$ ceph-deploy osd prepare node2:/var/local/osd0 node3:/var/local/osd0

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.34): /usr/bin/ceph-deploy osd prepare node2:/var/local/osd0 node3:/var/local/osd0

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username : None

[ceph_deploy.cli][INFO ] disk : [('node2', '/var/local/osd0', None), ('node3', '/var/local/osd0', None)]

[ceph_deploy.cli][INFO ] dmcrypt : False

[ceph_deploy.cli][INFO ] verbose : False

[ceph_deploy.cli][INFO ] bluestore : None

[ceph_deploy.cli][INFO ] overwrite_conf : False

[ceph_deploy.cli][INFO ] subcommand : prepare

[ceph_deploy.cli][INFO ] dmcrypt_key_dir : /etc/ceph/dmcrypt-keys

[ceph_deploy.cli][INFO ] quiet : False

[ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x12dddd0>

[ceph_deploy.cli][INFO ] cluster : ceph

[ceph_deploy.cli][INFO ] fs_type : xfs

[ceph_deploy.cli][INFO ] func : <function osd at 0x12d2398>

[ceph_deploy.cli][INFO ] ceph_conf : None

[ceph_deploy.cli][INFO ] default_release : False

[ceph_deploy.cli][INFO ] zap_disk : False

[ceph_deploy.osd][DEBUG ] Preparing cluster ceph disks node2:/var/local/osd0: node3:/var/local/osd0:

[node2][DEBUG ] connection detected need for sudo

[node2][DEBUG ] connected to host: node2

[node2][DEBUG ] detect platform information from remote host

[node2][DEBUG ] detect machine type

[node2][DEBUG ] find the location of an executable

[ceph_deploy.osd][INFO ] Distro info: CentOS Linux 7.2.1511 Core

[ceph_deploy.osd][DEBUG ] Deploying osd to node2

[node2][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.osd][DEBUG ] Preparing host node2 disk /var/local/osd0 journal None activate False

[node2][DEBUG ] find the location of an executable

[node2][INFO ] Running command: sudo /usr/sbin/ceph-disk -v prepare --cluster ceph --fs-type xfs -- /var/local/osd0

[node2][WARNIN] command: Running command: /usr/bin/ceph-osd --cluster=ceph --show-config-value=fsid

[node2][WARNIN] command: Running command: /usr/bin/ceph-osd --check-allows-journal -i 0 --cluster ceph

[node2][WARNIN] command: Running command: /usr/bin/ceph-osd --check-wants-journal -i 0 --cluster ceph

[node2][WARNIN] command: Running command: /usr/bin/ceph-osd --check-needs-journal -i 0 --cluster ceph

[node2][WARNIN] command: Running command: /usr/bin/ceph-osd --cluster=ceph --show-config-value=osd_journal_size

[node2][WARNIN] populate_data_path: Preparing osd data dir /var/local/osd0

[node2][WARNIN] command: Running command: /sbin/restorecon -R /var/local/osd0/ceph_fsid.3504.tmp

[node2][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/local/osd0/ceph_fsid.3504.tmp

[node2][WARNIN] command: Running command: /sbin/restorecon -R /var/local/osd0/fsid.3504.tmp

[node2][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/local/osd0/fsid.3504.tmp

[node2][WARNIN] command: Running command: /sbin/restorecon -R /var/local/osd0/magic.3504.tmp

[node2][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/local/osd0/magic.3504.tmp

[node2][INFO ] checking OSD status...

[node2][DEBUG ] find the location of an executable

[node2][INFO ] Running command: sudo /bin/ceph --cluster=ceph osd stat --format=json

[ceph_deploy.osd][DEBUG ] Host node2 is now ready for osd use.

[node3][DEBUG ] connection detected need for sudo

[node3][DEBUG ] connected to host: node3

[node3][DEBUG ] detect platform information from remote host

[node3][DEBUG ] detect machine type

[node3][DEBUG ] find the location of an executable

[ceph_deploy.osd][INFO ] Distro info: CentOS Linux 7.2.1511 Core

[ceph_deploy.osd][DEBUG ] Deploying osd to node3

[node3][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.osd][DEBUG ] Preparing host node3 disk /var/local/osd0 journal None activate False

[node3][DEBUG ] find the location of an executable

[node3][INFO ] Running command: sudo /usr/sbin/ceph-disk -v prepare --cluster ceph --fs-type xfs -- /var/local/osd0

[node3][WARNIN] command: Running command: /usr/bin/ceph-osd --cluster=ceph --show-config-value=fsid

[node3][WARNIN] command: Running command: /usr/bin/ceph-osd --check-allows-journal -i 0 --cluster ceph

[node3][WARNIN] command: Running command: /usr/bin/ceph-osd --check-wants-journal -i 0 --cluster ceph

[node3][WARNIN] command: Running command: /usr/bin/ceph-osd --check-needs-journal -i 0 --cluster ceph

[node3][WARNIN] command: Running command: /usr/bin/ceph-osd --cluster=ceph --show-config-value=osd_journal_size

[node3][WARNIN] populate_data_path: Preparing osd data dir /var/local/osd0

[node3][WARNIN] command: Running command: /sbin/restorecon -R /var/local/osd0/ceph_fsid.3553.tmp

[node3][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/local/osd0/ceph_fsid.3553.tmp

[node3][WARNIN] command: Running command: /sbin/restorecon -R /var/local/osd0/fsid.3553.tmp

[node3][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/local/osd0/fsid.3553.tmp

[node3][WARNIN] command: Running command: /sbin/restorecon -R /var/local/osd0/magic.3553.tmp

[node3][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/local/osd0/magic.3553.tmp

[node3][INFO ] checking OSD status...

[node3][DEBUG ] find the location of an executable

[node3][INFO ] Running command: sudo /bin/ceph --cluster=ceph osd stat --format=json

[ceph_deploy.osd][DEBUG ] Host node3 is now ready for osd use.

[ceph@node0 cluster]$

2.1.2 Activating the OSD

Command:

ceph-deploy osd activate {ceph-node}:/path/to/directory

Example:

[ceph@node0 cluster]$ ceph-deploy osd activate node2:/var/local/osd0 node3:/var/local/osd0

Check the cluster status:

[ceph@node1 ~]$ ceph -s

cluster 4f8f6c46-9f67-4475-9cb5-52cafecb3e4c

health HEALTH_WARN

64 pgs degraded

64 pgs stuck unclean

64 pgs undersized

mon.node2 low disk space

mon.node3 low disk space

monmap e1: 3 mons at {node1=192.168.92.101:6789/0,node2=192.168.92.102:6789/0,node3=192.168.92.103:6789/0}

election epoch 18, quorum 0,1,2 node1,node2,node3

osdmap e12: 3 osds: 3 up, 3 in

flags sortbitwise

pgmap v173: 64 pgs, 1 pools, 0 bytes data, 0 objects

20254 MB used, 22120 MB / 42374 MB avail

64 active+undersized+degraded

[ceph@node1 ~]$
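The status above reports HEALTH_WARN, so it is worth checking the health line automatically after each OSD change. The following is a minimal sketch that assumes the `ceph -s` layout shown above (a line whose first word is "health"); the function name health_ok is made up for illustration.

```shell
#!/bin/sh
# health_ok: read `ceph -s` output on stdin, print the value that follows
# "health", and succeed only when that value is HEALTH_OK.
# Assumes the status layout shown above; the function name is hypothetical.
health_ok() {
    health=$(awk '$1 == "health" { print $2; exit }')
    echo "${health:-unknown}"
    [ "$health" = "HEALTH_OK" ]
}
```

For example, `ceph -s | health_ok` would print HEALTH_WARN and return non-zero for the cluster state shown above.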

2.1.2.1 creating empty object store in *: (13) Permission denied

Error log:

[node2][WARNIN] ceph_disk.main.Error: Error: ['ceph-osd', '--cluster', 'ceph', '--mkfs', '--mkkey', '-i', '0', '--monmap', '/var/local/osd0/activate.monmap', '--osd-data', '/var/local/osd0', '--osd-journal', '/var/local/osd0/journal', '--osd-uuid', '76f06d28-7e0d-4894-8625-4f55d43962bf', '--keyring', '/var/local/osd0/keyring', '--setuser', 'ceph', '--setgroup', 'ceph'] failed : 2016-06-24 15:31:39.931825 7fd1150c1800 -1 filestore(/var/local/osd0) mkfs: write_version_stamp() failed: (13) Permission denied

[node2][WARNIN] 2016-06-24 15:31:39.931861 7fd1150c1800 -1 OSD::mkfs: ObjectStore::mkfs failed with error -13

[node2][WARNIN] 2016-06-24 15:31:39.932024 7fd1150c1800 -1 ** ERROR: error creating empty object store in /var/local/osd0: (13) Permission denied

[node2][WARNIN]

[node2][ERROR] RuntimeError: command returned non-zero exit status: 1

[ceph_deploy][ERROR] RuntimeError: Failed to execute command: /usr/sbin/ceph-disk -v activate --mark-init systemd --mount /var/local/osd0

Solution:

The fix is simple: change the owner and group of every disk (or directory) used by the Ceph cluster to ceph.

chown ceph:ceph /var/local/osd0

[ceph@node0 cluster]$ ssh node2 "sudo chown ceph:ceph /var/local/osd0"

[ceph@node0 cluster]$ ssh node3 "sudo chown ceph:ceph /var/local/osd0"
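Before re-running the activate step, it can help to confirm that the ownership change actually took effect. The helpers below are a sketch under the assumption that GNU coreutils stat is available (as on CentOS 7); the names owner_of and needs_chown are made up for illustration.

```shell
#!/bin/sh
# owner_of PATH: print "user:group" for PATH.
# Relies on GNU stat's -c option; the function name is hypothetical.
owner_of() {
    stat -c '%U:%G' -- "$1"
}

# needs_chown PATH OWNER: succeed when PATH is NOT owned by OWNER,
# where OWNER is written as "user:group" (for example ceph:ceph).
needs_chown() {
    [ "$(owner_of "$1")" != "$2" ]
}
```

On an OSD node, `needs_chown /var/local/osd0 ceph:ceph && echo "still wrong"` should print nothing once the chown above has been applied.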

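Follow-up issue:

After this fix, the disk permissions are reset when the system reboots, and the OSD service then fails to start. This permission problem is a nasty trap. As a workaround, I wrote a for loop and added it to rc.local so that the disk ownership is corrected automatically at every boot:

for i in a b c d e f g h i j k l; do chown ceph:ceph /dev/sd"$i"*; done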
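The loop above touches every /dev/sd* device, including the system disk, and prints an error for letters that do not exist. A narrower variant is sketched below: it takes an explicit device list, skips paths that are absent, and supports a dry run. The function name fix_osd_owner and the DRY_RUN variable are made up for illustration, and the device names in the usage note are assumptions based on the /dev/sdb example in this chapter.

```shell
#!/bin/sh
# fix_osd_owner DEVICE...: chown each existing DEVICE to ceph:ceph.
# Set DRY_RUN=1 to print the chown commands instead of running them.
# Both the function name and DRY_RUN are hypothetical.
fix_osd_owner() {
    for dev in "$@"; do
        [ -e "$dev" ] || continue      # skip devices that are not present
        if [ "${DRY_RUN:-0}" = 1 ]; then
            echo "chown ceph:ceph $dev"
        else
            chown ceph:ceph "$dev"
        fi
    done
}
```

In rc.local this could be called as `fix_osd_owner /dev/sdb /dev/sdb1 /dev/sdb2`, listing only the devices that were handed to Ceph.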

2.1.3 Example: /dev/sdb

2.1.3.1 Initialization

[ceph@node0 cluster]$ ceph-deploy osd prepare node1:/dev/sdb

[ceph_deploy.conf][DEBUG ] found configuration file at: /home/ceph/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.34): /usr/bin/ceph-deploy osd prepare node1:/dev/sdb

[ceph_deploy.cli][INFO ] ceph-deploy options:

[ceph_deploy.cli][INFO ] username : None

[ceph_deploy.cli][INFO ] disk : [('node1', '/dev/sdb', None)]

[ceph_deploy.cli][INFO ] dmcrypt : False

[ceph_deploy.cli][INFO ] verbose : False

[ceph_deploy.cli][INFO ] bluestore : None

[ceph_deploy.cli][INFO ] overwrite_conf : False

[ceph_deploy.cli][INFO ] subcommand : prepare

[ceph_deploy.cli][INFO ] dmcrypt_key_dir : /etc/ceph/dmcrypt-keys

[ceph_deploy.cli][INFO ] quiet : False

[ceph_deploy.cli][INFO ] cd_conf : <ceph_deploy.conf.cephdeploy.Conf instance at 0x1acfdd0>

[ceph_deploy.cli][INFO ] cluster : ceph

[ceph_deploy.cli][INFO ] fs_type : xfs

[ceph_deploy.cli][INFO ] func : <function osd at 0x1ac4398>

[ceph_deploy.cli][INFO ] ceph_conf : None

[ceph_deploy.cli][INFO ] default_release : False

[ceph_deploy.cli][INFO ] zap_disk : False

[ceph_deploy.osd][DEBUG ] Preparing cluster ceph disks node1:/dev/sdb:

[node1][DEBUG ] connection detected need for sudo

[node1][DEBUG ] connected to host: node1

[node1][DEBUG ] detect platform information from remote host

[node1][DEBUG ] detect machine type

[node1][DEBUG ] find the location of an executable

[ceph_deploy.osd][INFO ] Distro info: CentOS Linux 7.2.1511 Core

[ceph_deploy.osd][DEBUG ] Deploying osd to node1

[node1][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

[ceph_deploy.osd][DEBUG ] Preparing host node1 disk /dev/sdb journal None activate False

[node1][DEBUG ] find the location of an executable

[node1][INFO ] Running command: sudo /usr/sbin/ceph-disk -v prepare --cluster ceph --fs-type xfs -- /dev/sdb

[node1][WARNIN] command: Running command: /usr/bin/ceph-osd --cluster=ceph --show-config-value=fsid

[node1][WARNIN] command: Running command: /usr/bin/ceph-osd --check-allows-journal -i 0 --cluster ceph

[node1][WARNIN] command: Running command: /usr/bin/ceph-osd --check-wants-journal -i 0 --cluster ceph

[node1][WARNIN] command: Running command: /usr/bin/ceph-osd --check-needs-journal -i 0 --cluster ceph

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] set_type: Will colocate journal with data on /dev/sdb

[node1][WARNIN] command: Running command: /usr/bin/ceph-osd --cluster=ceph --show-config-value=osd_journal_size

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] command: Running command: /usr/bin/ceph-conf --cluster=ceph --name=osd. --lookup osd_mkfs_options_xfs

[node1][WARNIN] command: Running command: /usr/bin/ceph-conf --cluster=ceph --name=osd. --lookup osd_fs_mkfs_options_xfs

[node1][WARNIN] command: Running command: /usr/bin/ceph-conf --cluster=ceph --name=osd. --lookup osd_mount_options_xfs

[node1][WARNIN] command: Running command: /usr/bin/ceph-conf --cluster=ceph --name=osd. --lookup osd_fs_mount_options_xfs

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] ptype_tobe_for_name: name = journal

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] create_partition: Creating journal partition num 2 size 5120 on /dev/sdb

[node1][WARNIN] command_check_call: Running command: /sbin/sgdisk --new=2:0:+5120M --change-name=2:ceph journal --partition-guid=2:ddc560cc-f7b8-40fb-8f19-006ae2ef03a2 --typecode=2:45b0969e-9b03-4f30-b4c6-b4b80ceff106 --mbrtogpt -- /dev/sdb

[node1][DEBUG ] Creating new GPT entries.

[node1][DEBUG ] The operation has completed successfully.

[node1][WARNIN] update_partition: Calling partprobe on created device /dev/sdb

[node1][WARNIN] command_check_call: Running command: /usr/bin/udevadm settle --timeout=600

[node1][WARNIN] command: Running command: /sbin/partprobe /dev/sdb

[node1][WARNIN] command_check_call: Running command: /usr/bin/udevadm settle --timeout=600

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb2 uuid path is /sys/dev/block/8:18/dm/uuid

[node1][WARNIN] prepare_device: Journal is GPT partition /dev/disk/by-partuuid/ddc560cc-f7b8-40fb-8f19-006ae2ef03a2

[node1][WARNIN] prepare_device: Journal is GPT partition /dev/disk/by-partuuid/ddc560cc-f7b8-40fb-8f19-006ae2ef03a2

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] set_data_partition: Creating osd partition on /dev/sdb

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] ptype_tobe_for_name: name = data

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] create_partition: Creating data partition num 1 size 0 on /dev/sdb

[node1][WARNIN] command_check_call: Running command: /sbin/sgdisk --largest-new=1 --change-name=1:ceph data --partition-guid=1:805bfdb4-97b8-48e7-a42e-a734a47aa533 --typecode=1:89c57f98-2fe5-4dc0-89c1-f3ad0ceff2be --mbrtogpt -- /dev/sdb

[node1][DEBUG ] The operation has completed successfully.

[node1][WARNIN] update_partition: Calling partprobe on created device /dev/sdb

[node1][WARNIN] command_check_call: Running command: /usr/bin/udevadm settle --timeout=600

[node1][WARNIN] command: Running command: /sbin/partprobe /dev/sdb

[node1][WARNIN] command_check_call: Running command: /usr/bin/udevadm settle --timeout=600

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb1 uuid path is /sys/dev/block/8:17/dm/uuid

[node1][WARNIN] populate_data_path_device: Creating xfs fs on /dev/sdb1

[node1][WARNIN] command_check_call: Running command: /sbin/mkfs -t xfs -f -i size=2048 -- /dev/sdb1

[node1][DEBUG ] meta-data=/dev/sdb1 isize=2048 agcount=4, agsize=982975 blks

[node1][DEBUG ] = sectsz=512 attr=2, projid32bit=1

[node1][DEBUG ] = crc=0 finobt=0

[node1][DEBUG ] data = bsize=4096 blocks=3931899, imaxpct=25

[node1][DEBUG ] = sunit=0 swidth=0 blks

[node1][DEBUG ] naming =version 2 bsize=4096 ascii-ci=0 ftype=0

[node1][DEBUG ] log =internal log bsize=4096 blocks=2560, version=2

[node1][DEBUG ] = sectsz=512 sunit=0 blks, lazy-count=1

[node1][DEBUG ] realtime =none extsz=4096 blocks=0, rtextents=0

[node1][WARNIN] mount: Mounting /dev/sdb1 on /var/lib/ceph/tmp/mnt.9sdF7v with options noatime,inode64

[node1][WARNIN] command_check_call: Running command: /usr/bin/mount -t xfs -o noatime,inode64 -- /dev/sdb1 /var/lib/ceph/tmp/mnt.9sdF7v

[node1][WARNIN] command: Running command: /sbin/restorecon /var/lib/ceph/tmp/mnt.9sdF7v

[node1][WARNIN] populate_data_path: Preparing osd data dir /var/lib/ceph/tmp/mnt.9sdF7v

[node1][WARNIN] command: Running command: /sbin/restorecon -R /var/lib/ceph/tmp/mnt.9sdF7v/ceph_fsid.5102.tmp

[node1][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/lib/ceph/tmp/mnt.9sdF7v/ceph_fsid.5102.tmp

[node1][WARNIN] command: Running command: /sbin/restorecon -R /var/lib/ceph/tmp/mnt.9sdF7v/fsid.5102.tmp

[node1][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/lib/ceph/tmp/mnt.9sdF7v/fsid.5102.tmp

[node1][WARNIN] command: Running command: /sbin/restorecon -R /var/lib/ceph/tmp/mnt.9sdF7v/magic.5102.tmp

[node1][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/lib/ceph/tmp/mnt.9sdF7v/magic.5102.tmp

[node1][WARNIN] command: Running command: /sbin/restorecon -R /var/lib/ceph/tmp/mnt.9sdF7v/journal_uuid.5102.tmp

[node1][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/lib/ceph/tmp/mnt.9sdF7v/journal_uuid.5102.tmp

[node1][WARNIN] adjust_symlink: Creating symlink /var/lib/ceph/tmp/mnt.9sdF7v/journal -> /dev/disk/by-partuuid/ddc560cc-f7b8-40fb-8f19-006ae2ef03a2

[node1][WARNIN] command: Running command: /sbin/restorecon -R /var/lib/ceph/tmp/mnt.9sdF7v

[node1][WARNIN] command: Running command: /usr/bin/chown -R ceph:ceph /var/lib/ceph/tmp/mnt.9sdF7v

[node1][WARNIN] unmount: Unmounting /var/lib/ceph/tmp/mnt.9sdF7v

[node1][WARNIN] command_check_call: Running command: /bin/umount -- /var/lib/ceph/tmp/mnt.9sdF7v

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] command_check_call: Running command: /sbin/sgdisk --typecode=1:4fbd7e29-9d25-41b8-afd0-062c0ceff05d -- /dev/sdb

[node1][DEBUG ] Warning: The kernel is still using the old partition table.

[node1][DEBUG ] The new table will be used at the next reboot.

[node1][DEBUG ] The operation has completed successfully.

[node1][WARNIN] update_partition: Calling partprobe on prepared device /dev/sdb

[node1][WARNIN] command_check_call: Running command: /usr/bin/udevadm settle --timeout=600

[node1][WARNIN] command: Running command: /sbin/partprobe /dev/sdb

[node1][WARNIN] command_check_call: Running command: /usr/bin/udevadm settle --timeout=600

[node1][WARNIN] command_check_call: Running command: /usr/bin/udevadm trigger --action=add --sysname-match sdb1

[node1][INFO ] checking OSD status...

[node1][DEBUG ] find the location of an executable

[node1][INFO ] Running command: sudo /bin/ceph --cluster=ceph osd stat --format=json

[node1][WARNIN] there is 1 OSD down

[node1][WARNIN] there is 1 OSD out

[ceph_deploy.osd][DEBUG ] Host node1 is now ready for osd use.

[ceph@node0 cluster]$
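The three --typecode GUIDs passed to sgdisk in the log above mark the role of each partition. As far as I know they are fixed constants used by ceph-disk: one for the journal, one for a data partition that is still being prepared, and one for a data partition that is ready. The lookup below is a sketch for reading such logs; the function name ceph_ptype and the role labels are my own wording, not ceph-disk output.

```shell
#!/bin/sh
# ceph_ptype GUID: name the role of a GPT typecode seen in the prepare log.
# The GUIDs are copied from the sgdisk calls above; the labels and the
# function name are hypothetical.
ceph_ptype() {
    case $(printf '%s' "$1" | tr 'A-F' 'a-f') in
        45b0969e-9b03-4f30-b4c6-b4b80ceff106) echo "journal" ;;
        89c57f98-2fe5-4dc0-89c1-f3ad0ceff2be) echo "data (being prepared)" ;;
        4fbd7e29-9d25-41b8-afd0-062c0ceff05d) echo "data (ready)" ;;
        *) echo "unknown" ;;
    esac
}
```

Read this way, the log shows partition 2 created as the journal, partition 1 created with the "being prepared" type, and partition 1 switched to the "ready" type once the data directory has been populated.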

2.1.3.2 Activation

2.1.4 Cannot discover filesystem type

[ceph_deploy.osd][DEBUG ] will use init type: systemd

[node1][DEBUG ] find the location of an executable

[node1][INFO ] Running command: sudo /usr/sbin/ceph-disk -v activate --mark-init systemd --mount /dev/sdb

[node1][WARNIN] main_activate: path = /dev/sdb

[node1][WARNIN] get_dm_uuid: get_dm_uuid /dev/sdb uuid path is /sys/dev/block/8:16/dm/uuid

[node1][WARNIN] command: Running command: /sbin/blkid -p -s TYPE -o value -- /dev/sdb

[node1][WARNIN] Traceback (most recent call last):

[node1][WARNIN] File "/usr/sbin/ceph-disk", line 9, in <module>

[node1][WARNIN] load_entry_point('ceph-disk==1.0.0', 'console_scripts', 'ceph-disk')()

[node1][WARNIN] File "/usr/lib/python2.7/site-packages/ceph_disk/main.py", line 4994, in run

[node1][WARNIN] main(sys.argv[1:])

[node1][WARNIN] File "/usr/lib/python2.7/site-packages/ceph_disk/main.py", line 4945, in main

[node1][WARNIN] args.func(args)

[node1][WARNIN] File "/usr/lib/python2.7/site-packages/ceph_disk/main.py", line 3299, in main_activate

[node1][WARNIN] reactivate=args.reactivate,

[node1][WARNIN] File "/usr/lib/python2.7/site-packages/ceph_disk/main.py", line 3009, in mount_activate

[node1][WARNIN] e,

[node1][WARNIN] ceph_disk.main.FilesystemTypeError: Cannot discover filesystem type: device /dev/sdb: Line is truncated:

[node1][ERROR ] RuntimeError: command returned non-zero exit status: 1

[ceph_deploy][ERROR ] RuntimeError: Failed to execute command: /usr/sbin/ceph-disk -v activate --mark-init systemd --mount /dev/sdb
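The log shows ceph-disk running blkid against the whole disk /dev/sdb, which after the prepare step holds a partition table rather than a filesystem. A likely fix, offered here as an assumption and not something verified in this walkthrough, is to pass the data partition (and optionally the journal partition) to activate instead of the whole disk. The helper below only builds that argument; its name activate_args is made up, and it assumes the layout created in 2.1.3.1 (partition 1 is data, partition 2 is journal) with sdX-style partition names.

```shell
#!/bin/sh
# activate_args NODE DISK: print NODE:DATA_PARTITION:JOURNAL_PARTITION for
# use with `ceph-deploy osd activate`.
# Assumes partition 1 = data and partition 2 = journal, as created by the
# prepare step above, and sdX-style names (DISK followed by a number).
# The function name is hypothetical.
activate_args() {
    printf '%s:%s1:%s2\n' "$1" "$2" "$2"
}

# Example (not executed here):
#   ceph-deploy osd activate "$(activate_args node1 /dev/sdb)"
```

For the disk in this section the helper prints node1:/dev/sdb1:/dev/sdb2.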

2.2 Removing an OSD

[iyunv@ceph-osd-1 ceph-cluster]# ceph auth del osd.5

updated

[iyunv@ceph-osd-1 ceph-cluster]# ceph osd rm 5

removed osd.5
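The two commands above are the last steps of an OSD removal. The usual manual procedure also marks the OSD out, stops its daemon, and removes it from the CRUSH map first; those earlier steps are not shown in this walkthrough, so the sequence below is a sketch based on that assumption. It only prints the commands so they can be reviewed before being run; the function name osd_removal_plan is made up, and the systemctl unit name assumes a systemd-based host such as the CentOS 7 nodes used here.

```shell
#!/bin/sh
# osd_removal_plan ID: print, in order, the commands for removing osd.ID.
# Nothing is executed. The systemctl line must be run on the host that
# carries the OSD; the function name is hypothetical.
osd_removal_plan() {
    id=$1
    cat <<EOF
ceph osd out $id
sudo systemctl stop ceph-osd@$id
ceph osd crush remove osd.$id
ceph auth del osd.$id
ceph osd rm $id
EOF
}
```

Calling `osd_removal_plan 5` lists five commands ending with the `ceph auth del osd.5` and `ceph osd rm 5` steps shown above.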

Chapter 3 OSD Operations

|