I tested multicast on OpenStack, using an external router in this test.

OpenStack Environment:

Havana (ML2 + OVS)

Test Environment:

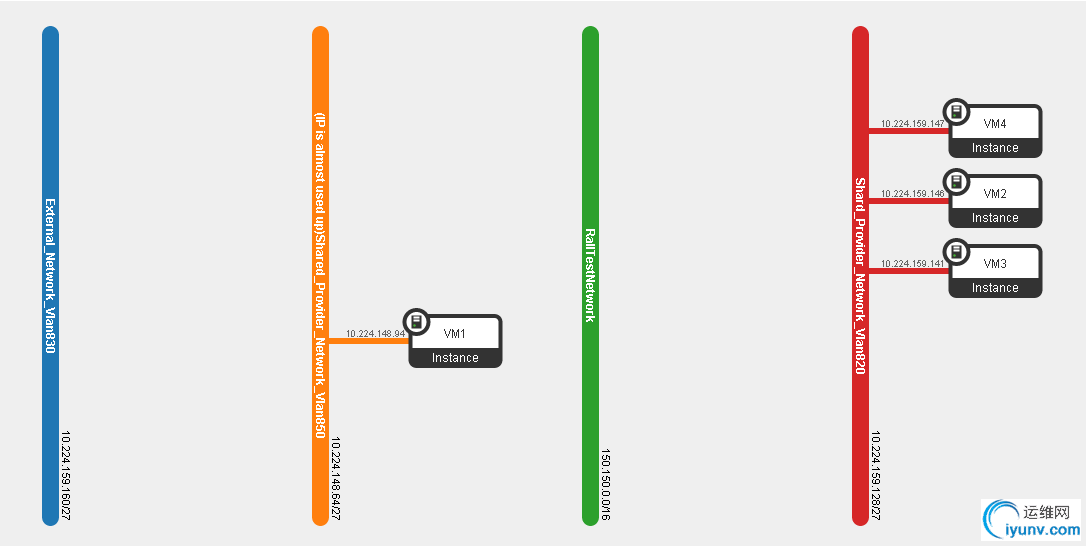

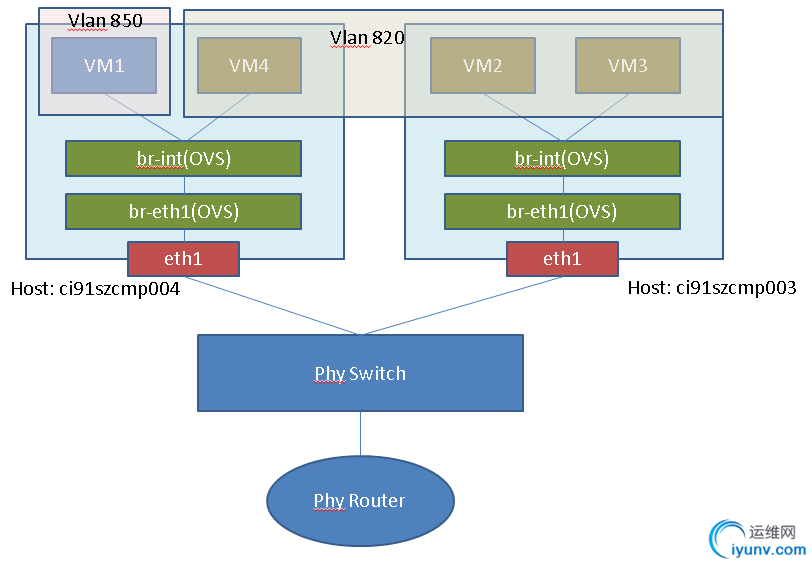

VM1 is in VLAN 850. VM2, VM3, and VM4 are in VLAN 820.

VM2 and VM3 are on the same compute node, ci91szcmp003; VM1 and VM4 are on another compute node, ci91szcmp004.

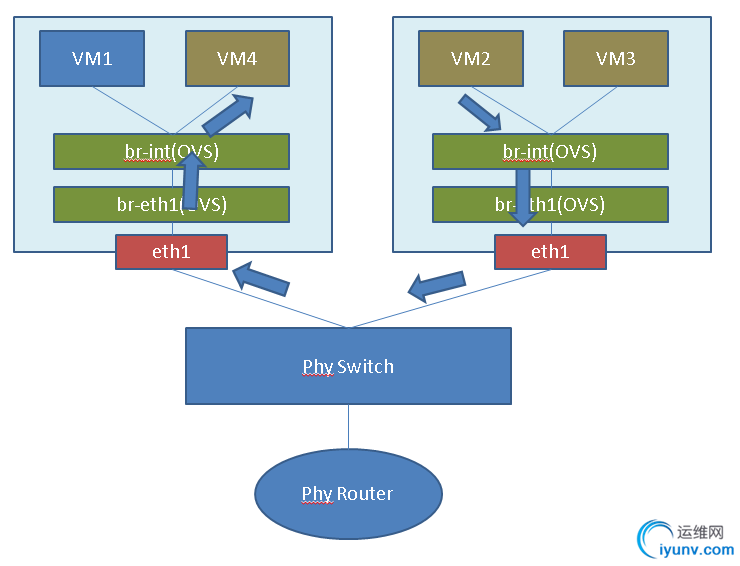

Here is the topology:

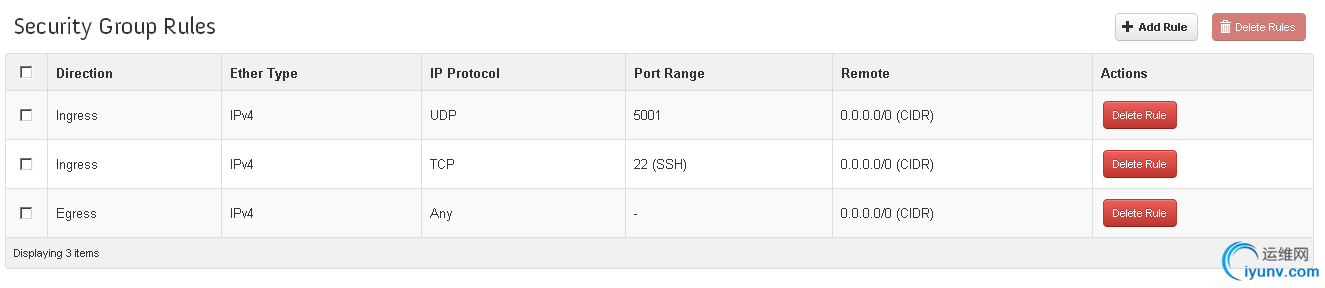

The security group I'm using is as follows; UDP 5001 is the port I used to test multicast packets:

Test Tool:

I use iperf to test multicast. You can install it on CentOS with the following command:

yum install iperf

If you do not know how to use this tool, see "man iperf" for more detail.

Simulate a multicast sender with the following command:

iperf -c 224.1.1.1 -u -T 32 -t 3 -i 1

Simulate a multicast receiver with the following command:

iperf -s -u -B 224.1.1.1 -i 1
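
To make clear what the two iperf commands do at the socket level, here is a minimal Python sketch of a receiver that joins the group and a sender that sets the multicast TTL. The function names (`build_mreq`, `open_receiver`, `send_once`) are hypothetical helpers written for this illustration; they are not part of iperf, and the sketch assumes a Linux host with a default multicast-capable interface.

```python
import socket
import struct

GROUP = "224.1.1.1"   # group address used in the iperf commands above
PORT = 5001           # UDP port seen in the iperf logs


def build_mreq(group: str, local_ip: str = "0.0.0.0") -> bytes:
    """Pack an ip_mreq structure: group address followed by local interface address."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(local_ip))


def open_receiver(group: str = GROUP, port: int = PORT) -> socket.socket:
    """Bind a UDP socket and join the group; the kernel sends the IGMP report."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP, build_mreq(group))
    return sock


def send_once(payload: bytes, group: str = GROUP, port: int = PORT, ttl: int = 32) -> None:
    """Send one datagram to the group with the given multicast TTL (like iperf -T 32)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_MULTICAST_TTL, struct.pack("b", ttl))
    sock.sendto(payload, (group, port))
    sock.close()
```

On the receiving VM you would call `open_receiver()` and then `recvfrom(2048)` on the returned socket; on the sending VM, `send_once(b"x" * 1470)`. Joining the group is the step that matters for the test cases below, because it is what a snooping switch or an IGMP-aware router reacts to.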

Test Cases and Results:

VMs in the same VLAN

Case #1:

VM2 sends multicast packets. VM3 and VM4 do not join the multicast group; I use tcpdump to see whether they still receive the multicast packets.

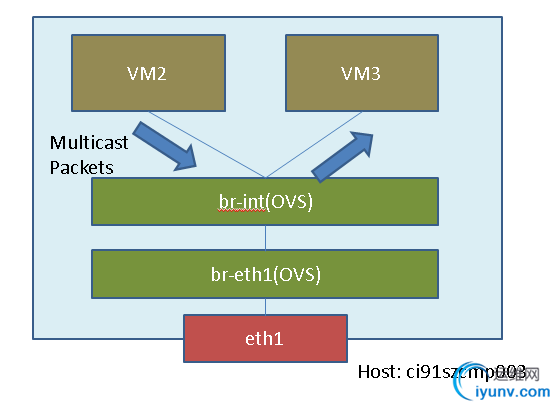

The multicast packet flow from VM2 to VM3 is VM2 -> OVS -> VM3.

The multicast packet flow from VM2 to VM4 is VM2 -> OVS -> Phy Switch -> OVS -> VM4.

Result and Log:

On VM2, I send multicast packets:

[iyunv@VM2 ~]# iperf -c 224.1.1.1 -u -T 32 -t 3 -i 1

------------------------------------------------------------

Client connecting to 224.1.1.1, UDP port 5001

Sending 1470 byte datagrams

Setting multicast TTL to 32

UDP buffer size: 224 KByte (default)

------------------------------------------------------------

[ 3] local 10.224.159.146 port 54457 connected with 224.1.1.1 port 5001

[ ID] Interval Transfer Bandwidth

[ 3] 0.0- 1.0 sec 129 KBytes 1.06 Mbits/sec

[ 3] 1.0- 2.0 sec 128 KBytes 1.05 Mbits/sec

[ 3] 2.0- 3.0 sec 128 KBytes 1.05 Mbits/sec

[ 3] 0.0- 3.0 sec 386 KBytes 1.05 Mbits/sec

[ 3] Sent 269 datagrams
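
As a quick sanity check on the sender summary above, the totals can be recomputed from the datagram count and size. This is only a sketch of the arithmetic; it assumes that iperf's "KBytes" means 1024 bytes and "Mbits" means 10^6 bits, which is consistent with the numbers in the log.

```python
DATAGRAM_BYTES = 1470   # "Sending 1470 byte datagrams"
DATAGRAMS_SENT = 269    # "Sent 269 datagrams"
DURATION_SEC = 3.0      # "-t 3"


def summarize(datagrams: int, size: int, seconds: float):
    """Return (total bytes, KBytes, Mbits/sec) for a UDP run."""
    total_bytes = datagrams * size
    kbytes = total_bytes / 1024                        # assumption: 1 KByte = 1024 bytes
    mbits_per_sec = total_bytes * 8 / seconds / 1e6    # assumption: 1 Mbit = 10^6 bits
    return total_bytes, kbytes, mbits_per_sec


total, kbytes, rate = summarize(DATAGRAMS_SENT, DATAGRAM_BYTES, DURATION_SEC)
print(f"{total} bytes = {kbytes:.0f} KBytes, {rate:.2f} Mbits/sec")
# prints: 395430 bytes = 386 KBytes, 1.05 Mbits/sec
```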

On VM3, I did not join the multicast group, and I used tcpdump to capture packets:

[iyunv@VM3 ~]# netstat -g -n

IPv6/IPv4 Group Memberships

Interface RefCnt Group

--------------- ------ ---------------------

lo 1 224.0.0.1

eth0 1 224.0.0.1

[iyunv@VM3 ~]# tcpdump -i eth0 host 224.1.1.1

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), capture size 65535 bytes

04:45:59.213678 IP 10.224.159.146.54457 > 224.1.1.1.commplex-link: UDP, length 1470

04:45:59.224902 IP 10.224.159.146.54457 > 224.1.1.1.commplex-link: UDP, length 1470

04:45:59.236114 IP 10.224.159.146.54457 > 224.1.1.1.commplex-link: UDP, length 1470

04:45:59.247387 IP 10.224.159.146.54457 > 224.1.1.1.commplex-link: UDP, length 1470

04:45:59.258611 IP 10.224.159.146.54457 > 224.1.1.1.commplex-link: UDP, length 1470

04:45:59.269744 IP 10.224.159.146.54457 > 224.1.1.1.commplex-link: UDP, length 1470

04:45:59.281011 IP 10.224.159.146.54457 > 224.1.1.1.commplex-link: UDP, length 1470

...

On VM4, I did not join the multicast group, and I used tcpdump to capture packets:

[iyunv@VM4 ~]# netstat -g -n

IPv6/IPv4 Group Memberships

Interface RefCnt Group

--------------- ------ ---------------------

lo 1 224.0.0.1

eth0 1 224.0.0.1

[iyunv@VM4 ~]# tcpdump -i eth0 host 224.1.1.1

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), capture size 65535 bytes

We can see that VM2 and VM3 are in the same VLAN and on the same compute node, connected by Open vSwitch. The Open vSwitch used here does not support multicast (IGMP) snooping, so it floods all multicast packets as if they were broadcasts. As a result, VM3 receives the multicast packets even though it did not join the multicast group. At the same time, VM4 did not receive the multicast packets because it is on another compute node. The two compute nodes are connected to a physical switch that supports IGMP snooping, so it does not flood the multicast packets to other compute nodes.
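
The difference between flooding and IGMP snooping can be sketched with a toy model. Everything here is an illustrative assumption: the `Switch` class, the port names, and the `vlan820_receivers` helper are hypothetical, and the model ignores details of real snooping such as router ports and membership timeouts. It only reproduces the VLAN 820 behaviour observed in this test.

```python
from dataclasses import dataclass, field

GROUP = "224.1.1.1"


@dataclass
class Switch:
    name: str
    ports: list
    igmp_snooping: bool
    members: dict = field(default_factory=dict)   # group -> set of member ports

    def join(self, port: str, group: str) -> None:
        """Record that an IGMP report for `group` was seen on `port`."""
        self.members.setdefault(group, set()).add(port)

    def forward(self, in_port: str, group: str) -> list:
        """Return the ports that a frame for `group` is copied to."""
        if self.igmp_snooping:
            targets = self.members.get(group, set())
        else:
            targets = set(self.ports)   # no snooping: flood like a broadcast
        return sorted(targets - {in_port})


def vlan820_receivers(joined_vms: set) -> list:
    """VMs in VLAN 820 that see traffic sent by VM2."""
    ovs003 = Switch("ovs-cmp003", ["VM2", "VM3", "uplink"], igmp_snooping=False)
    ovs004 = Switch("ovs-cmp004", ["VM4", "uplink"], igmp_snooping=False)
    phys = Switch("phys", ["cmp003", "cmp004"], igmp_snooping=True)
    if "VM4" in joined_vms:
        phys.join("cmp004", GROUP)   # learned from VM4's IGMP report
    seen = []
    for port in ovs003.forward("VM2", GROUP):
        if port != "uplink":
            seen.append(port)
        elif "cmp004" in phys.forward("cmp003", GROUP):
            seen += [p for p in ovs004.forward("uplink", GROUP) if p != "uplink"]
    return sorted(seen)


print(vlan820_receivers(set()))      # ['VM3']
print(vlan820_receivers({"VM4"}))    # ['VM3', 'VM4']
```

With nobody joined, only VM3 sees the traffic, because its local switch floods. Once VM4 joins, the snooping physical switch forwards the group to the second compute node as well.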

Case #2:

VM2 sends multicast packets. VM4 joins the multicast group to see whether it can receive them.

Result and Log:

On VM2, I send multicast packets:

[iyunv@VM2 ~]# iperf -c 224.1.1.1 -u -T 32 -t 3 -i 1

------------------------------------------------------------

Client connecting to 224.1.1.1, UDP port 5001

Sending 1470 byte datagrams

Setting multicast TTL to 32

UDP buffer size: 224 KByte (default)

------------------------------------------------------------

[ 3] local 10.224.159.146 port 35844 connected with 224.1.1.1 port 5001

[ ID] Interval Transfer Bandwidth

[ 3] 0.0- 1.0 sec 129 KBytes 1.06 Mbits/sec

[ 3] 1.0- 2.0 sec 128 KBytes 1.05 Mbits/sec

[ 3] 2.0- 3.0 sec 128 KBytes 1.05 Mbits/sec

[ 3] 0.0- 3.0 sec 386 KBytes 1.05 Mbits/sec

[ 3] Sent 269 datagrams

On VM4, I receive the multicast packets:

[iyunv@VM4 ~]# iperf -s -u -B 224.1.1.1 -i 1

------------------------------------------------------------

Server listening on UDP port 5001

Binding to local address 224.1.1.1

Joining multicast group 224.1.1.1

Receiving 1470 byte datagrams

UDP buffer size: 224 KByte (default)

------------------------------------------------------------

[ 3] local 224.1.1.1 port 5001 connected with 10.224.159.146 port 35844

[ ID] Interval Transfer Bandwidth Jitter Lost/Total Datagrams

[ 3] 0.0- 1.0 sec 128 KBytes 1.05 Mbits/sec 0.027 ms 0/ 89 (0%)

[ 3] 1.0- 2.0 sec 128 KBytes 1.05 Mbits/sec 0.026 ms 0/ 89 (0%)

[ 3] 2.0- 3.0 sec 128 KBytes 1.05 Mbits/sec 0.018 ms 0/ 89 (0%)

[ 3] 0.0- 3.0 sec 386 KBytes 1.05 Mbits/sec 0.019 ms 0/ 269 (0%)

[iyunv@VM4 ~]# netstat -n -g

IPv6/IPv4 Group Memberships

Interface RefCnt Group

--------------- ------ ---------------------

lo 1 224.0.0.1

eth0 1 224.1.1.1

eth0 1 224.0.0.1

We can see that VM2 and VM4 are in the same VLAN but on different compute nodes. After VM4 joins the multicast group, VM2 sends the packets and VM4 receives them.
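
If you want to check group membership from a script rather than by reading the `netstat -g -n` output by eye, a small parser is enough. This is a sketch that assumes the three-column layout shown in the logs above; `joined_groups` is a hypothetical helper name, and the sample text mirrors the VM4 output.

```python
def joined_groups(netstat_output: str, interface: str = "eth0") -> set:
    """Return the groups listed for `interface` in `netstat -g -n` output."""
    groups = set()
    for line in netstat_output.splitlines():
        fields = line.split()
        # Data rows have three columns: interface, reference count, group.
        if len(fields) == 3 and fields[0] == interface and fields[1].isdigit():
            groups.add(fields[2])
    return groups


SAMPLE = """\
IPv6/IPv4 Group Memberships
Interface       RefCnt Group
--------------- ------ ---------------------
lo              1      224.0.0.1
eth0            1      224.1.1.1
eth0            1      224.0.0.1
"""

print("224.1.1.1" in joined_groups(SAMPLE))   # True
```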

VMs in different VLANs, using an external router

Case #3:

VM1 sends multicast packets. VM2 and VM4 do not join the multicast group; I use tcpdump to see whether they still receive the multicast packets.

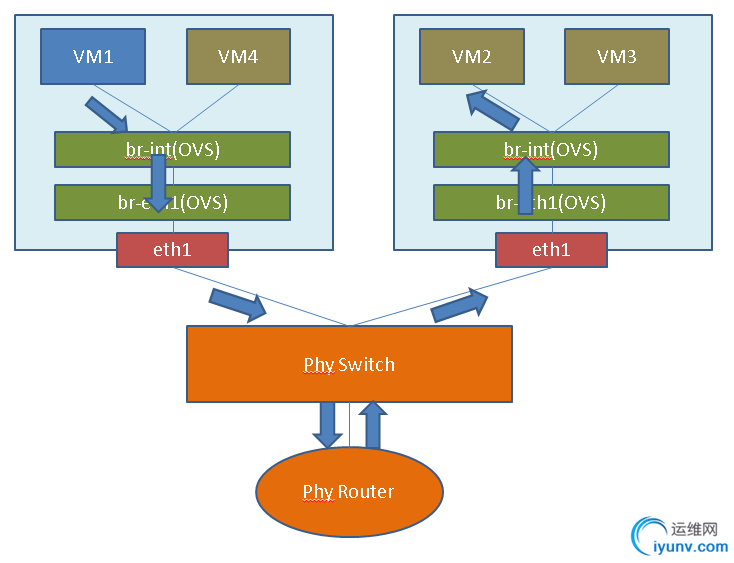

The multicast packet flow from VM1 to VM2 is VM1 -> OVS -> Phy Switch -> Phy Router -> Phy Switch -> OVS -> VM2, as follows:

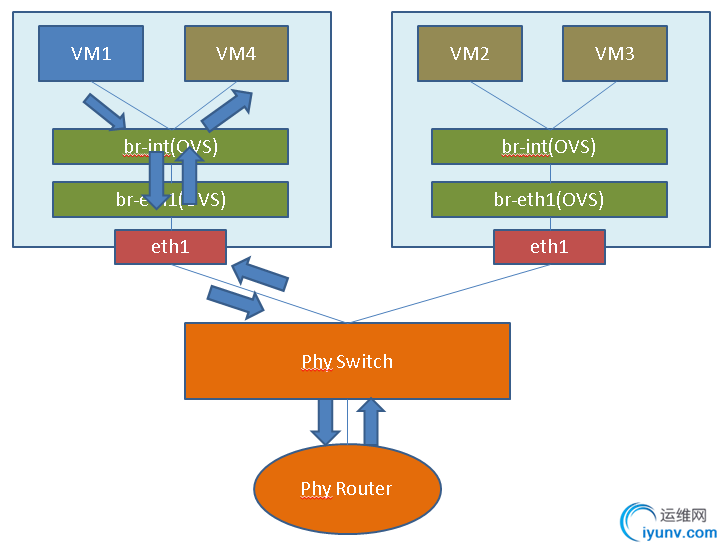

The multicast packet flow from VM1 to VM4 is VM1 -> OVS -> Phy Switch -> Phy Router -> Phy Switch -> OVS -> VM4.

Result and Log:

[iyunv@VM1 ~]# iperf -c 224.1.1.1 -u -T 32 -t 3 -i 1

------------------------------------------------------------

Client connecting to 224.1.1.1, UDP port 5001

Sending 1470 byte datagrams

Setting multicast TTL to 32

UDP buffer size: 224 KByte (default)

------------------------------------------------------------

[ 3] local 10.224.148.94 port 60820 connected with 224.1.1.1 port 5001

[ ID] Interval Transfer Bandwidth

[ 3] 0.0- 1.0 sec 129 KBytes 1.06 Mbits/sec

[ 3] 1.0- 2.0 sec 128 KBytes 1.05 Mbits/sec

[ 3] 2.0- 3.0 sec 128 KBytes 1.05 Mbits/sec

[ 3] 0.0- 3.0 sec 386 KBytes 1.05 Mbits/sec

[ 3] Sent 269 datagrams

[iyunv@VM2 ~]# netstat -n -g

IPv6/IPv4 Group Memberships

Interface RefCnt Group

--------------- ------ ---------------------

lo 1 224.0.0.1

eth0 1 224.0.0.1

[iyunv@VM2 ~]# tcpdump -i eth0 host 224.1.1.1

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), capture size 65535 bytes

[iyunv@VM4 ~]# netstat -g -n

IPv6/IPv4 Group Memberships

Interface RefCnt Group

--------------- ------ ---------------------

lo 1 224.0.0.1

eth0 1 224.0.0.1

[iyunv@VM4 ~]# tcpdump -i eth0 host 224.1.1.1

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on eth0, link-type EN10MB (Ethernet), capture size 65535 bytes

Case #4:

VM1 sends multicast packets. VM2 joins the multicast group to see whether it can receive them.

Result and Log:

[iyunv@VM1 ~]# iperf -c 224.1.1.1 -u -T 32 -t 3 -i 1

------------------------------------------------------------

Client connecting to 224.1.1.1, UDP port 5001

Sending 1470 byte datagrams

Setting multicast TTL to 32

UDP buffer size: 224 KByte (default)

------------------------------------------------------------

[ 3] local 10.224.148.94 port 41301 connected with 224.1.1.1 port 5001

[ ID] Interval Transfer Bandwidth

[ 3] 0.0- 1.0 sec 129 KBytes 1.06 Mbits/sec

[ 3] 1.0- 2.0 sec 128 KBytes 1.05 Mbits/sec

[ 3] 2.0- 3.0 sec 128 KBytes 1.05 Mbits/sec

[ 3] 0.0- 3.0 sec 386 KBytes 1.05 Mbits/sec

[ 3] Sent 269 datagrams

[iyunv@VM2 ~]# iperf -s -u -B 224.1.1.1 -i 1

------------------------------------------------------------

Server listening on UDP port 5001

Binding to local address 224.1.1.1

Joining multicast group 224.1.1.1

Receiving 1470 byte datagrams

UDP buffer size: 224 KByte (default)

------------------------------------------------------------

[ 3] local 224.1.1.1 port 5001 connected with 10.224.148.94 port 41301

[ ID] Interval Transfer Bandwidth Jitter Lost/Total Datagrams

[ 3] 0.0- 1.0 sec 128 KBytes 1.05 Mbits/sec 0.029 ms 0/ 89 (0%)

[ 3] 1.0- 2.0 sec 128 KBytes 1.05 Mbits/sec 0.031 ms 0/ 89 (0%)

[ 3] 2.0- 3.0 sec 128 KBytes 1.05 Mbits/sec 0.025 ms 0/ 89 (0%)

[ 3] 0.0- 3.0 sec 386 KBytes 1.05 Mbits/sec 0.025 ms 0/ 269 (0%)

[iyunv@VM2 ~]# netstat -g -n

IPv6/IPv4 Group Memberships

Interface RefCnt Group

--------------- ------ ---------------------

lo 1 224.0.0.1

eth0 1 224.1.1.1

eth0 1 224.0.0.1

Conclusion:

1. Open vSwitch does not support IGMP snooping, so if a VM on one compute node sends multicast packets, every VM on the same compute node and in the same VLAN receives them. This may cost some performance. As far as I know, the Cisco N1K supports IGMP snooping, so using the N1K could give better performance here.

2. If the VMs use an external router, we need to configure the external router to support IGMP; in that case, multicast works well in OpenStack. If you use neutron-l3-agent, which uses iptables and namespaces to simulate a virtual router, multicast is not supported at this time.
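
The router behaviour seen in Cases #3 and #4 can be summarised in a few lines. This is a deliberately simplified sketch, not a model of any real router: `vlans_to_forward` is a hypothetical function, and it assumes the router forwards a group into another VLAN only when multicast support is enabled and some host in that VLAN has joined.

```python
GROUP = "224.1.1.1"


def vlans_to_forward(multicast_enabled: bool, members_by_vlan: dict,
                     group: str, source_vlan: int) -> list:
    """Return the VLANs the router copies traffic for `group` into."""
    if not multicast_enabled:
        return []   # a router without IGMP/multicast support forwards nothing
    return sorted(
        vlan for vlan, groups in members_by_vlan.items()
        if vlan != source_vlan and group in groups
    )


# Case #3: VM1 (VLAN 850) sends, nobody in VLAN 820 has joined.
print(vlans_to_forward(True, {820: set()}, GROUP, 850))      # []
# Case #4: VM2 in VLAN 820 has joined the group.
print(vlans_to_forward(True, {820: {GROUP}}, GROUP, 850))    # [820]
# Router without multicast support: nothing crosses VLANs.
print(vlans_to_forward(False, {820: {GROUP}}, GROUP, 850))   # []
```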
