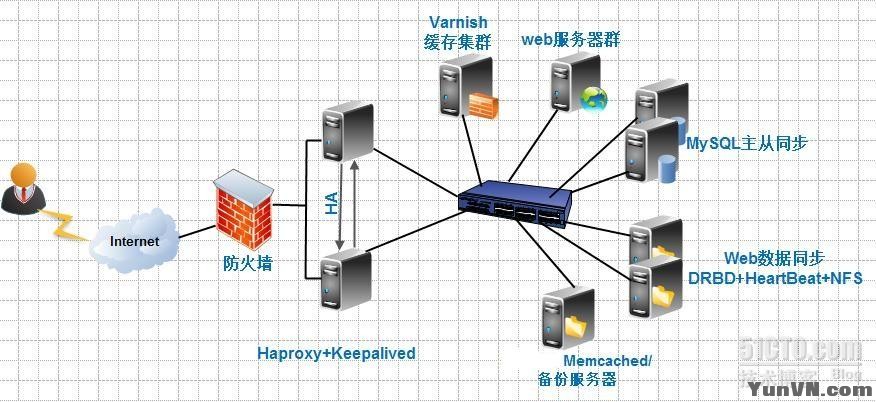

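Enterprise Web High-Availability Cluster in Practice: HAProxy  By: opsren 2012.6.15

Domain used in this lab environment: www.opsren.com

The architecture diagram is shown below:

This walkthrough only covers how to build the environment step by step; it does not go into how each piece of software works internally.

System initialization, see: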

http://linuxops.blog./2238445/841849

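Part 1: haproxy + keepalived deployment

Perform the following on 192.168.8.10 and 192.168.8.11.

HAProxy is load-balancing software for Linux. It provides thorough server health checks and session persistence, performs well, and can distribute both TCP and HTTP connections.

Download the software: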

[iyunv@haproxy1 ~]# cd /usr/local/src

[iyunv@haproxy1 src]# wget http://haproxy.1wt.eu/download/1.4/src/haproxy-1.4.19.tar.gz

[iyunv@haproxy1 src]# wget http://keepalived.org/software/keepalived-1.2.2.tar.gz

I. Install keepalived (the master and backup differ slightly; see the comments in the configuration)

[iyunv@haproxy1 ~]# cd /usr/local/src

[iyunv@haproxy1 src]# tar zxf keepalived-1.2.2.tar.gz

[iyunv@haproxy1 src]# cd keepalived-1.2.2

[iyunv@haproxy1 keepalived-1.2.2]# ./configure

[iyunv@haproxy1 keepalived-1.2.2]# make

[iyunv@haproxy1 keepalived-1.2.2]# make install

[iyunv@haproxy1 keepalived-1.2.2]# cp /usr/local/etc/rc.d/init.d/keepalived /etc/rc.d/init.d/

[iyunv@haproxy1 keepalived-1.2.2]# cp /usr/local/etc/sysconfig/keepalived /etc/sysconfig/

[iyunv@haproxy1 keepalived-1.2.2]# mkdir /etc/keepalived

[iyunv@haproxy1 keepalived-1.2.2]# cp /usr/local/sbin/keepalived /usr/sbin/

[iyunv@haproxy1 keepalived-1.2.2]# vi /etc/keepalived/keepalived.conf

Add the following content:

- ! Configuration File for keepalived

-

- global_defs {

- notification_email {

- qzjzhijun@163.com

- }

- notification_email_from qzjzhijun@163.com

- smtp_server smtp.163.com

- # smtp_connect_timeout 30

- router_id LVS_DEVEL

- }

-

- # VIP1

- vrrp_instance VI_1 {

- state MASTER # change MASTER to BACKUP on the backup server

-

- interface eth0

- lvs_sync_daemon_inteface eth0

- virtual_router_id 51

- priority 100 # change 100 to 90 on the backup server

- advert_int 5

- authentication {

- auth_type PASS

- auth_pass 1111

- }

- virtual_ipaddress {

- 192.168.8.12

- }

- }

-

- virtual_server 192.168.8.12 80 {

- delay_loop 6 # (poll realserver status every 6 seconds)

- lb_algo wlc # (LVS scheduling algorithm)

- lb_kind DR # (Direct Route)

- persistence_timeout 60 # (connections from the same IP go to the same realserver for 60 seconds)

- protocol TCP # (check realserver status over TCP)

-

- real_server 192.168.8.20 80 {

- weight 100 # (weight)

- TCP_CHECK {

- connect_timeout 10 # (time out after 10 seconds without a response)

- nb_get_retry 3

- delay_before_retry 3

- connect_port 80

- }

- }

- real_server 192.168.8.21 80 {

- weight 100

- TCP_CHECK {

- connect_timeout 10

- nb_get_retry 3

- delay_before_retry 3

- connect_port 80

- }

- }

- }
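Nested blocks like the ones above are easy to leave unclosed. Below is a minimal sketch of a brace counter that can be run before starting keepalived. The function name check_braces is my own and is not part of keepalived; it only counts braces and does not validate the configuration in any other way.

```shell
#!/bin/bash
# check_braces FILE
# Counts '{' and '}' in FILE, ignoring everything after a '#' or '!'
# comment character. Prints "balanced" and returns 0 when the counts
# match; otherwise prints both counts and returns 1.
# (Hypothetical helper, not shipped with keepalived.)
check_braces() {
    local file=$1 stripped opens closes
    # Drop comments so braces inside them are not counted.
    stripped=$(sed 's/[#!].*$//' "$file")
    opens=$(printf '%s\n' "$stripped" | grep -o '{' | wc -l)
    closes=$(printf '%s\n' "$stripped" | grep -o '}' | wc -l)
    # Arithmetic expansion strips any padding that wc adds.
    opens=$((opens)); closes=$((closes))
    if [ "$opens" -eq "$closes" ]; then
        echo "balanced"
    else
        echo "unbalanced: $opens opening, $closes closing"
        return 1
    fi
}
```

Usage: check_braces /etc/keepalived/keepalived.conf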

II. Install haproxy (identical on master and backup)

[iyunv@haproxy1 ~]# cd /usr/local/src

[iyunv@haproxy1 src]# tar zxf haproxy-1.4.19.tar.gz

[iyunv@haproxy1 src]# cd haproxy-1.4.19

[iyunv@haproxy1 haproxy-1.4.19]# make TARGET=linux26 PREFIX=/usr/local/haproxy

[iyunv@haproxy1 haproxy-1.4.19]# make install PREFIX=/usr/local/haproxy

Create the configuration file

[iyunv@haproxy1 haproxy-1.4.19]# cd /usr/local/haproxy

[iyunv@haproxy1 haproxy]# vi haproxy.conf

Add the following content:

- global

- maxconn 4096

- chroot /usr/local/haproxy

- uid 188

- gid 188

- daemon

- quiet

- nbproc 2

- pidfile /usr/local/haproxy/haproxy.pid

- defaults

- log global

- mode http

- option httplog

- option dontlognull

- log 127.0.0.1 local3

- retries 3

- option redispatch

- maxconn 20000

- contimeout 5000

- clitimeout 50000

- srvtimeout 50000

- listen www.opsren.com 0.0.0.0:80

- stats uri /status

- stats realm Haproxy\ statistics

- stats auth admin:admin

- balance source

- option httpclose

- option forwardfor

- #option httpchk HEAD /index.php HTTP/1.0

- server cache1_192.168.8.20 192.168.8.20:80 cookie app1inst1 check inter 2000 rise 2 fall 5

- server cache2_192.168.8.21 192.168.8.21:80 cookie app1inst2 check inter 2000 rise 2 fall 5

Alternatively, use the following layout:

- global

- log 127.0.0.1 local3

- maxconn 4096

- chroot /usr/local/haproxy

- uid 188

- gid 188

- daemon

- quiet

- nbproc 2

- pidfile /usr/local/haproxy/haproxy.pid

- defaults

- log global

- mode http

- retries 3

- option redispatch

- maxconn 20000

- stats enable

- stats hide-version

- stats uri /status

- contimeout 5000

- clitimeout 50000

- srvtimeout 50000

-

- frontend www.opsren.com

- bind *:80

- mode http

- option httplog

- log global

- default_backend php_opsren

-

- backend php_opsren

- balance source

- #option httpclose

- #option forwardfor

- server cache1_192.168.8.20 192.168.8.20:80 cookie app1inst1 check inter 2000 rise 2 fall 5

- server cache2_192.168.8.21 192.168.8.21:80 cookie app1inst2 check inter 2000 rise 2 fall 5

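Some readers have asked what the difference between these two layouts is. So far, the main difference I have found is that the second layout brings the two benefits below.

Version 1.3 introduced the frontend/backend model: a frontend matches rules against any part of the HTTP request headers and then directs the request to the appropriate backend. This shows up mainly in two ways:

1. HAProxy's regular expressions can be used to separate static and dynamic content.

2. Different kinds of requests can be forwarded to different server pools, for example PHP requests versus JSP requests.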
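To make the two points above concrete, here is a rough sketch that writes a small test configuration in which a frontend sends static files to one pool and everything else to another. The ACL name is_static and the pool name static_pool are made up for illustration, and the split of the two cache servers across the pools is only an assumption; the configuration is written to a temporary file so nothing in /usr/local/haproxy is touched.

```shell
#!/bin/bash
# Write an illustrative frontend/backend configuration to a temp file.
# is_static and static_pool are hypothetical names, not from the setup above.
conf=$(mktemp)
cat > "$conf" <<'EOF'
global
    maxconn 4096

defaults
    mode http
    contimeout 5000
    clitimeout 50000
    srvtimeout 50000

frontend www.opsren.com
    bind *:80
    # Requests whose path ends in one of these suffixes count as static.
    acl is_static path_end .css .js .png .jpg .gif
    use_backend static_pool if is_static
    default_backend php_opsren

backend static_pool
    balance roundrobin
    server cache1_192.168.8.20 192.168.8.20:80 check inter 2000 rise 2 fall 5

backend php_opsren
    balance source
    server cache2_192.168.8.21 192.168.8.21:80 check inter 2000 rise 2 fall 5
EOF

# Syntax-check the file only when a haproxy binary is on the PATH.
if command -v haproxy >/dev/null 2>&1; then
    haproxy -c -f "$conf" || echo "config check failed"
fi
echo "wrote $conf"
```

With a single listen section this kind of per-request routing is much harder to express, which is the practical reason to prefer the second layout.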

III. Start haproxy

Start haproxy normally:

[iyunv@haproxy1 ~]# /usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/haproxy.conf

Stop it:

[iyunv@haproxy1 ~]# pkill -9 haproxy

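Starting it this way is not very convenient, so we can define an alias:

alias haproxyd='/usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/haproxy.conf'

The alias can also be written to /root/.bashrc or /etc/bashrc.

A start/stop script can be used as well: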

[iyunv@haproxy1 ~]# cat /etc/init.d/haproxy

- #!/bin/bash

- # chkconfig: 35 85 15

- # description: HAProxy is a TCP/HTTP reverse proxy which is particularly suited for high availability environments.

- # Source function library.

- if [ -f /etc/init.d/functions ]; then

- . /etc/init.d/functions

- elif [ -f /etc/rc.d/init.d/functions ] ; then

- . /etc/rc.d/init.d/functions

- else

- exit 0

- fi

-

- # Source networking configuration.

- . /etc/sysconfig/network

-

- # Check that networking is up.

- [ "${NETWORKING}" = "no" ] && exit 0

-

- [ -f /usr/local/haproxy/haproxy.conf ] || exit 1

-

- RETVAL=0

-

- start() {

- /usr/local/haproxy/sbin/haproxy -c -q -f /usr/local/haproxy/haproxy.conf

- if [ $? -ne 0 ]; then

- echo "Errors found in configuration file."

- return 1

- fi

-

- echo -n "Starting HAproxy: "

- daemon /usr/local/haproxy/sbin/haproxy -D -f /usr/local/haproxy/haproxy.conf -p /var/run/haproxy.pid

- RETVAL=$?

- echo

- [ $RETVAL -eq 0 ] && touch /var/lock/subsys/haproxy

- return $RETVAL

- }

-

- stop() {

- echo -n "Shutting down HAproxy: "

- killproc haproxy -USR1

- RETVAL=$?

- echo

- [ $RETVAL -eq 0 ] && rm -f /var/lock/subsys/haproxy

- [ $RETVAL -eq 0 ] && rm -f /var/run/haproxy.pid

- return $RETVAL

- }

-

- restart() {

- /usr/local/haproxy/sbin/haproxy -c -q -f /usr/local/haproxy/haproxy.conf

- if [ $? -ne 0 ]; then

- echo "Errors found in configuration file, check it with 'haproxy check'."

- return 1

- fi

- stop

- start

- }

-

- check() {

- /usr/local/haproxy/sbin/haproxy -c -q -V -f /usr/local/haproxy/haproxy.conf

- }

-

- rhstatus() {

- status haproxy

- }

-

- condrestart() {

- [ -e /var/lock/subsys/haproxy ] && restart || :

- }

-

- # See how we were called.

- case "$1" in

- start)

- start

- ;;

- stop)

- stop

- ;;

- restart)

- restart

- ;;

- reload)

- restart

- ;;

- condrestart)

- condrestart

- ;;

- status)

- rhstatus

- ;;

- check)

- check

- ;;

- *)

- echo $"Usage: haproxy {start|stop|restart|reload|condrestart|status|check}"

- RETVAL=1

- esac

-

- exit $RETVAL

chmod +x /etc/init.d/haproxy

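With this in place, haproxy can be started and stopped with: /etc/init.d/haproxy start|restart|stop

Those are the ways to start and stop haproxy; choose whichever you prefer.

The haproxy + keepalived layer is now fully deployed. Next comes the varnish cluster.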

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

Part 2: varnish cluster deployment

Perform the following on 192.168.8.20 and 192.168.8.21.

I. Install the varnish servers

PCRE and a few other dependencies must be installed before varnish.

[iyunv@varnish1 ~]# yum install -y automake autoconf libtool ncurses-devel libxslt groff pcre-devel pkgconfig

Download varnish (this setup uses 3.0.2, the latest version at the time of writing)

[iyunv@varnish1 ~]# wget http://repo.varnish-cache.org/source/varnish-3.0.2.tar.gz

[iyunv@varnish1 ~]# tar zxvf varnish-3.0.2.tar.gz

[iyunv@varnish1 ~]# cd varnish-3.0.2

[iyunv@varnish1 varnish-3.0.2]# ./configure --prefix=/usr/local/varnish

[iyunv@varnish1 varnish-3.0.2]# make && make install

II. Create the configuration file

Set up hosts on every node

[iyunv@varnish1 ~]# vi /etc/hosts

Add the following content:

192.168.8.30 www.opsren.com

192.168.8.31 www.opsren.com

[iyunv@varnish1 ~]# groupadd www

[iyunv@varnish1 ~]# useradd www -g www -s /sbin/nologin

[iyunv@varnish1 ~]# mkdir -p /data/varnish/{cache,logs}

[iyunv@varnish1 ~]# chmod +w /data/varnish/{cache,logs}

[iyunv@varnish1 ~]# chown -R www:www /data/varnish/{cache,logs}

[iyunv@varnish1 ~]# vim /usr/local/varnish/etc/varnish/vcl.conf

- #Cache for opsren sites

- #backend vhost

- backend opsren1 {

- .host = "192.168.8.30";

- .port = "80";

- }

-

- backend opsren2 {

- .host = "192.168.8.31";

- .port = "80";

- }

- director webserver random {

- {.backend = opsren1; .weight = 5; }

- {.backend = opsren2; .weight = 8; }

- }

- #acl

- acl purge {

- "localhost";

- "127.0.0.1";

- "192.168.0.0"/24;

- }

- sub vcl_recv {

- if (req.http.Accept-Encoding) {

- if (req.url ~ "\.(jpg|png|gif|jpeg|flv)$" ) {

- remove req.http.Accept-Encoding;

- remove req.http.Cookie;

- } else if (req.http.Accept-Encoding ~ "gzip") {

- set req.http.Accept-Encoding = "gzip";

- } else if (req.http.Accept-Encoding ~ "deflate") {

- set req.http.Accept-Encoding = "deflate";

- } else {

- remove req.http.Accept-Encoding;

- }

- }

- if (req.http.host ~ "(.*)opsren.com") {

- set req.backend = webserver;

- }

- else {

- error 404 "This website is maintaining or not exist!";

- }

- if (req.request == "PURGE") {

- if (!client.ip ~purge) {

- error 405 "Not Allowed";

- }

-

- return(lookup);

- }

-

- if (req.request == "GET"&& req.url ~ "\.(png|gif|jpeg|jpg|ico|swf|css|js|html|htm|gz|tgz|bz2|tbz|mp3|ogg|mp4|flv|f4v|pdf)$") {

- unset req.http.cookie;

- }

-

- if (req.request =="GET"&&req.url ~ "\.php($|\?)"){

- return (pass);

- }

- # if (req.restarts == 0) {

- if (req.http.x-forwarded-for) {

- set req.http.X-Forwarded-For =

- req.http.X-Forwarded-For + ", " + client.ip;

- } else {

- set req.http.X-Forwarded-For = client.ip;

- }

- # }

-

- if (req.request != "GET" &&

- req.request != "HEAD" &&

- req.request != "PUT" &&

- req.request != "POST" &&

- req.request != "TRACE" &&

- req.request != "OPTIONS" &&

- req.request != "DELETE") {

- return (pipe);

- }

-

- if (req.request != "GET" && req.request != "HEAD") {

- return (pass);

- }

- if (req.http.Authorization) {

- return (pass);

- }

- return (lookup);

- }

-

- sub vcl_hash {

- hash_data(req.url);

- if (req.http.host) {

- hash_data(req.http.host);

- } else {

- hash_data(server.ip);

- }

- return (hash);

- }

-

- sub vcl_hit {

- if (req.request == "PURGE") {

- set obj.ttl = 0s;

- error 200 "Purged";

- }

- return (deliver);

- }

- sub vcl_fetch {

- if (req.url ~ "\.(jpeg|jpg|png|gif|gz|tgz|bz2|tbz|mp3|ogg|ico|swf|flv|dmg|js|css|html|htm)$") {

- set beresp.ttl = 2d;

- set beresp.http.expires = beresp.ttl;

- set beresp.http.Cache-Control = "max-age=172800";

- unset beresp.http.set-cookie;

- }

- if (req.url ~ "\.(dmg|js|css|html|htm)$") {

- set beresp.do_gzip = true;

- }

- if (beresp.status == 503) {

- set beresp.saintmode = 15s;

- }

- }

- sub vcl_deliver {

- set resp.http.x-hits = obj.hits ;

- if (obj.hits > 0) {

- set resp.http.X-Cache = "HIT You!";

- } else {

- set resp.http.X-Cache = "MISS Me!";

- }

- }

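That is the whole configuration file. For what each statement does, refer to the official manual.

One thing to note: if a backend's .host is given as a hostname, that name must resolve (for example through /etc/hosts); otherwise startup fails with an error like this: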

[iyunv@varnish1 varnish]# service varnish restart

Stopping varnish HTTP accelerator: Starting varnish HTTP accelerator: Message from VCC-compiler:

Backend host '"www.opsren.com"' could not be resolved to an IP address:

Name or service not known

(Sorry if that error message is gibberish.)

('input' Line 4 Pos 9)

.host = "www.opsren.com";

--------################--

In backend specification starting at:

('input' Line 3 Pos 1)

backend opsren {

#######-----------

Running VCC-compiler failed, exit 1

VCL compilation failed

III. Start varnish

Start varnish (two methods are shown)

Method 1:

On the .20 server:

[iyunv@varnish1 varnish]# /usr/local/varnish/sbin/varnishd -u www -g www -f /usr/local/varnish/etc/varnish/vcl.conf -a 192.168.8.20:80 -s file,/data/varnish/cache/varnish_cache.data,1G -w 1024,51200,10 -t 3600 -T 192.168.8.20:3000 &

Start it at boot

[iyunv@varnish1 varnish]# echo "/usr/local/varnish/sbin/varnishd -u www -g www -f /usr/local/varnish/etc/varnish/vcl.conf -a 192.168.8.20:80 -s file,/data/varnish/cache/varnish_cache.data,1G -w 1024,51200,10 -t 3600 -T 192.168.8.20:3000 &" >> /etc/rc.local

On the .21 server:

[iyunv@varnish2 varnish]# /usr/local/varnish/sbin/varnishd -u www -g www -f /usr/local/varnish/etc/varnish/vcl.conf -a 192.168.8.21:80 -s file,/data/varnish/cache/varnish_cache.data,1G -w 1024,51200,10 -t 3600 -T 192.168.8.21:3000 &

[iyunv@varnish2 varnish]# echo "/usr/local/varnish/sbin/varnishd -u www -g www -f /usr/local/varnish/etc/varnish/vcl.conf -a 192.168.8.21:80 -s file,/data/varnish/cache/varnish_cache.data,1G -w 1024,51200,10 -t 3600 -T 192.168.8.21:3000 &" >> /etc/rc.local

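Key parameters:

-u  user to run as

-g  group to run as

-f  the VCL configuration file varnishd should use

-a  the address and port on which varnish listens for HTTP requests (here port 80 on the node's own IP)

-s  storage type and size. 1G means a 1 GB cache file. A percentage also works; for example, 80% means 80% of the disk space.

-w  three values: minimum threads, maximum threads, and the thread timeout

-T  management address and port, used mainly for clearing the cache

-p client_http11=on  enable HTTP/1.1 support

-P (capital P) /usr/local/varnish/var/varnish.pid  location of the PID file, used for managing the process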
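The two nodes run the same command and differ only in the IP address passed to -a and -T. As a small sketch, the command line can be built from that one value; the function name varnishd_cmd is my own, and it only prints the command rather than running it.

```shell
#!/bin/bash
# varnishd_cmd IP
# Prints the varnishd command line used in this article for the node
# whose address is IP. (Hypothetical helper; it prints, it does not run.)
varnishd_cmd() {
    local ip=$1
    echo "/usr/local/varnish/sbin/varnishd -u www -g www" \
         "-f /usr/local/varnish/etc/varnish/vcl.conf" \
         "-a ${ip}:80" \
         "-s file,/data/varnish/cache/varnish_cache.data,1G" \
         "-w 1024,51200,10 -t 3600 -T ${ip}:3000"
}

# Show the command for each cache node.
varnishd_cmd 192.168.8.20
varnishd_cmd 192.168.8.21
```

Printing the command first makes it easy to review before appending it to /etc/rc.local.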

Start logging, which makes it easier to analyze site traffic

[iyunv@varnish1 varnish]# /usr/local/varnish/bin/varnishncsa -w /data/varnish/logs/varnish.log &

[iyunv@varnish1 varnish]# echo "/usr/local/varnish/bin/varnishncsa -w /data/varnish/logs/varnish.log &" >> /etc/rc.local

Parameter: -w sets the file that the varnish access log is written to

Method 2:

Varnish can also be added as a system service for day-to-day convenience.

[iyunv@varnish1 varnish]# cat /etc/init.d/varnish

- #!/bin/sh

- # varnish Control the varnish HTTP accelerator

- # chkconfig: - 90 10

- # description: Varnish is a high-perfomance HTTP accelerator

- # processname: varnishd

- # config: /usr/local/varnish/etc/varnish.conf

- # pidfile: /var/run/varnishd.pid

- ### BEGIN INIT INFO

- # Provides: varnish

- # Required-Start: $network $local_fs $remote_fs

- # Required-Stop: $network $local_fs $remote_fs

- # Should-Start: $syslog

- # Short-Description: start and stop varnishd

- # Description: Varnish is a high-perfomance HTTP accelerator

- ### END INIT INFO

- # Source function library.

-

- start() {

- echo -n "Starting varnish HTTP accelerator: "

- # Open files (usually 1024, which is way too small for varnish)

- ulimit -n ${NFILES:-131072}

-

- # Varnish wants to lock shared memory log in memory.

- ulimit -l ${MEMLOCK:-82000}

- /usr/local/varnish/sbin/varnishd -u www -g www -f /usr/local/varnish/etc/varnish/vcl.conf -a 192.168.8.20:80 -s file,/data/varnish/cache/varnish_cache.data,1G -w 1024,51200,10 -t 3600 -T 192.168.8.20:3000 &

- sleep 15

- /usr/local/varnish/bin/varnishncsa -w /data/varnish/logs/varnish.log &

-

- }

-

- stop() {

- echo -n "Stopping varnish HTTP accelerator: "

- pkill -9 varnish

- }

-

- restart() {

- stop

- start

- }

-

- reload() {

- /etc/init.d/varnish_reload.sh

- }

-

- # See how we were called.

- case "$1" in

- start)

- start && exit 0

- ;;

- stop)

- stop || exit 0

- ;;

- restart)

- restart

- ;;

- reload)

- reload || exit 0

- ;;

- *)

- echo "Usage: $0 {start|stop|restart|reload}"

-

- exit 2

- esac

-

- exit $?

Make it executable

[iyunv@varnish1 varnish]# chmod +x /etc/init.d/varnish

Add it as a system service and enable it at boot

[iyunv@varnish1 varnish]# chkconfig --add varnish

[iyunv@varnish1 varnish]# chkconfig varnish on

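Note: I found that the script copied from the source package could not write logs, so the script above is one I wrote myself. To use the control script from the source package instead, do this:

cp /root/varnish-3.0.2/redhat/varnish.initrc /etc/init.d/varnish

The control script copied from the source package needs a startup configuration file. An example follows:

[iyunv@varnish1 ~]# vi /usr/local/varnish/etc/varnish.conf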

- # Configuration file for varnish

- # /etc/init.d/varnish expects the variable $DAEMON_OPTS to be set from this

- # shell script fragment.

- # Maximum number of open files (for ulimit -n)

- NFILES=131072

- # Locked shared memory (for ulimit -l)

- # Default log size is 82MB + header

- MEMLOCK=1000000

- ## Alternative 2, Configuration with VCL

- DAEMON_OPTS="-a 192.168.8.20:80 \

- -f /usr/local/varnish/etc/varnish/vcl.conf \

- -T 192.168.8.20:3000 \

- -u www -g www \

- -n /data/varnish/cache \

- -s file,/data/varnish/cache/varnish_cache.data,1G"

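My modified script above does not need this configuration file.

IV. Graceful reload of varnish

If varnish is restarted with /etc/init.d/varnish restart, the entire cache is lost, which puts heavy load on the origin servers and can even bring them down. When the VCL configuration has changed and needs to take effect, reload it gracefully instead.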

[iyunv@varnish1 ~]# cat /etc/init.d/varnish_reload.sh

- #!/bin/bash

- #Reload a varnish config

- FILE="/usr/local/varnish/etc/varnish/vcl.conf"

- #Hostname and management port

- #(defined in /etc/default/varnish or on startup)

- HOSTPORT="192.168.8.20:3000"

- NOW=`date +%s`

- BIN_DIR=/usr/local/varnish/bin

- error()

- {

- echo 1>&2 "Failed to reload $FILE."

- exit 1

- }

-

- $BIN_DIR/varnishadm -T $HOSTPORT vcl.load reload$NOW $FILE || error

- sleep 0.1

- $BIN_DIR/varnishadm -T $HOSTPORT vcl.use reload$NOW || error

- sleep 0.1

- echo Current configs:

- $BIN_DIR/varnishadm -T $HOSTPORT vcl.list

Make it executable

[iyunv@varnish1 ~]# chmod +x /etc/init.d/varnish_reload.sh

V. varnish log rotation

[iyunv@varnish1 ~]# vi /root/cut_varnish_log.sh

- #!/bin/bash

- logs_path=/data/varnish/logs

- date=$(date -d "yesterday" +"%Y-%m-%d")

- pkill -9 varnishncsa

- mkdir -p ${logs_path}/$(date -d "yesterday" +"%Y")/$(date -d "yesterday" +"%m")/

- mv /data/varnish/logs/varnish.log ${logs_path}/$(date -d "yesterday" +"%Y")/$(date -d "yesterday" +"%m")/varnish-${date}.log

- /usr/local/varnish/bin/varnishncsa -w /data/varnish/logs/varnish.log &

[iyunv@varnish1 ~]# chmod 755 /root/cut_varnish_log.sh

Use cron to run the log rotation script every day at 00:00:

[iyunv@varnish1 ~]# echo "0 0 * * * root /root/cut_varnish_log.sh" >> /etc/crontab
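The rotation script files each day's log under a year/month directory. Here is a minimal sketch of just that path calculation, pulled out so it can be checked without touching any logs. The function name archive_path is my own, and it takes the date as an argument instead of calling date -d "yesterday".

```shell
#!/bin/bash
# archive_path LOGS_DIR YYYY-MM-DD
# Prints where the rotation script would file the log for the given day:
# LOGS_DIR/YYYY/MM/varnish-YYYY-MM-DD.log
# (Hypothetical helper that mirrors the mkdir/mv paths in cut_varnish_log.sh.)
archive_path() {
    local logs_path=$1 day=$2
    local year=${day%%-*}     # text before the first '-'
    local rest=${day#*-}      # text after the first '-'
    local month=${rest%%-*}   # text before the next '-'
    echo "${logs_path}/${year}/${month}/varnish-${day}.log"
}

archive_path /data/varnish/logs 2012-06-14
# prints /data/varnish/logs/2012/06/varnish-2012-06-14.log
```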

VI. Kernel tuning for varnish

[iyunv@varnish1 ~]# vi /etc/sysctl.conf

- net.ipv4.tcp_syncookies = 1

- net.ipv4.tcp_tw_reuse = 1

- net.ipv4.tcp_tw_recycle = 1

- #net.ipv4.tcp_fin_timeout = 30

- #net.ipv4.tcp_keepalive_time = 300

- net.ipv4.ip_local_port_range = 1024 65000

- net.ipv4.tcp_max_tw_buckets = 5000

- net.ipv4.tcp_max_syn_backlog = 65536

- net.core.netdev_max_backlog = 32768

- net.core.somaxconn = 32768

- net.core.wmem_default = 8388608

- net.core.rmem_default = 8388608

- net.core.rmem_max = 16777216

- net.core.wmem_max = 16777216

- net.ipv4.tcp_timestamps = 0

- net.ipv4.tcp_synack_retries = 2

- net.ipv4.tcp_syn_retries = 2

- #net.ipv4.tcp_tw_len = 1

- net.ipv4.tcp_mem = 94500000 915000000 927000000

- net.ipv4.tcp_max_orphans = 3276800

[iyunv@varnish1 ~]# sysctl -p

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

Clear the entire cache

/usr/local/varnish/bin/varnishadm -T 192.168.8.20:3000 ban.url '.*'

Clear everything cached under the image directory

/usr/local/varnish/bin/varnishadm -T 192.168.8.20:3000 ban.url /image/

Check the varnish server's connection count and hit rate

/usr/local/varnish/bin/varnishstat -n /data/varnish/cache

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

The varnish cluster is now deployed.

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

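Part 3: LNMP cluster deployment

Two points to note:

1. The web data here is shared through the NFS mount described later.

2. The database and the web servers run on separate machines.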

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

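Part 4: MySQL master-slave deployment

MySQL master-slave replication is relatively simple, so it is not explained in depth.

1. Ideally the master and slave run the same MySQL version; the slave's version may be higher than the master's.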

mysql> select version();

+------------+

| version() |

+------------+

| 5.5.12-log |

+------------+

1 row in set (0.00 sec)

I chose 5.5.12 here.

2. On the master, create an account for the slave to connect with

mysql> grant replication slave,replication client on *.* to rep@"192.168.8.41" identified by "rep";

3. Run FLUSH TABLES WITH READ LOCK to lock the tables

mysql> FLUSH TABLES WITH READ LOCK;

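4. Keep the client that issued the FLUSH TABLES statement running so that the read lock stays in effect (the lock is released if the client exits). Then archive the master's data directory and copy it to the slave:

On the master:

shell> tar zcf /tmp/mysql.tgz /data/mysql/data

shell> scp /tmp/mysql.tgz 192.168.8.41:/tmp/

On the slave:

shell> tar zxf /tmp/mysql.tgz -C /

Note: if the master holds no data yet, steps 3 and 4 are not needed.

Read the current binary log name (File) and offset (Position) on the master and write them down: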

mysql> SHOW MASTER STATUS;

+---------------+----------+--------------+------------------+

| File | Position | Binlog_Do_DB | Binlog_Ignore_DB |

+---------------+----------+--------------+------------------+

| binlog.000011 | 349 | | |

+---------------+----------+--------------+------------------+

1 row in set (0.03 sec)

Once the snapshot is taken and the log name and offset (position) are recorded, writes can be re-enabled on the master:

mysql> UNLOCK TABLES;

5. Make sure the [mysqld] section of my.cnf on the master includes a log_bin option

[mysqld]

log_bin=mysql-bin

server-id=1

6. Stop the MySQL server on the slave and add the following lines to its my.cnf:

[mysqld]

replicate-ignore-db = mysql

replicate-ignore-db = test

replicate-ignore-db = information_schema

server-id=2

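7. If you made a binary backup of the master's data, copy it into the slave's data directory before starting the slave.

Make sure the permissions on these files and directories are correct. The user that MySQL runs as must be able to read and write them, just as on the master.

8. Start the slave with the --skip-slave-start option so that it does not immediately try to connect to the master. (Optional)

9. Run the following statement on the slave:

mysql> CHANGE MASTER TO MASTER_HOST='192.168.8.40', MASTER_USER='rep', MASTER_PASSWORD='rep', MASTER_LOG_FILE='binlog.000011', MASTER_LOG_POS=349;

10. Start the slave threads:

mysql> START SLAVE;

11. Verify that the deployment succeeded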

mysql> SHOW slave status \G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.8.40

Master_User: rep

Master_Port: 3306

Connect_Retry: 60

Master_Log_File: binlog.000011

Read_Master_Log_Pos: 349

Relay_Log_File: relaylog.000002

Relay_Log_Pos: 250

Relay_Master_Log_File: binlog.000011

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Replicate_Do_DB:

Replicate_Ignore_DB: mysql,test,information_schema

Replicate_Do_Table:

Replicate_Ignore_Table:

Replicate_Wild_Do_Table:

Replicate_Wild_Ignore_Table:

Last_Errno: 0

Last_Error:

Skip_Counter: 0

Exec_Master_Log_Pos: 349

Relay_Log_Space: 399

Until_Condition: None

Until_Log_File:

Until_Log_Pos: 0

Master_SSL_Allowed: No

Master_SSL_CA_File:

Master_SSL_CA_Path:

Master_SSL_Cert:

Master_SSL_Cipher:

Master_SSL_Key:

Seconds_Behind_Master: 0

Master_SSL_Verify_Server_Cert: No

Last_IO_Errno: 0

Last_IO_Error:

Last_SQL_Errno: 0

Last_SQL_Error:

Replicate_Ignore_Server_Ids:

Master_Server_Id: 1

1 row in set (0.03 sec)

When both Slave_IO_Running and Slave_SQL_Running show Yes, replication is working.
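That check is easy to script. The sketch below reads the text of SHOW SLAVE STATUS \G on standard input and succeeds only when both threads report Yes. The function name slave_ok is my own; how the status text is obtained (for example via the mysql client with suitable credentials) is left to the caller.

```shell
#!/bin/bash
# slave_ok
# Reads "SHOW SLAVE STATUS \G" output on stdin. Returns 0 when both
# Slave_IO_Running and Slave_SQL_Running are "Yes", otherwise 1.
# (Hypothetical helper, not part of MySQL.)
slave_ok() {
    awk -F': *' '
        { gsub(/^[ \t]+/, "", $1) }              # strip the leading indent
        $1 == "Slave_IO_Running"  { io  = $2 }
        $1 == "Slave_SQL_Running" { sql = $2 }
        END { exit !(io == "Yes" && sql == "Yes") }
    '
}
```

Usage, assuming the mysql client can log in without prompting: mysql -e 'SHOW SLAVE STATUS \G' | slave_ok && echo "replication OK"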

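MySQL master-slave replication is now configured. Next comes the comparatively more complex NFS high-availability setup.

Instructions for master-slave failover may be added later if needed.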

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

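Part 5: Highly available NFS storage for the web tier

I. Environment

nfs1 eth0:192.168.8.60 eth1:192.168.52.60 --- primary server

nfs2 eth0:192.168.8.61 eth1:192.168.52.61 --- secondary server

Virtual IP 192.168.8.62 --- provided by Heartbeat; the IP that serves clients

Both servers use /dev/sda5 as the mirrored device

1. Synchronize the clocks (in practice a small drift matters little, but doing this step does no harm)

[iyunv@nfs1 ~]# ntpdate ntp.api.bz

2. Set up hosts entries so the nodes resolve each other

Add the following to /etc/hosts: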

192.168.8.60 nfs1

192.168.8.61 nfs2

II. DRBD installation and configuration

1. Install DRBD

From source:

[iyunv@nfs1 ~]# tar zxf drbd-8.3.5.tar.gz

[iyunv@nfs1 ~]# cd drbd-8.3.5

[iyunv@nfs1 drbd-8.3.5]# make

[iyunv@nfs1 drbd-8.3.5]# make install

With yum:

[iyunv@nfs1 ~]# yum -y install drbd83 kmod-drbd83

2. Load the module

[iyunv@nfs1 ~]# modprobe drbd

[iyunv@nfs1 ~]# lsmod |grep drbd

drbd 300440 0

3. Configure DRBD

[iyunv@nfs1 ~]# mv /etc/drbd.conf /etc/drbd.conf.bak

[iyunv@nfs1 ~]# vi /etc/drbd.conf

Add the following content:

- global {

- usage-count yes;

- }

- common {

- syncer { rate 100M; }

- }

- resource r0 {

- protocol C;

- startup { wfc-timeout 0; degr-wfc-timeout 120; }

- disk { on-io-error detach; }

- net {

- timeout 60;

- connect-int 10;

- ping-int 10;

- max-buffers 2048;

- max-epoch-size 2048;

- }

- syncer { rate 30M; }

- on nfs1 {

- device /dev/drbd0;

- disk /dev/sda5;

- address 192.168.8.60:7788;

- meta-disk internal;

- }

- on nfs2 {

- device /dev/drbd0;

- disk /dev/sda5;

- address 192.168.8.61:7788;

- meta-disk internal;

- }

- }

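4. Create the resource

In my lab environment /dev/sda5 already carried a file system created during OS installation, so that file system has to be destroyed here (skip this step for a newly added disk).

[iyunv@nfs1 ~]# dd if=/dev/zero bs=1M count=1 of=/dev/sda5;sync;sync

1). Create a resource named r0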

[iyunv@nfs1 ~]# drbdadm create-md r0

--== Thank you for participating in the global usage survey ==--

The server's response is:

you are the 1724th user to install this version

Writing meta data...

initializing activity log

NOT initialized bitmap

New drbd meta data block successfully created.

success

2). Start the drbd service

[iyunv@nfs1 ~]# service drbd start

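Enable it at boot

[iyunv@nfs1 ~]# chkconfig drbd on

Perform all of the steps above on both the primary and the secondary.

Once the drbd service is running on each node, check the state of each node: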

[iyunv@nfs1 ~]# cat /proc/drbd

version: 8.3.13 (api:88/proto:86-96)

GIT-hash: 83ca112086600faacab2f157bc5a9324f7bd7f77 build by mockbuild@builder10.centos.org, 2012-05-07 11:56:36

0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----

ns:0 nr:0 dw:0 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:b oos:5236960

[iyunv@nfs2 ~]# cat /proc/drbd

version: 8.3.13 (api:88/proto:86-96)

GIT-hash: 83ca112086600faacab2f157bc5a9324f7bd7f77 build by mockbuild@builder10.centos.org, 2012-05-07 11:56:36

0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----

ns:0 nr:0 dw:0 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:b oos:5236960

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

The following steps are performed on nfs1, the primary

5. Designate the primary node

[iyunv@nfs1 ~]# drbdsetup /dev/drbd0 primary -o

[iyunv@nfs1 ~]# cat /proc/drbd

version: 8.3.13 (api:88/proto:86-96)

GIT-hash: 83ca112086600faacab2f157bc5a9324f7bd7f77 build by mockbuild@builder10.centos.org, 2012-05-07 11:56:36

0: cs:SyncSource ro:Primary/Secondary ds:UpToDate/Inconsistent C r-----

ns:170152 nr:0 dw:0 dr:173696 al:0 bm:9 lo:11 pe:69 ua:39 ap:0 ep:1 wo:b oos:5075552

[>....................] sync'ed: 3.2% (4956/5112)M

finish: 0:03:08 speed: 26,900 (26,900) K/sec

[iyunv@nfs2 ~]# cat /proc/drbd

version: 8.3.13 (api:88/proto:86-96)

GIT-hash: 83ca112086600faacab2f157bc5a9324f7bd7f77 build by mockbuild@builder10.centos.org, 2012-05-07 11:56:36

0: cs:SyncTarget ro:Secondary/Primary ds:Inconsistent/UpToDate C r-----

ns:0 nr:514560 dw:513664 dr:0 al:0 bm:31 lo:8 pe:708 ua:7 ap:0 ep:1 wo:b oos:4723296

[>...................] sync'ed: 9.9% (4612/5112)M

finish: 0:04:41 speed: 16,768 (19,024) want: 30,720 K/sec

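The primary and secondary are now transferring data; synchronization finishes shortly.

After synchronization completes, the output looks like this: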

[iyunv@nfs1 ~]# cat /proc/drbd

version: 8.3.13 (api:88/proto:86-96)

GIT-hash: 83ca112086600faacab2f157bc5a9324f7bd7f77 build by mockbuild@builder10.centos.org, 2012-05-07 11:56:36

0: cs:Connected ro:Primary/Secondary ds:UpToDate/UpToDate C r---n-

ns:5451880 nr:0 dw:214920 dr:5237008 al:73 bm:320 lo:0 pe:0 ua:0 ap:0 ep:1 wo:b oos:0

[iyunv@nfs2 ~]# cat /proc/drbd

version: 8.3.13 (api:88/proto:86-96)

GIT-hash: 83ca112086600faacab2f157bc5a9324f7bd7f77 build by mockbuild@builder10.centos.org, 2012-05-07 11:56:36

0: cs:Connected ro:Secondary/Primary ds:UpToDate/UpToDate C r-----

ns:0 nr:5451880 dw:5451880 dr:0 al:0 bm:320 lo:0 pe:0 ua:0 ap:0 ep:1 wo:b oos:0
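The fields that matter in this output are cs (connection state), ro (roles) and ds (disk states). As a rough sketch, they can be pulled out of /proc/drbd with sed so a script can wait for the initial sync before formatting the device. The function names drbd_state and drbd_in_sync are my own, and the sketch assumes a single resource.

```shell
#!/bin/bash
# drbd_state
# Reads /proc/drbd-style text on stdin and prints "cs ro ds" for each
# resource line, e.g. "Connected Primary/Secondary UpToDate/UpToDate".
# (Hypothetical helper; assumes the cs:/ro:/ds: layout shown above.)
drbd_state() {
    sed -n 's/^ *[0-9][0-9]*: cs:\([^ ]*\) ro:\([^ ]*\) ds:\([^ ]*\).*/\1 \2 \3/p'
}

# drbd_in_sync
# Returns 0 when the (single) resource on stdin is connected and both
# disks are UpToDate.
drbd_in_sync() {
    [ "$(drbd_state | awk '{print $1, $3}')" = "Connected UpToDate/UpToDate" ]
}
```

Usage: drbd_in_sync < /proc/drbd && echo "safe to continue"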

Format /dev/drbd0 on the primary node (not needed on the secondary)

[iyunv@nfs1 ~]# mkfs.ext3 /dev/drbd0

mke2fs 1.39 (29-May-2006)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

655360 inodes, 1309240 blocks

65462 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=1342177280

40 block groups

32768 blocks per group, 32768 fragments per group

16384 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736

Writing inode tables: done

Creating journal (32768 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 37 mounts or

180 days, whichever comes first. Use tune2fs -c or -i to override.

Mount the partition on the primary node (not needed on the secondary)

[iyunv@nfs1 ~]# mkdir /data

[iyunv@nfs1 ~]# mount /dev/drbd0 /data

[iyunv@nfs1 ~]# mount |grep drbd

/dev/drbd0 on /data type ext3 (rw)

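III. NFS configuration (same steps on primary and secondary)

NFS is usually installed by default

If it is not, install it with yum: yum -y install portmap nfs-utils

1. Edit the NFS configuration file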

[iyunv@nfs1 ~]# cat /etc/exports

/data *(rw,sync,insecure,no_root_squash,no_wdelay)

2. Start NFS

[iyunv@nfs1 ~]# service portmap start

Starting portmap: [ OK ]

[iyunv@nfs1 ~]# service nfs start

Starting NFS services: [ OK ]

Starting NFS quotas: [ OK ]

Starting NFS daemon: [ OK ]

Starting NFS mountd: [ OK ]

Starting RPC idmapd: [ OK ]

[iyunv@nfs1 ~]# chkconfig portmap on

[iyunv@nfs1 ~]# chkconfig nfs on

Note: start portmap first, then nfs.

IV. Heartbeat installation and configuration

1. Install heartbeat

From source:

tar zxf libnet-1.1.5.tar.gz

cd libnet-1.1.5

./configure

make;make install

tar jxf Heartbeat-2-1-STABLE-2.1.4.tar.bz2

cd Heartbeat-2-1-STABLE-2.1.4

./ConfigureMe configure

make;make install

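With yum:

[iyunv@nfs1 ~]# yum -y install libnet heartbeat-devel heartbeat-ldirectord heartbeat

Oddly, the heartbeat package had to be installed with yum twice; the first attempt did not seem to install it.

2. Create the configuration files

[iyunv@nfs1 ~]# cd /etc/ha.d

Create the main configuration file. The primary and secondary differ in one place, as noted in the file

[iyunv@nfs1 ha.d]# vi ha.cf

Add the following content: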

- logfile /var/log/ha.log

- debugfile /var/log/ha-debug

- logfacility local0

- keepalive 2

- deadtime 10

- warntime 10

- initdead 10

- ucast eth1 192.168.52.61 # the peer's eth1 IP goes here; each node points at the other node's IP

- auto_failback off

- node nfs1

- node nfs2

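Create the heartbeat authentication file authkeys; it is identical on the primary and the secondary.

[iyunv@nfs1 ha.d]# vi authkeys

Add the following content:

auth 1

1 crc

Set its permissions to 600

[iyunv@nfs1 ha.d]# chmod 600 /etc/ha.d/authkeys

Create the cluster resource file haresources; it must be identical on the primary and the secondary.

[iyunv@nfs1 ha.d]# vi haresources

Add the following content:

nfs1 IPaddr::192.168.8.62/24/eth0 drbddisk::r0 Filesystem::/dev/drbd0::/data::ext3 killnfsd

Note: IPaddr here is set to the virtual IP address

3. Create the killnfsd script; it is identical on the primary and the secondary.

This script simply restarts the nfs service. After an NFS failover, the directory exported over NFS has to be mounted again; otherwise clients hit a "stale NFS file handle" error.

[iyunv@nfs1 ha.d]# vi /etc/ha.d/resource.d/killnfsd

Add the following content: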

killall -9 nfsd; /etc/init.d/nfs restart; exit 0

[iyunv@nfs1 ha.d]# chmod 755 /etc/ha.d/resource.d/killnfsd

4. Start nfs and heartbeat on the primary and the secondary

[iyunv@nfs1 ha.d]# service heartbeat start

Starting High-Availability services:

2012/06/09_10:27:43 INFO: Resource is stopped

[ OK ]

[iyunv@nfs1 ha.d]# chkconfig heartbeat on

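Start the primary node first, then the secondary.

With the whole environment running, begin with a simple test (simulating a failure on the primary that stops the service):

Before running the test, look at the current overall state.

Primary node: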

[iyunv@nfs1 ha.d]# cat /proc/drbd

version: 8.3.13 (api:88/proto:86-96)

GIT-hash: 83ca112086600faacab2f157bc5a9324f7bd7f77 build by mockbuild@builder10.centos.org, 2012-05-07 11:56:36

0: cs:Connected ro:Primary/Secondary ds:UpToDate/UpToDate C r-----

ns:37912 nr:24 dw:37912 dr:219 al:12 bm:1 lo:0 pe:0 ua:0 ap:0 ep:1 wo:b oos:0

[iyunv@nfs1 ha.d]# mount |grep drbd0

/dev/drbd0 on /data type ext3 (rw)

[iyunv@nfs1 ha.d]# ls /data/

anaconda-ks.cfg install.log lost+found nohup.out sys_init.sh

init.sh install.log.syslog mongodb-linux-x86_64-2.0.5.tgz sedcU4gy2

[iyunv@nfs1 ha.d]# ip a |grep eth0:0

inet 192.168.8.62/24 brd 192.168.8.255 scope global secondary eth0:0

Secondary node:

[iyunv@nfs2 ha.d]# cat /proc/drbd

version: 8.3.13 (api:88/proto:86-96)

GIT-hash: 83ca112086600faacab2f157bc5a9324f7bd7f77 build by mockbuild@builder10.centos.org, 2012-05-07 11:56:36

0: cs:Connected ro:Secondary/Primary ds:UpToDate/UpToDate C r-----

ns:24 nr:37928 dw:37988 dr:144 al:1 bm:1 lo:0 pe:0 ua:0 ap:0 ep:1 wo:b oos:0

[iyunv@nfs2 ha.d]# service heartbeat status

heartbeat OK [pid 7323 et al] is running on nfs2 [nfs2]...

Now stop the heartbeat service on the primary:

[iyunv@nfs1 ha.d]# service heartbeat stop

Stopping High-Availability services:

[ OK ]

Then check on the secondary whether it has taken over the virtual IP

[iyunv@nfs2 ha.d]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast qlen 1000

link/ether 00:0c:29:fc:78:8f brd ff:ff:ff:ff:ff:ff

inet 192.168.8.61/24 brd 192.168.8.255 scope global eth0

inet 192.168.8.62/24 brd 192.168.8.255 scope global secondary eth0:0

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast qlen 1000

link/ether 00:0c:29:fc:78:99 brd ff:ff:ff:ff:ff:ff

inet 192.168.52.61/24 brd 192.168.52.255 scope global eth1

[iyunv@nfs2 ha.d]# mount |grep drbd0

/dev/drbd0 on /data type ext3 (rw)

[iyunv@nfs2 ha.d]# ll /data/

total 37752

-rw------- 1 root root 1024 Jun 15 10:56 anaconda-ks.cfg

-rwxr-xr-x 1 root root 4535 Jun 15 10:56 init.sh

-rw-r--r-- 1 root root 30393 Jun 15 10:56 install.log

-rw-r--r-- 1 root root 4069 Jun 15 10:56 install.log.syslog

drwx------ 2 root root 16384 Jun 15 09:41 lost+found

-rw-r--r-- 1 root root 38527793 Jun 15 10:56 mongodb-linux-x86_64-2.0.5.tgz

-rw------- 1 root root 2189 Jun 15 10:56 nohup.out

-rw-r--r-- 1 root root 101 Jun 15 10:56 sedcU4gy2

-rw-r--r-- 1 root root 4714 Jun 15 10:56 sys_init.sh

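The virtual IP has moved over, the NFS data volume was mounted automatically, and the data is intact.

Switching between the primary and secondary is quite fast, roughly 3 seconds.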
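To time a failover rather than eyeball it, a script can poll for the virtual IP. Below is a minimal sketch of the check itself: it reads ip a output on standard input and succeeds when the given address is configured. The function name has_vip is my own.

```shell
#!/bin/bash
# has_vip ADDRESS
# Reads "ip a" output on stdin. Returns 0 when ADDRESS appears as an
# IPv4 address on some interface, otherwise 1.
# (Hypothetical helper; matching on "inet ADDRESS/" keeps 192.168.8.62
# from matching 192.168.8.620 or 1192.168.8.62.)
has_vip() {
    local vip=$1
    grep -Fq "inet ${vip}/"
}
```

Usage on nfs2 after stopping heartbeat on nfs1: until ip a | has_vip 192.168.8.62; do sleep 1; done; echo "VIP acquired"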

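If the primary node has to be taken out of service, for example because of failing hardware, and the Secondary must be promoted to Primary, proceed as follows:

On the primary host, first unmount the DRBD device.

[iyunv@nfs1 /]# umount /dev/drbd0

Demote the host to secondary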

[iyunv@nfs1 /]# drbdadm secondary r0

[iyunv@nfs1 /]# cat /proc/drbd

1: cs:Connected st:Secondary/Secondary ds:UpToDate/UpToDate C r---

....... (omitted)

....... (omitted)

Both hosts are now secondary.

On nfs2, the secondary, promote it to primary.

[iyunv@nfs2 /]# drbdadm primary r0

[iyunv@nfs2 /]# cat /proc/drbd

1: cs:Connected st:Primary/Secondary ds:UpToDate/UpToDate C r---

....... (omitted)

....... (omitted)

nfs2 is now the primary.

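When the primary shows Primary/Unknown and the secondary shows Secondary/Unknown, the following steps resolve it:

1. On the secondary: drbdadm -- --discard-my-data connect all

2. On the primary: drbdadm connect all

These two steps are usually enough.

DRBD split brain can be handled either by a script or by hand; manual recovery is recommended. This problem generally occurs rarely.

Manual split-brain recovery:

On the secondary:

drbdadm secondary r0

drbdadm disconnect all

drbdadm -- --discard-my-data connect r0

On the primary:

drbdadm disconnect all

drbdadm connect r0

Some online sources say that adding the following parameters to drbd.conf resolves split brain automatically; in that case the primary and secondary synchronize in both directions.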

net {

after-sb-0pri discard-older-primary;

after-sb-1pri call-pri-lost-after-sb;

after-sb-2pri call-pri-lost-after-sb;

}
